Harnessing Waste Heat is the Latest Frontier in Data Center Efficiency

The increase in power-hungry AI hardware underscores the need for data centers to adopt waste heat reuse solutions.

May 7, 2024

Data centers have been on an energy efficiency drive for two decades. They continue to find ways to lower energy usage, cool more effectively, and reduce operational costs. The latest frontier in this endless quest is the capture and reuse of waste heat.

The quantity of waste heat is only going to increase in data centers as AI applications are added and more liquid cooling is used. This growing trend underscores the need for data centers to adopt waste heat reuse solutions, not only to enhance energy efficiency but also to mitigate environmental impact and operational costs.

“Heat rejection from chips to the facility remains challenging,” said Peter de Bock, Program Director for the ARPA-E COOLERCHIPS Program.

While channeling waste heat out of the facility is one approach, it is far more efficient and environmentally friendly to utilize waste heat to generate power, steam, heating, or even cooling. This is a vibrant area of research.

“Facilities can reduce the cost burden and their carbon footprint by finding ways to harness waste heat,” said Kyle Mangini, who looks after all laboratory and mechanical systems at the Amherst College Science Center.

Multifunction Campuses

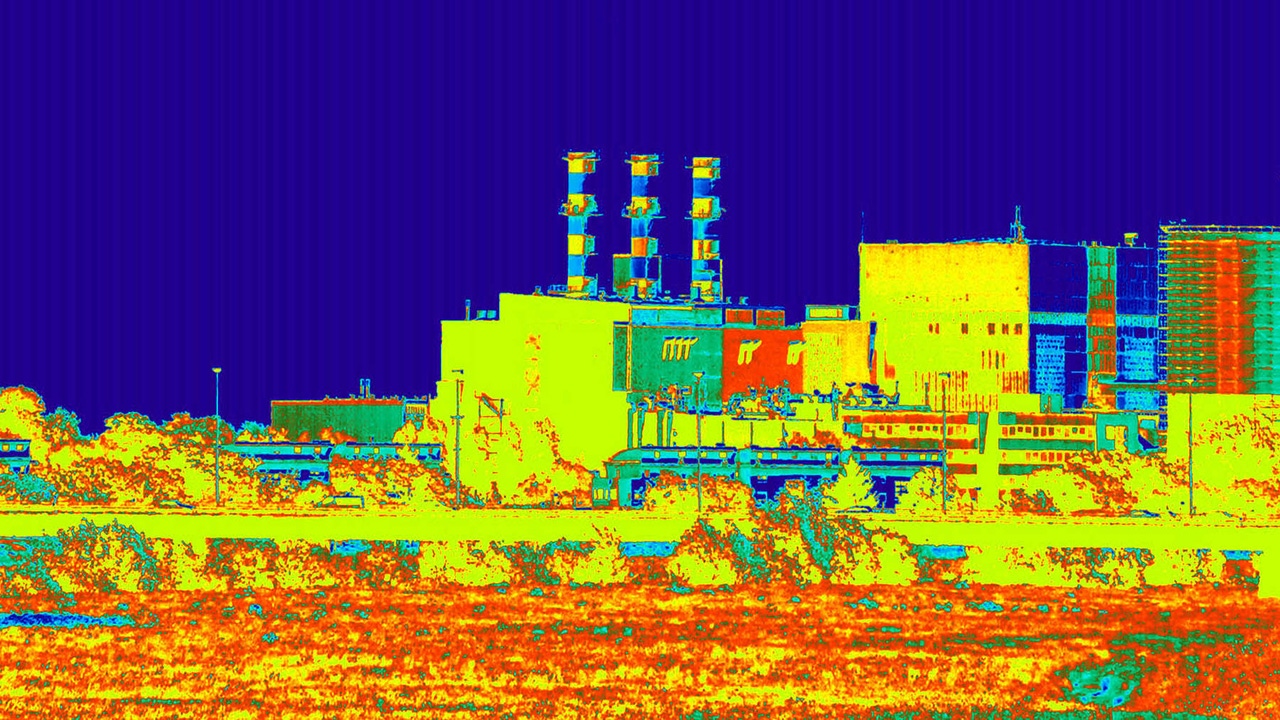

Brian Rener, Mission Critical Leader at SmithGroup, believes there are plenty of sustainability and efficiency opportunities when data centers are collocated within multifunction buildings or campus settings. Since data centers require continuous cooling, they expel large quantities of waste heat from servers and other equipment that can be harnessed in various ways to reduce overall energy consumption and further lower power usage effectiveness (PUE).

“As we expect a doubling of data center electricity consumption over the next couple of years, most data centers don’t have the option of moving to more northerly locations to take advantage of cool outside air as a way to improve sustainability,” said Rener. “Alternatively, they can gain value from their waste heat by collocating in mixed-use environments, within campuses or becoming more embedded within local communities.”

Build Smart

In the movie ‘Field of Dreams,’ Ray Kinsella (Kevin Costner) is told, “Build it and they will come.” He does so – in the middle of an Iowa cornfield. That may have worked in the movie, but Rener doesn’t see a cornfield as the best place for a data center. Such a location makes it hard to maximize the value of waste heat. Instead, his advice is to seek out a larger community and have the data center be part of a broader energy solution.

Rener backs up his assertion by citing data from the US Energy Information Administration (EIA) showing that space heating is the largest single energy end use in commercial buildings at 32%. Cooling accounted for only 9%, though that share would be much larger in Southern states. A single large building consumes 1 MW for heating, 25 city blocks require 10 MW, and an 8.5 million square foot commercial property needs 100 MW for heating alone.

Now factor in data center density. High-Performance Computing (HPC) and AI are driving rack densities to 100 kW and beyond. Aisles are being packed with processors with more power than ever.

“All processors are getting bigger and hotter,” said Shen Wang, principal analyst at Omdia. “Since 2000, the power consumption of processors has increased by 4.6 times.”

This certainly poses a serious problem in terms of power and cooling. But it also opens up an opportunity due to the sheer quantity of much hotter waste heat being emitted from chips and servers.
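As a rough illustration of the scale involved, the sketch below compares the heating loads cited above with the heat given off by 100 kW racks. It assumes that essentially all electrical power drawn by a rack ends up as heat, and it uses a hypothetical `capture_fraction` parameter (not a figure from the article) for the share of that heat that can actually be recovered.

```python
import math

# Heating loads cited in the article, in kW.
HEATING_LOADS_KW = {
    "single large building": 1_000,
    "25 city blocks": 10_000,
    "8.5M sq. ft. commercial property": 100_000,
}

RACK_POWER_KW = 100  # rack density mentioned in the article


def racks_needed(heating_load_kw, rack_power_kw=RACK_POWER_KW, capture_fraction=1.0):
    """Return how many racks' recovered heat would cover a heating load.

    capture_fraction is a hypothetical share of rack heat that is recoverable;
    real values depend on the cooling design and are not given in the article.
    """
    if not 0 < capture_fraction <= 1:
        raise ValueError("capture_fraction must be in (0, 1]")
    recoverable_kw_per_rack = rack_power_kw * capture_fraction
    return math.ceil(heating_load_kw / recoverable_kw_per_rack)


if __name__ == "__main__":
    for name, load_kw in HEATING_LOADS_KW.items():
        count = racks_needed(load_kw, capture_fraction=0.5)
        print(f"{name}: about {count} racks at 50% heat capture")
```

Even with an assumed capture rate well below 100%, a modest number of high-density racks is on the same order as the heating load of a large building, which is the basis of the case for siting data centers near heat consumers.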

College Campus Cooling

Milwaukee School of Engineering provides a useful case study in waste heat reuse. It added a computer science building with a supercomputer inside known as Rosie. This Nvidia GPU-accelerated supercomputer helps students study AI, drones, robotics, and autonomous vehicles. While the supercomputer room occupies only 1,500 sq. ft. of the 65,000 sq. ft. structure, it consumes over 60% of its energy. As such, the system needed to be seamlessly integrated into the building and mechanical/electrical/plumbing infrastructure. The data center is designed for N+1 redundancy, allowing it to remain functional even in the event of a single component failure, thanks to multiple backup generators and cooling units.

The engineering team developed a symbiotic relationship between the academic building energy systems and the supercomputer systems. For instance, the computer room and academic building use the same cooling system during summer months, when the facility’s chilled water return line is used for data center supply. By elevating the chilled water return, cooling efficiencies for the whole building were increased. During the winter, when the academic building no longer requires mechanical cooling, the computer facility utilizes cold outside air via dedicated air-cooled roof-top condensers and integrated free-cooling circuits.

“The use of waste heat is key in raising data center and building efficiency,” said Jamison Caldwell, Principal Mechanical Engineer at SmithGroup.

As well as finding ways to harness waste heat from hot aisles, he pointed out that liquid cooling equipment also emits radiant heat. He works with the energy reuse factor (ERF), a metric calculated from the amount of reused energy relative to the total energy consumed.
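The energy reuse factor can be sketched in a few lines. The example below assumes the common reading of the metric as reused energy divided by total energy consumed, which matches Caldwell's description; the function name and the sample figures are hypothetical and are not taken from any SmithGroup project.

```python
def energy_reuse_factor(reused_kwh, total_kwh):
    """Return reused energy as a fraction of total energy consumed.

    Assumes ERF = reused / total, so the result falls between 0 and 1.
    """
    if total_kwh <= 0:
        raise ValueError("total_kwh must be positive")
    if not 0 <= reused_kwh <= total_kwh:
        raise ValueError("reused_kwh must be between 0 and total_kwh")
    return reused_kwh / total_kwh


if __name__ == "__main__":
    # Hypothetical facility: 1,000 kWh consumed, 250 kWh recovered for heating.
    erf = energy_reuse_factor(reused_kwh=250, total_kwh=1_000)
    print(f"ERF = {erf:.2f}")
```

Under this definition, a value of 0 means no heat is reused and a value closer to 1 means most of the energy consumed is put to a second use.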

“There are numerous campus-level opportunities for waste heat,” said Caldwell.

NREL Energy Recovery Loop

The National Renewable Energy Laboratory (NREL) designed its Energy Systems Integration Facility (ESIF) to match the heating demands of its labs and offices with its supercomputer-based data center, making the entire building more energy efficient. The data center has achieved a PUE of 1.04, making it one of the most efficient in the world.
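To put a PUE of 1.04 in perspective, the sketch below uses the standard definition of PUE as total facility energy divided by IT equipment energy. The 1,000 kW IT load is a hypothetical figure chosen for easy arithmetic, not NREL's actual load, and the 1.5 comparison value is likewise only an illustrative assumption.

```python
def pue(total_facility_kw, it_kw):
    """Power usage effectiveness: total facility power over IT power."""
    if it_kw <= 0:
        raise ValueError("it_kw must be positive")
    if total_facility_kw < it_kw:
        raise ValueError("total facility power cannot be less than IT power")
    return total_facility_kw / it_kw


def overhead_kw(it_kw, pue_value):
    """Power spent on cooling, power delivery, and other non-IT overhead."""
    if pue_value < 1:
        raise ValueError("PUE cannot be below 1")
    return it_kw * (pue_value - 1)


if __name__ == "__main__":
    it_load_kw = 1_000  # hypothetical IT load
    for value in (1.04, 1.5):  # 1.04 from the article; 1.5 is illustrative
        extra = overhead_kw(it_load_kw, value)
        print(f"PUE {value}: about {extra:.0f} kW of overhead per {it_load_kw} kW of IT load")
```

At a PUE of 1.04, only about 4% is added on top of the IT load for cooling and other overhead, which is why values this close to 1.0 are considered exceptional.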

“100% of office heating is done through waste heat reuse, and water use has been cut in half,” said Caldwell. “If there is a sudden need for more heat, campus steam can be used.”

This is achieved courtesy of an energy recovery water loop that spans campus heating and cooling systems, supercomputing systems, and legacy IT systems, and gathers waste heat from both liquid and air-cooling systems. Caldwell characterized this as building-level energy exchange.

“Liquid cooling allows for higher grade hydronic heat recovery,” he said.

Incremental Gains

Liquid cooling could result in a major shift in data center cooling efficiency and bring about drastically lower PUEs – or at least prevent PUEs from rising as rack density soars. But Jason Matteson, vice president of customer solutions architecture at Iceotope, pointed out that the potential gains from liquid cooling could be squandered due to various areas of waste, including a lack of hot air containment, missing blanking panels, failure to replace power-hogging fans, and more.

“There is a lot of waste present in most data centers that we can eliminate,” said Matteson.
