How a New Two-Phase System Aims to Revolutionize Data Center Cooling

The Hybrid Mechanical Capillary-Drive Two-Phase Loop seeks to make traditional cooling a thing of the past.

Data centers are booming. From Virginia to California, in rural spaces and near urban sprawl, we build data centers to reduce latency and meet the demand for growing capacity. These demands are only expected to increase as we embrace the newest technological leap: AI.

AI promises to solve the climate crisis, innovate healthcare, and help us reconnect with our past. And now, with generative AI tools like ChatGPT, the adoption of this new technology will only accelerate.

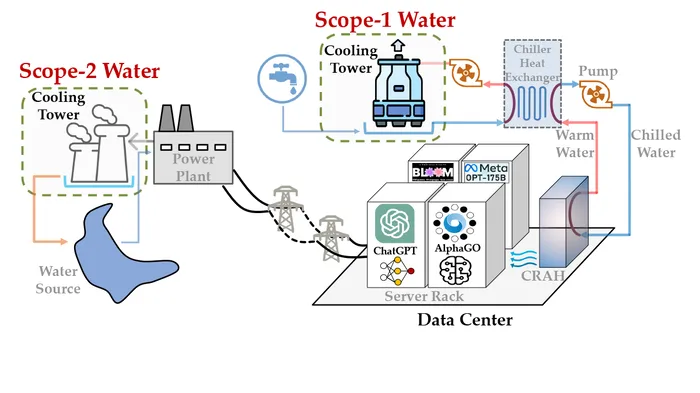

Inevitably, this boom raises questions about data center sustainability, particularly amid water shortages in places like Oregon and Arizona. Much of the environmental impact centers on one fact: processors get hot. Keeping hot processors cool takes a lot of energy and water, because current cooling technology revolves around evaporative cooling. In Iowa, where Microsoft’s data centers trained OpenAI’s ChatGPT, the six West Des Moines data centers gulped 6% of the water in the district.

However, the COOLERCHIPS initiative from the US Department of Energy has sought to address these issues by funding promising and innovative technology “to reduce total cooling energy expenditure to less than 5% of a typical data center’s IT load at any time,” which should also reduce the CO2 footprint.
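To put that target in concrete terms, the short Python sketch below computes the cooling power budget for a given IT load. The 5% fraction comes from the initiative's stated goal; the 1,000 kW IT load and the two example cooling figures are hypothetical values chosen only for illustration.

```python
# Target from the COOLERCHIPS goal quoted above: cooling energy below 5% of IT load.
COOLING_TARGET_FRACTION = 0.05

def max_cooling_power_kw(it_load_kw):
    """Upper bound on cooling power (kW) for a given IT load (kW)."""
    return COOLING_TARGET_FRACTION * it_load_kw

def meets_target(cooling_kw, it_load_kw):
    """True if cooling power stays strictly below the 5% budget."""
    return cooling_kw < max_cooling_power_kw(it_load_kw)

# Hypothetical facility: 1,000 kW of IT load (not a figure from the article).
it_load_kw = 1000.0
print(f"Cooling budget: {max_cooling_power_kw(it_load_kw):.0f} kW")
print("40 kW of cooling meets target:", meets_target(40.0, it_load_kw))
print("80 kW of cooling meets target:", meets_target(80.0, it_load_kw))
```

Under these assumed numbers, a 1,000 kW facility would have roughly 50 kW available for cooling at any moment.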

Out of the University of Missouri, one COOLERCHIPS project seeks to redefine the cooling landscape by making traditional evaporative cooling a thing of the past.

The Hybrid Mechanical Capillary-Drive Two-Phase Loop (HTPL)

The Hybrid Mechanical Capillary-Drive Two-Phase Loop (HTPL) is a two-phase cooling system. Like many contemporary data center cooling systems, it uses a liquid, like water, to cool a hot chip. The chip heats the liquid until it changes from a liquid to a vapor. This ‘phase change’ allows the vapor to carry the heat away from the chip to a place where it can cool and condense back into a liquid.

In a traditional system, chillers evaporate water into the air to carry heat away, so the system needs a steady supply of fresh water to replenish what is lost. But HTPL is a two-phase closed system, meaning there isn’t a need for large-scale thirsty chillers, noisy rooftop evaporators, or cooling towers constantly fed by local fresh water.

Dr Chanwoo Park, project lead of the HTPL project at the University of Missouri, told Data Center Knowledge that “water consumption remains at zero throughout its operation, with the only exceptions being maintenance events.”

According to Dr Park, HTPL utilizes several innovative elements that remove the need for constant water replenishment. Firstly, it features an advanced, super-efficient heat handler in its evaporator with a large surface area, over 150 square centimeters, for moving heat around. It’s exceptional at managing heat – more than 300 watts per square centimeter – with its low thermal resistance (less than 0.01 K-cm²/W) when water is used as the cooling liquid.
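A quick back-of-the-envelope calculation shows what these figures imply. The Python sketch below uses the standard relation between heat flux and area-normalized thermal resistance (temperature rise = heat flux × resistance) and treats the quoted numbers as boundary values. Applying the peak heat flux uniformly over the full evaporator area is an assumption made here for illustration, not a claim from the project.

```python
# Figures quoted in the article, treated here as boundary values.
HEAT_FLUX_W_PER_CM2 = 300.0             # "more than 300 watts per square centimeter"
THERMAL_RESISTANCE_K_CM2_PER_W = 0.01   # "less than 0.01 K-cm2/W"
EVAPORATOR_AREA_CM2 = 150.0             # "over 150 square centimeters"

def temperature_rise_k(heat_flux, resistance):
    """Temperature difference across the evaporator: dT = q'' * R''."""
    return heat_flux * resistance

def total_heat_w(heat_flux, area):
    """Total heat load, ASSUMING the flux is uniform over the whole area."""
    return heat_flux * area

delta_t = temperature_rise_k(HEAT_FLUX_W_PER_CM2, THERMAL_RESISTANCE_K_CM2_PER_W)
load_w = total_heat_w(HEAT_FLUX_W_PER_CM2, EVAPORATOR_AREA_CM2)

print(f"Temperature rise: about {delta_t:.1f} K")
print(f"Heat load at uniform flux: about {load_w / 1000:.0f} kW")
```

At these boundary values, the evaporator surface would sit only about 3 K above the boiling liquid while moving 300 watts through every square centimeter.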

[Figure: HTPL concept]

The evaporator also has an efficient design that disperses the liquid into a super-thin layer before turning it into vapor through its capillary heat pipes. It is a proven method in cooling electronics that works even better when the liquid flows against the heat. This achieves excellent cooling results at scale; Dr Park claims it can handle heat ten times better than regular cooling systems. In addition, the HTPL system can be made bigger or smaller as needed, so it can be used to cool bigger computer chips of the future – even if the chip is as large as 150 square centimeters.

What makes the HTPL special, however, is its ability to work in both passive and active modes. “In passive mode, it behaves like a loop thermosyphon,” Dr Park said, “while in active mode, it operates like a pumped two-phase loop. This flexibility allows it to switch modes as needed, ensuring reliability, performance, and energy efficiency.” This is similar to modern combustion car engines that shut themselves down temporarily while idling at a stop light to save energy.

Another technology that separates the HTPL from its competitors is its capillary-driven phase separation, which allows thin-film boiling to work without flooding the boiling surface. “The HTPL system distinguishes itself through its exceptional energy efficiency, surpassing emerging liquid cooling technologies by a factor of 100,” Dr Park said. “This amazing efficiency is mainly because of how it boils the liquid in a way that uses very little energy for pumping and has a high capacity for absorbing heat.”

With its ability to be scaled up or compacted, the HTPL system offers many possible cooling applications, from concentrated solar power to unmanned aerial systems. “It is particularly well-suited for applications where a compact, lightweight, and energy-efficient cooling system is essential,” Dr Park said.

The Pressing Need

Dr Sasha Luccioni, a researcher at AI incubator Hugging Face and a founding member of Climate Change AI, argues that the environmental impacts of generative AI models are largely being ignored. Part of the problem is that they aren’t being measured, Luccioni said:

“For instance, with ChatGPT, which was queried by tens of millions of users at its peak a month ago, thousands of copies of the model are running in parallel, responding to user queries in real time, all while using megawatt hours of electricity and generating metric tons of carbon emissions. It’s hard to estimate the exact quantity of emissions this results in, given the secrecy and lack of transparency around these big LLMs [Large Language Models].”

With the available data, researchers at UC Riverside and UT Arlington tried to estimate the water consumption of generative AI programs such as ChatGPT. Their paper, “Making AI Less ‘Thirsty’: Uncovering and Addressing the Secret Water Footprint of AI Models,” which has yet to be peer-reviewed, estimated that “ChatGPT needs to ‘drink’ a 500ml bottle of water for a simple conversation of roughly 20-50 questions and answers, depending on when and where ChatGPT is deployed.”

While a single bottle of water might not seem like much, when the researchers considered the volume of interactions with ChatGPT and other forms of generative AI for 2022, they estimated that data centers used about “1.5 billion cubic meters of water withdrawal in the US, accounting for about 0.33% of the total US annual water withdrawal,” or roughly double the water withdrawal of the country of Denmark. If the boom in data centers continues, the researchers suspect that water consumption by data centers will double again by 2027.
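The arithmetic behind these estimates is easy to reproduce. The Python sketch below only rearranges the figures quoted from the paper: it divides the 500 ml bottle across 20 to 50 exchanges, and it backs out the total US withdrawal implied by the 0.33% share. The implied total and the doubled 2027 figure are derived here for illustration and are not numbers stated by the researchers.

```python
# Figures quoted from the (not yet peer-reviewed) paper.
BOTTLE_ML = 500.0
EXCHANGES_LOW, EXCHANGES_HIGH = 20, 50
DATA_CENTER_WITHDRAWAL_M3 = 1.5e9   # US data centers, 2022 estimate
SHARE_OF_US_TOTAL = 0.0033          # "about 0.33%"

def water_per_exchange_ml(exchanges):
    """Water attributed to one question-and-answer exchange."""
    return BOTTLE_ML / exchanges

per_exchange_max = water_per_exchange_ml(EXCHANGES_LOW)    # fewer exchanges per bottle
per_exchange_min = water_per_exchange_ml(EXCHANGES_HIGH)   # more exchanges per bottle

# Derived, not quoted: total US withdrawal implied by the 0.33% share.
implied_us_total_m3 = DATA_CENTER_WITHDRAWAL_M3 / SHARE_OF_US_TOTAL

# Derived, not quoted: "double again by 2027" applied to the 2022 figure.
projected_2027_m3 = 2 * DATA_CENTER_WITHDRAWAL_M3

print(f"Water per exchange: {per_exchange_min:.0f} to {per_exchange_max:.0f} ml")
print(f"Implied total US withdrawal: {implied_us_total_m3 / 1e9:.0f} billion m3")
print(f"Projected 2027 data center withdrawal: {projected_2027_m3 / 1e9:.1f} billion m3")
```

In other words, each exchange would account for somewhere between 10 and 25 ml of water, depending on when and where the model runs.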

These estimates make the application of the HTPL all the more appealing. Its application in new and old data centers would significantly reduce the demand for water while reducing energy consumption, both major objectives of the COOLERCHIPS initiative.

According to COOLERCHIPS Director Dr Peter de Bock, “Facilities for large data centers are typically structures that are built for 15-20 years of use, and technology adoption might be modest at first as there is a large installed base of existing infrastructure.” Yet the HTPL’s potential 100-fold increase in efficiency, combined with the current generative AI boom, may make its impact felt sooner rather than later.

Still, as Luis Colon, senior technology evangelist for Fauna.com, notes, the question remains what to do with the legacy equipment.

“The side-effect of replacing a lot of old iron – costlier, inefficient machines that run warmer, weigh more, and waste a lot of space for the computing power they provide. I hope the [COOLERCHIPS] program stresses the need for circular practices and incentivizes proper recycling since less than one-fifth of all e-waste is appropriately managed and recycled.”
