IBM Research Wants to Have Next-Gen AI Chips Ready When Watson Needs Them

The company's researchers expect to improve AI compute performance by 1,000 times over the next 10 years.

IBM wants to develop next-generation artificial intelligence chips, and it’s building a new AI research center and partnering with academia and other tech companies to do it.

At the recently announced future AI Hardware Center at SUNY Polytechnic Institute in Albany, New York, IBM researchers will collaborate with academic researchers and tech partners to develop, prototype, and test new AI chips and systems. Initial partners include Samsung, Mellanox Technologies, Synopsys, Applied Materials, and Tokyo Electron.

The IBM Research division, which has designed several prototypes of its Digital AI cores and Analog AI cores in recent years, will continue to develop these chips at the center, Jeff Burns, IBM Research’s director of AI Compute and director of the future AI Hardware Center, said. These new processors are expected to result in a 1,000-times improvement in AI compute performance efficiency over the next 10 years.
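To put the 1,000-times-in-10-years target in perspective, the short calculation below works out the yearly gain it would imply. It is only a sketch: it assumes the improvement compounds at a steady rate each year, which is an assumption made here for illustration and not something IBM has stated, and the variable names are hypothetical.

```python
# Back-of-the-envelope arithmetic: what steady yearly gain compounds
# to a 1,000x improvement after 10 years? (Steady compounding is an
# assumption for illustration, not a claim from IBM.)
target_gain = 1000
years = 10

annual_factor = target_gain ** (1 / years)

print(f"Implied yearly improvement: about {annual_factor:.3f}x")
```

Under that assumption, the target works out to roughly doubling performance efficiency every year.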

“We have a very aggressive roadmap,” Burns said in an interview with Data Center Knowledge. “The purpose of this center is to be in front of the needs of the Watson and IBM Systems divisions, so we can have things prepared for them to incorporate when the time is right.”

Nvidia currently dominates the AI chip market, but established companies and startups alike are developing their own AI chips to meet the needs of a fast-growing market that includes everything from machine learning and data analytics workloads in data centers to emerging AI-enabled edge applications, such as self-driving cars and consumer electronics.

Intel, for example, plans to ship two Nervana Neural Network processors later this year: one for training and the other for inferencing, which puts a trained model to work, drawing conclusions or making predictions. Amazon Web Services and Google have designed AI chips for their own data centers. Two to three dozen venture capital-funded chip startups are also bringing AI processors to market, said Peter Rutten, research director with IDC’s Infrastructure Systems, Platforms and Technologies Group.

IBM’s decision to launch the AI Hardware Center makes strategic sense for the company, because it sells systems and wants them optimized for AI, he said. “That’s why they are doing semiconductor research. They have to solve the fundamental scientific problems that AI poses to the computing environment. You have to do fundamental research, basically start from scratch, figure out what AI requires and be open-minded – like they are doing with analog cores.”

IBM’s AI Chip Strategy: Digital and Analog AI Cores

With the launch of the center, IBM and its partners aim to make advancements in a range of technologies, from chip-level devices, materials, and architecture to the software that supports AI workloads, Mukesh Khare, IBM Research VP of semiconductor and AI hardware, wrote in a blog post announcing the center.

“Today’s systems have achieved improved AI performance by infusing machine learning capabilities with high-bandwidth CPUs and GPUs, specialized AI accelerators, and high-performance networking equipment,” Khare wrote. “To maintain that trajectory, new thinking is needed to accelerate AI performance scaling to match to ever-expanding AI workload complexities.”

IBM, for example, pairs its current Power9 processor with Nvidia GPUs in the IBM AC922 server. That server powers Summit, the world’s fastest supercomputer, which recently launched at the US Department of Energy’s Oak Ridge National Laboratory. Researchers there are applying AI and machine learning to genetic and biomedical datasets to assist with drug discovery and to better understand human health and diseases.

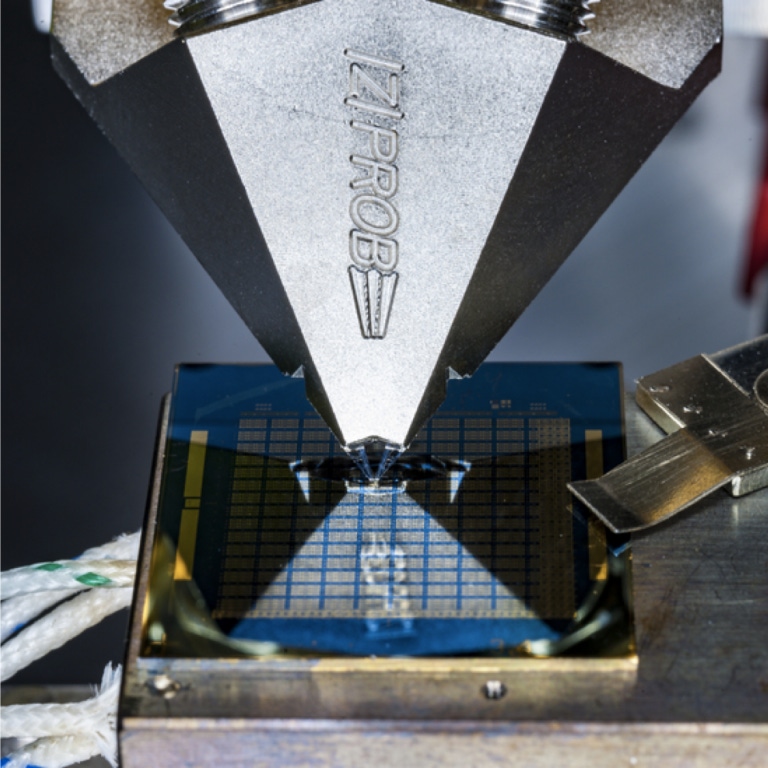

IBM’s next-generation AI processors will include the company’s Digital AI and Analog AI cores, which the company has been developing in recent years, Burns said.

Instead of using 32-bit or 16-bit precision, IBM has designed prototypes of its Digital and Analog AI chips that use 8-bit precision, which boosts speed and improves energy efficiency without sacrificing accuracy, the company said in December.

At 8-bit precision, IBM’s Digital AI core achieves full accuracy while completing training two to four times faster than today’s systems, according to the company. Meanwhile, with 8-bit precision and in-memory computing with its Analog AI core, IBM doubles the accuracy of previous analog chips while consuming 33 times less energy than a digital architecture with the same precision, the company said.
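For readers unfamiliar with reduced precision, the sketch below shows the general trade-off: storing values in 8 bits takes a quarter of the memory of 32-bit values, at the cost of a small, bounded rounding error. It uses generic symmetric integer quantization, chosen here only for illustration. It is not IBM's number format, training method, or analog in-memory design, which the article does not detail, and the function names are hypothetical.

```python
import random

def quantize_int8(values):
    """Map floats to signed 8-bit codes with one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the symmetric range [-127, 127].
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float values from the 8-bit codes."""
    return [c * scale for c in codes]

random.seed(0)
# Stand-in for model weights; real weights would come from a trained network.
weights = [random.uniform(-1.0, 1.0) for _ in range(1000)]

codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

max_error = max(abs(w - r) for w, r in zip(weights, restored))
bytes_fp32 = 4 * len(weights)  # 32 bits = 4 bytes per value
bytes_int8 = 1 * len(weights)  # 8 bits = 1 byte per value

print(f"Storage: {bytes_fp32} bytes at 32-bit vs {bytes_int8} bytes at 8-bit")
print(f"Largest rounding error: {max_error:.5f} (half-step bound: {scale / 2:.5f})")
```

The rounding error never exceeds half of one quantization step, which is part of why carefully designed low-precision hardware can save memory and energy while keeping accuracy close to the full-precision result.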

“We have a number of AI prototypes in both the digital and analog space, and we are aggressively pursuing both at the center,” Burns said. “There will be more and more opportunities to further improve the hardware in future versions.”

The performance improvement makes Analog AI cores a perfect fit for edge applications, said Cindy Goldberg, program director at the AI Hardware Center.

“At IBM, we tend to obsess with data centers, servers, and the cloud, but when you have this type of a breakthrough in performance efficiency, you can deploy it much more broadly,” she said.

Investment in the AI Hardware Center benefits the AI hardware industry as a whole because IBM Research shares its findings, Rutten said. For example, during the development of its Digital and Analog AI cores, the company has regularly published its findings in scientific publications.

“The research they are focusing on in this center has been going on for several years, and it’s important research,” Rutten said. “It’s good news for the overall AI hardware industry, because as IBM gets more resources and partnerships and builds an ecosystem around their AI hardware research, everyone gets to benefit from it.”

Eventually, of course, IBM will productize its research and keep some of its innovations to itself, Rutten said.

“They are attempting to tackle big problems, and at some point, some secret sauce will not be available, but right now, it’s fairly open,” he said.

A $2 Billion Investment in New York

The center will be part of a $2 billion investment that IBM is making to increase its footprint at SUNY and throughout the state. As part of the announcement, IBM will invest at least $30 million for AI research across the SUNY system, with SUNY matching up to $25 million in funds.

Empire State Development, New York State’s economic development arm, will provide a five-year $300 million capital grant for SUNY to purchase and install tools needed for the AI Hardware Center.

IBM has had a research facility at SUNY Polytechnic Institute for close to 20 years, and some of the development work for the Power9 chip was conducted there, so it makes sense to make the campus the headquarters for the future AI Hardware Center, Goldberg said.

“At IBM, we have invested so much into building that facility and the talent that’s there,” she said. “It’s the natural place for us to invest and continue to grow. It’s the perfect fit and part of our continuing and ongoing relationship with the state of New York and SUNY.”
