IBM Launches Storage Server for AI Workloads

ESS 3500 is up to 12% faster than previous-generation systems.

May 25, 2022

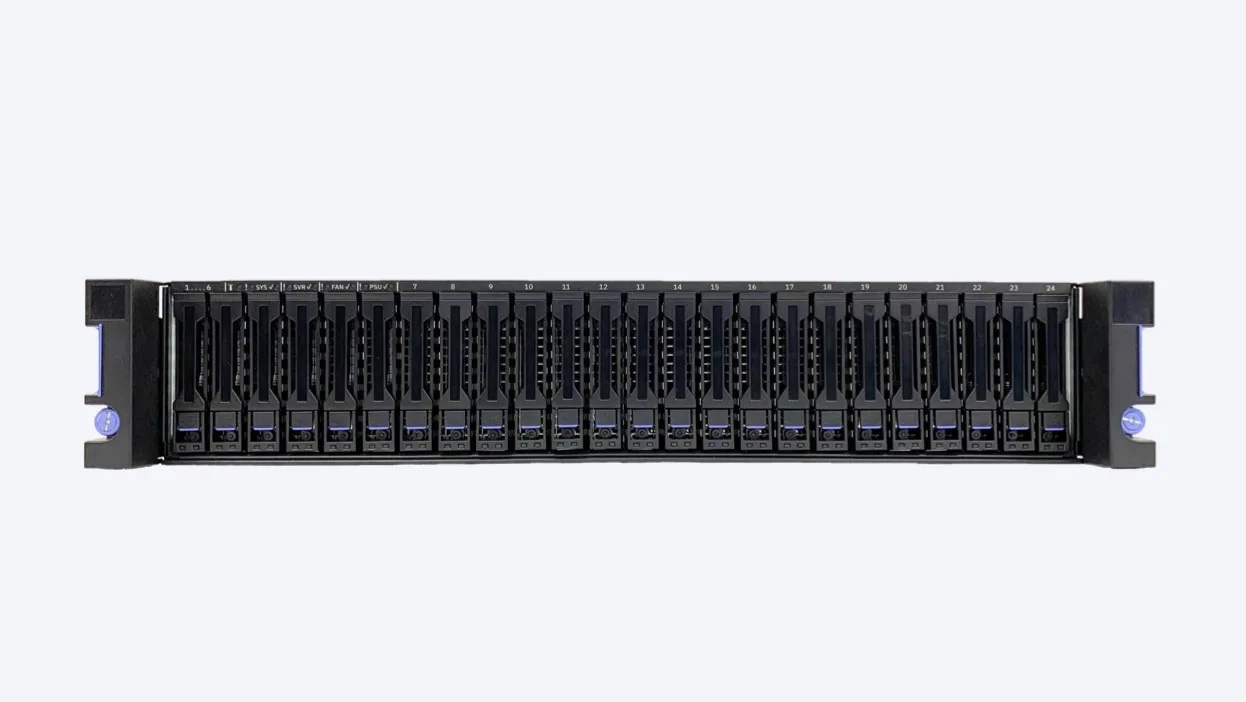

IBM has unveiled the Elastic Storage System 3500 – a 2U storage server designed specifically for AI training workloads.

The new unit houses 24 drive bays and has a maximum raw capacity of 368TB of NVMe storage.

Making use of Spectrum Scale, IBM’s high-performance clustered file system software, the ESS 3500 can achieve up to 91GB/s of throughput.

The system is designed to "break performance barriers" for AI – with increased throughput enabling GPUs to solve AI problems faster.

The new offering can be combined with other IBM storage units – including previous entries in the ESS line. It is also compatible with non-IBM storage. Because its throughput is aimed at keeping GPUs supplied with data, it could be paired with Nvidia DGX A100 systems – which are GPU-focused but have limited built-in storage.

The solution comes with Spectrum Scale installed on a pre-configured system, with updates delivered at speed through containerized software.

ESS 3500 key specs

System features

Dual 1-socket Storage Controllers, Active/Active

1024 GB memory

De-Clustered RAID supporting erasure coding schemas: 3-way replication, 4-way replication, 4+2P, 4+3P, 8+2P, 8+3P

Performance

AMD EPYC 7642 48-core processor

Sequential read performance up to 91GB/s

Adapters

Four x16 PCIe Gen4 adapter slots

Drive support

12 or 24 NVMe SSDs (3.84TB, 7.68TB or 15.36TB)

Software

ESS software 6.1.3.0

IBM Spectrum Scale for ESS 5.1.3.1

Red Hat Enterprise Linux (RHEL) 8.4
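As a rough illustration of how the drive and RAID figures above relate, the sketch below multiplies drive count by drive size to get raw capacity, then estimates the fraction of raw space left for data under each listed protection scheme. The function names are hypothetical and are not part of any IBM tool. The efficiency figure is the simple data-to-total ratio; it ignores spare space, metadata and file system overhead, so real usable capacity will be lower.

```python
import re

# Values taken from the spec list above.
DRIVE_COUNTS = (12, 24)
DRIVE_SIZES_TB = (3.84, 7.68, 15.36)
SCHEMES = (
    "3-way replication",
    "4-way replication",
    "4+2P",
    "4+3P",
    "8+2P",
    "8+3P",
)


def raw_capacity_tb(drive_count, drive_size_tb):
    """Raw capacity in TB: number of drives times the size of each drive."""
    return drive_count * drive_size_tb


def storage_efficiency(scheme):
    """Fraction of raw space that holds data under a protection scheme.

    Hypothetical helper: a 'k+mP' code stores k data strips per k+m strips,
    and n-way replication stores one copy of the data per n copies written.
    """
    match = re.fullmatch(r"(\d+)\+(\d+)P", scheme)
    if match:
        data, parity = int(match.group(1)), int(match.group(2))
        return data / (data + parity)
    match = re.fullmatch(r"(\d+)-way replication", scheme)
    if match:
        return 1 / int(match.group(1))
    raise ValueError(f"unrecognized scheme: {scheme!r}")


if __name__ == "__main__":
    # Largest configuration: 24 drives of 15.36TB each.
    raw = raw_capacity_tb(max(DRIVE_COUNTS), max(DRIVE_SIZES_TB))
    print(f"Maximum raw capacity: {raw:.2f}TB")
    for scheme in SCHEMES:
        eff = storage_efficiency(scheme)
        print(f"{scheme:>18}: {eff:.0%} of raw, about {raw * eff:.0f}TB before overhead")
```

With 24 drives of 15.36TB the raw figure works out to 368.64TB, which matches the 368TB maximum quoted in the article.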

Full specifications can be found here.

This story originally appeared on AI Business, a Data Center Knowledge sister publication. To stay up-to-date with artificial intelligence news, subscribe to the AI Business newsletter.
