A Peek Inside Microsoft's Azure Cloud Ecosystem and Data Center Innovations

Steven Hill takes a virtual tour of Microsoft data centers. Here, he shares his takeaways from the presentation as well as his own digging into Azure data centers.

As a journalist and analyst for the past 20+ years (and as an unabashed rack monkey), I've always looked forward to data center tours as part of the bonus content you get for covering the IT industry. Pulling up floor tiles, gawking at the curious assemblage of infrastructure components, and letting your hair waft in the gentle breezes of a hot aisle bring home the physical realities of the data center that few get to experience. But a "virtual" experience … not so much.

The Microsoft Datacenter Tour: Virtual Experience event that I attended on Aug. 10, hosted by Alistair Speirs, director of Global Infrastructure for the Microsoft Azure Business Group, was a much more sterilized affair. Absent were the noise, smells, and blinkenlights (NICHT FÜR DER GEFINGERPOKEN UND MITTENGRABEN!) that are part and parcel of every data center, replaced by a 50-slide deck extolling the achievements and virtues of Microsoft's Azure cloud data center ecosystem.

Fair enough, it's the way of things today, and fortunately much of the nitty-gritty detail I feed upon is openly available for all to see at https://datacenters.microsoft.com/.

I know what it takes to produce a webinar for even simple IT topics, so putting together a one-hour program on the details of a data center ecosystem capable of supporting, operating, and expanding the Azure cloud environment means that a lot gets left out. That being said, I understand how they needed to break it down to cover the key segments while leaving the rest to be found at the website listed above by those of us who are driven to madness by the need to know ever more. At the same time, Microsoft has to protect proprietary technology that may or may not give it a technological or business advantage. It's a fine line to walk.

Microsoft Data Center Tour Highlights

In broad strokes, the Microsoft Datacenter Tour: Virtual Experience focused on key elements of the Azure ecosystem:

Cloud infrastructure: More than 200 data centers worldwide in 60+ data center regions with multiple availability zones.

Network connectivity: Based on a dedicated WAN leveraging 175,000 miles of fiber, spanning 190+ network PoPs, with regional network gateways offering 2.0ms latency for zones within a ~60-mile radius.

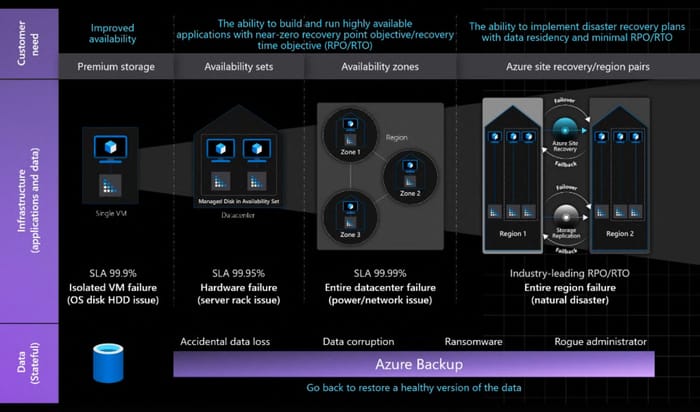

Availability zones: In regions that currently support availability zones, mission-critical workloads can be spread across three or more fully isolated data centers. In addition, ideal placement can be determined by application latency requirements, with a baseline of 0.4ms for zones less than 40 km apart, and 1.2ms for those under 120 km apart. At the moment, there are 47 regions supporting 103 availability zones globally, and that is expected to increase to 63 regions and 161 availability zones in the near future.
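As a back-of-the-envelope check on those latency baselines, here is a minimal Python sketch. It assumes the quoted figures are round-trip propagation times and uses the common rule of thumb that light covers roughly 200,000 km per second in optical fiber. Both are my assumptions, not something Microsoft stated, and the function name is purely illustrative.

```python
# Assumption (not from Microsoft): light in optical fiber travels at roughly
# 200,000 km/s, i.e. about 5 microseconds per kilometer one way.
FIBER_KM_PER_SEC = 200_000.0
KM_PER_MILE = 1.609344


def round_trip_ms(path_km: float) -> float:
    """Propagation-only round-trip time in milliseconds over path_km of fiber."""
    return 2.0 * path_km / FIBER_KM_PER_SEC * 1000.0


# Zone separations quoted for availability zones.
for km in (40, 120):
    print(f"{km} km apart: {round_trip_ms(km):.1f} ms round trip")

# Two sites on opposite edges of a ~60-mile radius are one diameter apart.
diameter_km = 2 * 60 * KM_PER_MILE
print(f"{diameter_km:.0f} km across: {round_trip_ms(diameter_km):.2f} ms round trip")
```

Under those assumptions, the 40 km and 120 km separations work out to the 0.4ms and 1.2ms baselines, and the full width of a ~60-mile radius comes in a little under the 2.0ms quoted for regional network gateways. Real-world latency would also include switching delays and fiber routes that aren't straight lines, so treat this as a floor rather than a prediction.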

Fully manageable edge to cloud coverage: Microsoft's Azure Arc platform provides a unified security, identity, and management platform that spans Azure Sphere and Azure IoT devices at the edge, on-prem options like Azure Stack Hub and Azure Private Edge Zones, all the way to Azure cloud Edge Zones and Regions.

Connectivity options: More than 190 PoPs, and options for ExpressRoute direct-to-WAN private links that support consistent latency, IPv6 workloads, and bandwidth up to 100GbE.

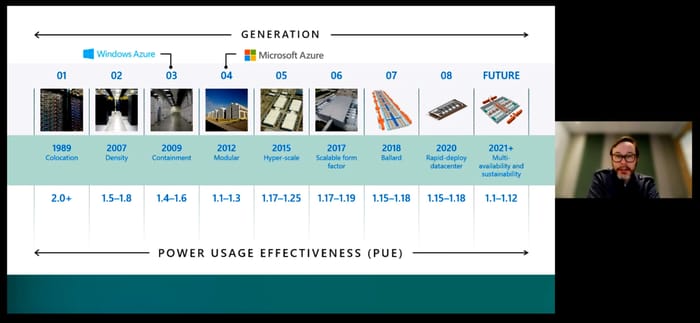

Tour host Alistair Speirs ran down key advancements in Microsoft data center design and power efficiency since 1989.

Granted, all these are important factors of a cloud ecosystem, but it wasn't until about halfway through the program that we finally got a glimpse of some data center infrastructure, and it was a pretty minuscule peek at best. Considering there are now over 200 Azure data centers, we can understand that the permutations are endless. Microsoft did mention that its typical Azure data center contains more than 1 million miles of fiber cable, that the internal network offers Azure Firewall and distributed denial-of-service (DDoS) protection, and that it supports SONiC (Software for Open Networking in the Cloud). But that was it. Nothing on servers, racking, storage, power, cooling … you know … a data center.

Fortunately, a little digging at https://datacenters.microsoft.com/ satisfied some of my curiosity beyond what was discussed in the tour. All of this is based on information found at the site above, so it's possible that there are a number of features I may have missed.

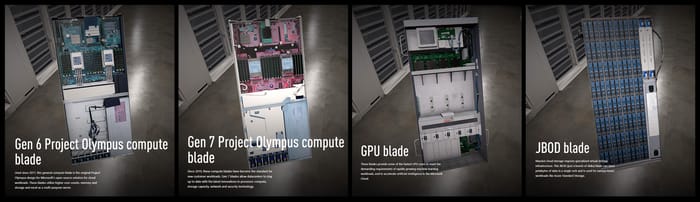

Server blades: Uniformly based on the OCP Olympus Gen 6 or Gen 7 design for Intel Xeon or AMD EPYC x64 CPUs, or Cavium ThunderX2 or Qualcomm Centriq Arm SoCs. With room for 16 DIMMs, these full-rack-depth server blades offer 50GbE FPGA-based NICs with offloading capabilities, plus room for up to eight PCIe NVMe drives, four SATA drives, and a three-phase, battery-backed power supply.

GPU blades: Supporting Nvidia, AMD, and other GPU options.

Storage blades: Capacity of up to 88-disk JBOD (Just a Bunch of Disks) arrays.

Racks: 44 or 48 RU, 19-inch open frame with integrated three-phase, blind-mate PDU and adapters for 30A/50A 208V, 32A 400V, and 30A 415V services.

Emergency power: Diesel generators.

Cooling: Hot-aisle containment with optimized adiabatic cooling that combines ambient temperature and efficient evaporative cooling as required. The machine room pictured in the virtual tour was based on a slab, with overhead cable routing and doors enclosing the hot aisle.
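To put the rack power services listed above in perspective, here is a rough Python sketch that converts each one to apparent power with the standard balanced three-phase formula, sqrt(3) × line-to-line voltage × current. Treating the listed voltages as line-to-line values is my assumption, and the helper name is hypothetical. None of this comes from Microsoft's materials.

```python
import math

# (label, volts, amps per phase) for the rack services listed in the article.
# Assumption: the voltages are nominal line-to-line values.
SERVICES = [
    ("30A 208V", 208, 30),
    ("50A 208V", 208, 50),
    ("32A 400V", 400, 32),
    ("30A 415V", 415, 30),
]


def three_phase_kva(volts_line_to_line: float, amps: float) -> float:
    """Apparent power of a balanced three-phase feed, in kVA."""
    return math.sqrt(3) * volts_line_to_line * amps / 1000.0


for label, volts, amps in SERVICES:
    print(f"{label}: {three_phase_kva(volts, amps):.1f} kVA")
```

These are upper bounds per feed. The sketch does not model breaker derating, power factor, or redundant feeds, so the usable power per rack would be lower.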

The current versions of the Open Compute Project-based blade designs in use at Azure data centers for general compute, high-performance GPU, and storage applications.

Pretty basic stuff really, but it's nice to know. The real surprise was the 50GbE link to all of the servers, not to mention that the NICs are based on field-programmable gate arrays (FPGAs). This is clearly a nod to the data processing unit (DPU) approach to networking we've seen from other vendors, and though Microsoft doesn't call it that, the idea is to offload the mundane networking overhead to the network adapter itself to save CPU cycles.

When asked what infrastructure usually required the most upgrading, our host Alistair said that, aside from software, it was the networking that needed the most tweaking, an ability that was enabled by the use of FPGAs instead of traditional networking ASICs.

Azure's Focus on Security and Sustainability

Back to the tour, Alistair highlighted some of the other resilience and security features of the Azure cloud. With the increasing focus on personal privacy worldwide, an international service such as Azure risks substantial exposure if local and regional privacy and security requirements aren't met.

The Microsoft Azure model for achieving four nines of data and application resilience in the cloud.

To combat this, Azure covers more than 100 compliance-oriented offerings and is developing an EU-specific data boundary to meet General Data Protection Regulation (GDPR) requirements. Perhaps more to the point, Microsoft has a vision for Azure confidential computing, putting data completely in the customer's control and ensuring Azure has no access to the data.

Moving on through the tour, I was pleased to see the efforts that Microsoft has been making to ensure its Azure cloud remains sustainable. This is a trend we've supported for years now, and it's great to see that Microsoft takes this very seriously, pledging to be zero waste and carbon-negative by 2030, and planning by 2050 to remove all of the carbon it has emitted since its founding.

Also near and dear to our heart is its plan to be water-positive by 2030. Large parts of the world are in crisis for water availability, and data centers can consume billions of gallons of fresh water per year. I'm glad to see that trend reversed by a leading, international cloud provider. In addition, Microsoft offers tools for cloud sustainability and a dashboard for monitoring emissions.

Of course, no cloud program would be complete without a reference to AI, and with good reason. AI is a super hot topic, and it also happens to be one of the most compute-intensive applications in the IT world today. So the demand for cloud-based AI resources will likely be huge for years to come.

Microsoft seems to be taking a reasonable approach to AI and appears to offer a flexible range of services and GPU-based resources that can be scaled to meet the best combination of price, performance, and results for practically any customer use case. It's this type of dynamic environment that the cloud promised over a decade ago, and it democratizes the availability of extremely high-end resources for customers who couldn't dream of purchasing the high-end infrastructure needed for AI capabilities.
