Cisco Unveils Modular, High-Capacity S-Series Storage for UCS

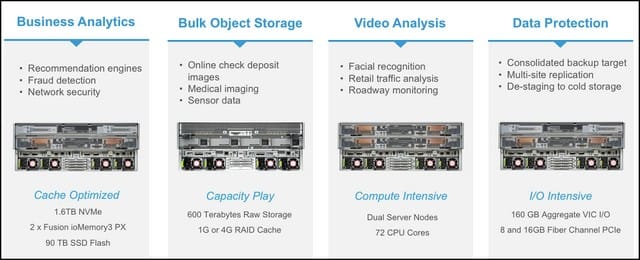

Arguing that public cloud storage stops being cost-effective beyond a certain length of time or volume of data, Cisco announced Tuesday that a new series of modular storage appliances will be available beginning next week. The series is geared toward high-bandwidth applications, such as the emerging field of live digital video analytics.

Called S-Series (but not to be confused with other classes of network product that the company has called “S-series” in the past), Cisco’s new design offers up to 600 TB of total storage capacity from 60 3.5-inch devices in 4U of space, and connectivity by way of Cisco’s Virtual Interface Cards, with up to 256 virtual adapters per node. Some of that space may be traded out for compute nodes.

“If you take all of the modules out of this system, it’s essentially an empty metal box,” admitted Todd Brannon, Cisco’s product manager for unified computing, in an interview with Data Center Knowledge. Think of it like a blade server, he said, except focusing on storage optimization as opposed to compute density.

“The modularity does two important things for customers: One is, we can dial the storage, compute, and caching up and down the I/O in different ways, right-sizing the infrastructure to the workload,” Brannon continued.

“Second, in a traditional server, it’s pretty much a fixed bill of goods. You buy it, and when you want to upgrade some component, you typically rip it out and replace it. Here, we can decouple all the refresh cycles of the server subsystems.”

For example, he said, once Cisco completes its company-wide transition from 40 Gbps to 100 Gbps connectivity per wire, all the data center operator needs to do is swap out the I/O modules, leaving storage and compute modules in place. Similarly, when Intel upgrades its CPU technology, existing compute modules may be swapped out for newer ones. The management system, now provided by way of Cisco’s ONE Enterprise Cloud Suite, will immediately adjust to the new components.

Brannon noted that the component parts of modern servers each have their own lifecycles, which are usually not synchronized with one another. The goal of modularity through disaggregation is to let operators manage each class of resource on its own cycle.

In an internal study, Cisco engineers modeled the cost of staging 420 TB of storage on Amazon’s S3 public cloud over a three-year period, and compared it with the cost of 600 TB (accounting for overhead) of on-premises S-Series storage over the same period, including labor and maintenance. While S3 incurs no up-front charges and S-Series requires a significant initial investment, Cisco believes enterprises will reach the break-even point in 13 months.
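The break-even arithmetic behind a comparison like this can be sketched in a few lines of Python. This is only an illustration of the reasoning, not Cisco’s model: the function name and every dollar figure below are hypothetical placeholders, chosen so that the toy example happens to land on the same 13-month mark. Cisco’s actual inputs, and Amazon’s actual pricing, are not given in the study summary.

```python
import math


def break_even_month(upfront_cost, on_prem_monthly, cloud_monthly):
    """Return the first whole month in which cumulative cloud spending
    catches up with cumulative on-premises spending.

    Returns None if the cloud is no more expensive per month, since the
    up-front investment is then never recovered.
    """
    monthly_saving = cloud_monthly - on_prem_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(upfront_cost / monthly_saving)


# HYPOTHETICAL inputs, for illustration only (not Cisco or AWS figures).
upfront_cost = 130_000.0      # one-time hardware purchase
on_prem_monthly = 2_000.0     # labor, maintenance, power
cloud_monthly = 12_000.0      # recurring storage and transfer charges

month = break_even_month(upfront_cost, on_prem_monthly, cloud_monthly)
print(f"Break-even month under these assumptions: {month}")
```

The takeaway is the shape of the calculation rather than the numbers: the larger the monthly gap between cloud and on-premises operating costs, the sooner the up-front investment pays for itself.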

“The public cloud is fantastic for its immediacy, and the scaling that you can do there,” said Brannon. “We can offer the same type of rapid scaling on-prem for much, much lower costs.

“Customers are starting to recognize that, for these large data sets, the cloud’s not going to be the knight in shining armor. They’re still going to need to build these platforms on-prem. With S-Series, we’re trying to make that a lot easier for them, and give them the TCO that they need without all the operational headaches.”

But Cisco recognizes that customers will use public cloud storage for any number of purposes, including launching an application with the intent of moving it back on-premises. To that end, the UCS platform underlying S-Series will include CliQr, giving customers a means of migrating workloads on- and off-premises.

S-Series will be made available as part of Cisco’s UCS 3260 platform, which can be configured to order or assembled around general use cases, beginning November 7.
