Confluent Raises $24M to Commercialize LinkedIn-Developed Apache Kafka

Kafka tackled two problems: integrating disparate data sources and preparing that data for real-time stream processing

July 8, 2015

Confluent closed a $24 million Series B to commercialize Apache Kafka, an open source real-time data streaming platform. Founded by the creators of Kafka, Confluent was spun out of LinkedIn, where the technology was first built.

Kafka makes all types of data available for stream processing, and it is battle-tested: at LinkedIn, it handled over 800 billion messages across real-time messaging, alerting, and other services.

The developers open sourced the project while at LinkedIn and donated it to the Apache Software Foundation. According to Confluent founder and CEO Jay Kreps, it first found its way to big Silicon Valley technology companies and eventually spread to virtually every industry.

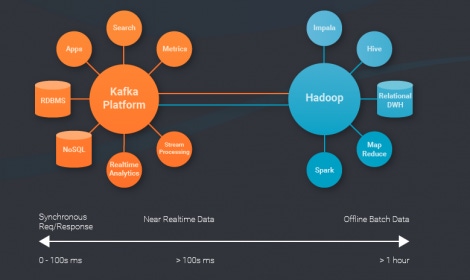

There's a lot of talk about the next-generation data-driven business, one capable of pulling in all sorts of data and acting on it instantly or near instantly. However, the "data fluid" business has a mess of integration problems to overcome. Confluent believes Kafka is the answer: it can act as a hub for the flood of disparate data.

“The problem we solve is the proliferation of systems and the resulting mess,” said Kreps. “Different types of data result in multiple point-to-point connected systems. We connect all these systems together. Anything you pump into Kafka is available for stream processing.”
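A rough back-of-envelope sketch of why a central hub helps, under two simplifying assumptions that are ours, not the article's: in the point-to-point case every system feeds every other system directly, and in the hub case each system has exactly one pipeline into Kafka and one out of it. The function names are hypothetical.

```python
def point_to_point_links(n_systems: int) -> int:
    """Directed pipelines needed if every system feeds every other system directly."""
    return n_systems * (n_systems - 1)


def hub_links(n_systems: int) -> int:
    """Pipelines needed if each system writes to and reads from one central hub."""
    return 2 * n_systems


# Point-to-point grows quadratically; the hub grows linearly.
for n in (4, 10, 50):
    print(f"{n} systems: point-to-point={point_to_point_links(n)}, hub={hub_links(n)}")
```

With 10 systems, the worst case is 90 direct pipelines versus 20 through a hub. Real deployments rarely connect every pair, so the gap is usually smaller, but the quadratic growth is the "mess" Kreps describes.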

According to Kreps, it can be difficult to integrate your data in a single place for analysis, let alone make it available for real-time processing. Kafka was initially created to connect disparate systems; in the early days, real-time processing was only a hypothesis.

"We had several different systems, log aggregation, old-fashioned messaging, etc. Each sucked in a different way: one would have no scalability or have weak data delivery guarantees. A lot of it was batch jobs." Kafka could not only handle disparate databases but also process real-time data at scale.

LinkedIn's needs were not unique to big social networks; they mirror what is happening across the industry. Kreps points to two trends that have caused Kafka to take off.

"Companies are moving towards more diverse infrastructure setups," he said. "It's no longer one size fits all, and they're using data systems of different types, shuffling data back and forth."

"At the same time, there's an embracing of 'activity data'," said Kreps. "That's data that shows what's happening in my business right now: sensor data, device data coming from mobile, or the Industrial Internet of Things. Web companies have this data, but it's become increasingly universal."

LinkedIn, Netflix, Twitter, Uber, PayPal, Apple, and Salesforce all use Kafka, but its appeal isn't limited to big web-scale companies. Organizations ranging from large enterprises to startups building real-time data features use it extensively.

Confluent adds value by turning the open source project into a platform for diverse data. Kreps said Kafka is ready to go and is already used for some of the largest mission-critical data workloads, but the company will continue to build out features around it.

The round was led by Index Ventures, with participation from prior investor Benchmark Ventures, which led the company's $7 million Series A.

The company will use the funding to build connectors into more databases and systems, harden security, and deepen its management and monitoring tools, in addition to offering commercial support.

There's a big Internet of Things play, as well as an opportunity to act as the stream data hub for enterprises moving to microservices. In both the consumer and business worlds, the trend is toward diverse streams of data. Kafka also acts as a pipeline into Hadoop and works well with offline systems. Kreps compares it to the data warehouse of old, retuned for modern data needs.
