Arm’s New Cortex-R82 Chip Extends the Edge, Bringing Compute to Data

The microcontroller can run a full Linux OS and can offload workloads, even deep learning ones, from the server’s domain.

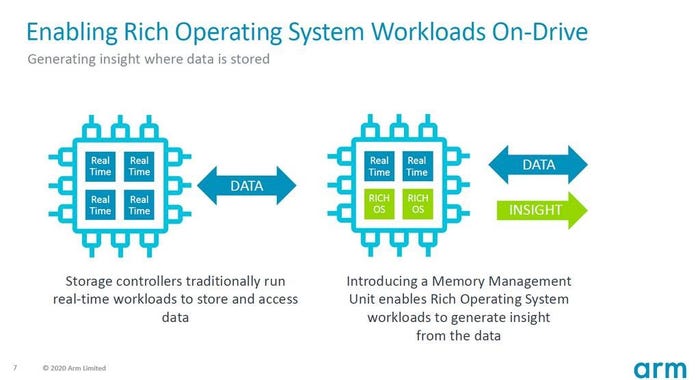

The argument heard most often in recent months for building smaller data centers toward the network edge has been this: Latency can be dramatically reduced if workloads are processed adjacent to where data is initially collected, especially from devices in the field. That makes sense if you assume that workloads are stationary entities that “live” in data centers. In an announcement last week, processor architecture firm Arm Holdings turned that argument on its head, introducing a new Cortex-R82 design that aims to relocate data processing workloads from the servers that normally host them onto the drives that store the data being processed.

“When you have an interface with different types of storage with different kinds of data, it makes a lot of sense if you can move the workload so that it operates directly on the data that’s there,” Neil Werdmuller, Arm’s director of storage solutions, told Data Center Knowledge.

Suppose a surveillance camera system was being set up with machine learning routines to isolate and identify license plates, he said, offering an example. A typical data center network would have the camera data streamed to a remote server, incurring a potentially enormous connectivity cost. An edge computing solution would involve stationing smaller servers nearer the raw data, processing it there, then streaming only the refined results of the analysis to the main data center or nearby branch.

Werdmuller also proposed a third option: Put a smarter processor inside the storage device. Some of Arm’s usual storage partners have already tried this but with the firm’s Cortex-A designs, processors that were meant for use in servers and designed for higher power envelopes.

A processor based on Cortex-R82, on the other hand, belongs to the class Arm originally intended for microcontrollers, yet it can run a full-scale Linux OS, perhaps on a few reserved cores. It uses 64-bit RISC logic (previous Cortex-R iterations were 32-bit) and a power envelope Werdmuller promised would stay between 2 and 4 watts. Many applications might not even need mini- or micro-data centers on premises; they could run on devices small enough to fit under someone’s desk. “That is really just a mini-server,” he said.

Database architecture, even in this modern era, expects smart servers and dumb storage. The rise of NVMe over Fabrics using TCP/IP links speaks to the urgent need to leverage the speed of SSD storage to make data available to processors as though that storage were RAM. Running full-scale Linux directly on the drive, Werdmuller argued, condenses the physical structure of the network much further. Previously, the control and management functions would be on the server, monitoring the drives remotely. Now they can be located on the drive itself. As a result, the separation between those components becomes artificial.

If you’re a data systems engineer, you’ll understand this. Up to now, a key difference between the Cortex-A and Cortex-R classes was that the latter lacked a memory management unit (MMU). Up to now, few thought it needed one. Cortex-R82 adds one. So any customizations that an Arm partner (a producer of storage devices) may want to make to the functionality of its devices may now be executed in software.

“That reduces the risk of moving into something like computational storage,” said Werdmuller. “It allows you to create many products from a single controller.”

Up until last week’s announcement it was the lack of an MMU that distinguished a Cortex-R class from the general-purpose Cortex-A. In fact, Arm’s marketing went so far as to explain the MMU’s absence as a virtue. “In a system with an MMU,” reads a page produced for the Arm development community, “there are risks that might affect worst-case execution time.” One example this page noted was the need for a “translation table walk,” an iterative process of converting virtual memory addresses into physical ones, which is a necessary step when a Cortex-A handles a processor interrupt. For real-time operations such as storage device I/O, it’s a walk like this that could present unwanted hiccups and determinism issues.

“The Cortex-R family doesn’t have virtual memory, so there is never any translation table walking to worry about,” the Arm community page explained. For situations where critical memory contents may not have been cached, however, older designs dating back to Cortex-R5 and continuing through Cortex-R52 used tightly-coupled memory (TCM) “for highly deterministic or low-latency applications that may not respond well to caching.”
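As a rough illustration of why a translation table walk can add variable latency, the sketch below models a two-level walk in Python. The table layout, the field widths, and the names `walk` and `translate` are assumptions made for illustration only; they do not reproduce Arm’s actual translation format.

```python
# Toy model of a two-level translation table walk.
# Field widths are illustrative assumptions, not Arm's real format.
PAGE_BITS = 12    # assume 4 KiB pages
INDEX_BITS = 9    # assume 512 entries per table level
INDEX_MASK = (1 << INDEX_BITS) - 1
OFFSET_MASK = (1 << PAGE_BITS) - 1


def walk(root, vaddr):
    """Return (physical address, number of table lookups) for vaddr."""
    l1_index = (vaddr >> (PAGE_BITS + INDEX_BITS)) & INDEX_MASK
    l2_index = (vaddr >> PAGE_BITS) & INDEX_MASK
    l2_table = root.get(l1_index)          # first table lookup
    if l2_table is None:
        raise LookupError("no level-1 entry")
    frame = l2_table.get(l2_index)         # second table lookup
    if frame is None:
        raise LookupError("no level-2 entry")
    return (frame << PAGE_BITS) | (vaddr & OFFSET_MASK), 2


def translate(tlb, root, vaddr):
    """Use a cached translation if present; otherwise walk the tables."""
    page = vaddr >> PAGE_BITS
    if page in tlb:
        return (tlb[page] << PAGE_BITS) | (vaddr & OFFSET_MASK), 0
    paddr, lookups = walk(root, vaddr)
    tlb[page] = paddr >> PAGE_BITS         # cache the result for next time
    return paddr, lookups
```

In this model a cached translation costs no table lookups while an uncached one costs two. That gap between the best and worst case is the kind of variability the Arm page describes as a risk to worst-case execution time.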

Werdmuller’s explanations implied that Cortex-R82 may have overcome both the latency and power costs associated with tasks such as self-managing storage, which big data architectures previously handled with sophisticated, external cluster management frameworks like Nutanix’s Curator.

“Some of the early concepts were that all the drives would be self-managing,” he reminded us, “and they would do their own erasure coding and sharding across multiple drives, and you could just add more drives, and the system would build up. Now, at that time the capability wasn’t there, because running Linux on a drive really wasn’t practical, in terms of the power envelope. But I think now, with Cortex-R82, it does enable it. You can do your hard real-time and run Linux on there.”
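To give a sense of what sharding and erasure coding across drives involve, here is a minimal Python sketch that uses single XOR parity. It is not the scheme any particular vendor uses, and the function names (`split`, `parity`, `rebuild`) are hypothetical. Production systems typically rely on stronger codes such as Reed-Solomon.

```python
from functools import reduce


def xor_bytes(a, b):
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def split(data, n):
    """Shard data into n equal-size pieces, zero-padding the tail."""
    size = -(-len(data) // n)              # ceiling division
    padded = data.ljust(size * n, b"\0")
    return [padded[i * size:(i + 1) * size] for i in range(n)]


def parity(shards):
    """Compute one parity block as the XOR of all shards."""
    return reduce(xor_bytes, shards)


def rebuild(shards, parity_block, lost):
    """Recover the shard at index `lost` from the survivors and parity."""
    survivors = [s for i, s in enumerate(shards) if i != lost]
    return reduce(xor_bytes, survivors, parity_block)
```

If each shard and the parity block sat on a different drive, any single drive could fail and its contents could be recomputed from the rest. Single parity tolerates only one loss; it is shown here because it is the simplest case.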

In the pandemic era, Arm’s release of a processor architecture is less indicative than it used to be of how soon customers will see parts delivered using processors and controllers based on it. No delivery timeline was available for Cortex-R82 at press time. However, Werdmuller did confirm that the design and implementation of its reference architecture were conducted with a number of Arm’s typical partners. An Arm spokesperson offered this much: “We do not have any public partners we can name, but can confirm that Cortex-R8 has been adopted by a number of leading storage vendors for their enterprise-grade products,” referring to a previous generation.
