Google: From 112 Servers to a $5B-Plus Quarterly Data Center Bill

Hölzle reveals company’s largest server order ever: 1,680 servers in 1999

July 23, 2014

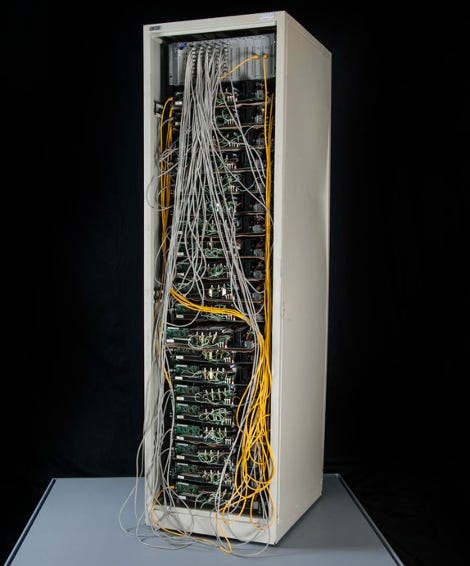

It was only 15 years ago that Google was running on slightly more than 100 servers, stacked in racks Sergey Brin and Larry Page put together themselves using cheap parts – including insulating corkboard pads – to cut down the cost of their search engine infrastructure.

That do-it-yourself ethos has stayed with the company to this day, although it is now applied at a much bigger scale.

Google data centers cost the company more than $5 billion in the second quarter of 2014, according to its most recent quarterly earnings, reported earlier this month. The company spent $2.65 billion on data center construction, real estate purchases and production equipment, and somewhat south of $2.82 billion on running its massive infrastructure.

The $2.82 billion figure is the size of the “other cost of revenue” bucket, in which the company includes its data center operational expenses. The bucket also covers other things, such as hardware inventory costs and amortization of assets Google inherits with acquisitions. In the second quarter, this bucket represented 18 percent of the company’s revenue. It was 17 percent of revenue in the second quarter of last year.
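As a rough check on the figures above, the short Python sketch below adds the two reported amounts and backs out the quarterly revenue implied by the 18 percent share. The variable names are illustrative, the $2.82 billion is treated as an upper bound on operating costs (the bucket includes other items), and the implied revenue is only approximate because the 18 percent figure is rounded.

```python
# All dollar figures in billions, taken from the article text.
capex = 2.65                   # construction, real estate, production equipment
other_cost_of_revenue = 2.82   # upper bound on data center operating costs
share_of_revenue = 0.18        # rounded percentage quoted in the article

# Upper bound on total quarterly data center spending.
total_upper_bound = round(capex + other_cost_of_revenue, 2)

# Revenue implied by the rounded share; approximate by construction.
implied_revenue = other_cost_of_revenue / share_of_revenue

print(f"Total (upper bound): ${total_upper_bound:.2f}B")
print(f"Implied quarterly revenue: about ${implied_revenue:.1f}B")
```

The total comes out above $5 billion even if the operating portion is somewhat smaller than the full bucket.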

Google’s largest server order ever

While the amount of money Google spends on infrastructure is astronomically higher than the amount it spent 15 years ago, the company makes much more money per server today than it did back then.

“In retrospect, the design of the [“corkboard”] racks wasn’t optimized for reliability and serviceability, but given that we only had two weeks to design them, and not much money to spend, things worked out fine,” Urs Hölzle, Google’s vice president for technical infrastructure, wrote in a Google Plus post today.

One of Google's early "corkboard" racks is now on display at the National Museum of American History in Washington, D.C.

In the post, Hölzle reminisced about the time Google placed its largest server order ever: 1,680 servers. This was in 1999, when the search engine was running on 112 machines.

Google agreed to pay about $110,000 for every 80 servers and offered the vendor a $2,000 bonus for each of the 80-node units delivered after the first 10 but before the deadline of August 20th. The order was dated July 23, giving the vendor less than one month to put together 800 computers (with racks and shared cooling and power) to Google’s specs before it could start winning some bonus cash.

Hölzle included a copy of the order for King Star Computer in Santa Clara, California. The order describes 21 cabinets, each containing 60 fans and two power supplies to power those fans.
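The order terms can be worked through in a few lines of Python. This is only a sketch based on the figures quoted in the article: the names are illustrative, the price is the approximate $110,000 per 80 servers, and the maximum bonus assumes every unit after the first 10 arrives before the deadline.

```python
# Figures quoted in the article; names are illustrative only.
TOTAL_SERVERS = 1680
SERVERS_PER_UNIT = 80
PRICE_PER_UNIT = 110_000    # approximate price per 80-server unit, in dollars
BONUS_PER_UNIT = 2_000      # per unit delivered after the first 10, before the deadline
UNITS_BEFORE_BONUS = 10

units = TOTAL_SERVERS // SERVERS_PER_UNIT                       # 80-node units ordered
base_cost = units * PRICE_PER_UNIT                              # cost before any bonus
servers_before_bonus = UNITS_BEFORE_BONUS * SERVERS_PER_UNIT    # machines due before bonuses start
max_bonus = (units - UNITS_BEFORE_BONUS) * BONUS_PER_UNIT       # assumes all remaining units are early
cost_per_server = PRICE_PER_UNIT / SERVERS_PER_UNIT

print(f"Units ordered: {units}")
print(f"Base cost: ${base_cost:,}")
print(f"Servers due before bonuses start: {servers_before_bonus}")
print(f"Maximum possible bonus: ${max_bonus:,}")
print(f"Approximate cost per server: ${cost_per_server:,.0f}")
```

The 21 units line up with the 21 cabinets in the order, and the 800 machines match the number the vendor had to deliver before any bonus applied.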

There would be four servers per shelf, and those four servers would share:

400-watt ball bearing power supply

Power supply connector connecting the individual computers to the shared power supply

Two mounting brackets

One plastic board for hard disks

Power cable

Each server would consist of:

Supermicro motherboard

256 megabytes of memory

Intel Pentium II 400 CPU with Intel fan

Two IBM Deskstar 22-gigabyte hard disks

Intel 10/100 network card

Reset switch

Hard disk LED

Two IDE cables connecting the motherboard to the hard disks

7-foot Cat. 5 Ethernet cable

Google’s founders figured out in the company’s early days that the best way to scale cost-effectively would be to specify a simple server design themselves instead of buying off-the-shelf, all-included gear. The company designs its own hardware to this day. Other Internet giants that operate data centers at Google’s scale have followed suit.
