SAN JOSE, Calif. - Google and Facebook may grab most of the data center headlines. But there's been plenty of innovation going on in Redmond. As it joins the Open Compute Project, Microsoft can now show the world the custom server and storage designs that power its global armada of more than 1 million servers.

This isn’t the first glimpse of Microsoft’s infrastructure. Data Center Knowledge has brought its readers tours of Microsoft data centers in Chicago, Dublin and Quincy, Washington, as well as the first look at its cloud server designs back in 2011.

But with its commitment to OCP, Microsoft will be contributing hardware specifications, design collateral (CAD and Gerber files), and system management source code for its cloud server designs. These specifications apply to the server fleet being deployed for Microsoft’s largest global cloud services, including Windows Azure, Bing, and Office 365.

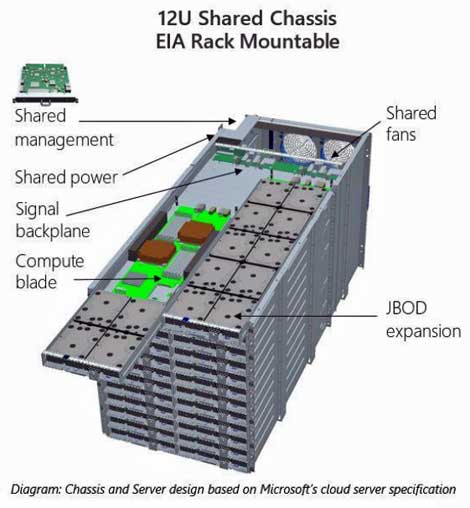

Microsoft’s cloud server architecture is based on a modular high-density chassis approach that enables efficient sharing of resources across multiple server nodes. A single 12U chassis can accommodate up to 24 server blades (either compute or storage), where two blades are populated in each 1U slot. Each compute blade features two 10-core Intel Xeon E5-2400 processors.

Up to 96 Servers Per Rack

A rack can hold three or four chassis depending on the rack height, which can be as tall as 52U (compared to an industry standard of 42U). That allows Microsoft to pack as many as 96 servers into a single rack.
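The density figures above follow from simple multiplication. The short Python sketch below reproduces them from the numbers quoted in the article; the constant and function names are illustrative, and the 12-slot count is inferred from 24 blades at two per 1U slot rather than stated directly.

```python
# Figures come from the article; all names here are illustrative only.
CHASSIS_HEIGHT_U = 12    # each chassis occupies 12U of rack space
BLADES_PER_SLOT = 2      # two half-width blades sit side by side in a 1U slot
SLOTS_PER_CHASSIS = 12   # inferred: 24 blades per chassis / 2 blades per slot


def servers_per_rack(chassis_count: int) -> int:
    """Maximum number of blades in a rack holding `chassis_count` chassis."""
    return chassis_count * SLOTS_PER_CHASSIS * BLADES_PER_SLOT


def rack_units_used(chassis_count: int) -> int:
    """Rack units consumed by the chassis alone (no switches or other gear)."""
    return chassis_count * CHASSIS_HEIGHT_U


if __name__ == "__main__":
    for chassis_count in (3, 4):
        print(
            f"{chassis_count} chassis: "
            f"{servers_per_rack(chassis_count)} servers, "
            f"{rack_units_used(chassis_count)}U used"
        )
```

Four chassis occupy 48U, which fits the 52U rack but not a standard 42U rack, so the 96-server figure applies only to the taller configuration.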

Microsoft’s use of a blade chassis and half-width server and storage blades takes a different design approach from the current OCP offerings. Like Microsoft’s chassis, the OCP Open Rack provides centralized power supplies and fans, but it has a 21-inch wide equipment area, as opposed to the standard 19-inch wide trays seen in most racks. Facebook and OCP use a three-wide server design, with processors and memory housed inside a 2U sled. Open Compute storage houses JBODs (Just a Bunch of Disks) on 1U sleds, while Microsoft packs its storage into its half-width blades.

The addition of Microsoft’s designs offers OCP hardware vendors the opportunity to design servers for Windows Azure clouds being run in enterprise data centers, as well as to compete for contracts with Microsoft.

Here’s a closer look at additional details Microsoft has supplied about the servers and storage it is contributing to OCP.

Chassis-based shared design for cost and power efficiency

- Rack mountable 12U Chassis leverages existing industry standards

- Modular design for simplified solution assembly: mountable sidewalls, 1U trays, high efficiency commodity power supplies, large fans for efficient air movement, management card

- Up to 24 commodity servers per chassis (two servers side-by-side), option for JBOD storage expansion

- Optimized for mass contract manufacturing

- Estimated to save 10,000 tons of metal per one million servers manufactured

Blind-mated signal connectivity for servers

- Decoupled architecture for server node and chassis enabling simplified installation and repair

- Cable-free design results in significantly fewer operator errors during servicing

- Up to 50% improvement in deployment and service times

Network and storage cabling via backplane architecture

- Passive PCB backplane for simplicity and signal integrity risk reduction

- Architectural flexibility for multiple network types such as 10GbE/40GbE, copper/optical

- One-time cable install during chassis assembly at factory

- No cable touch required during production operations and on-site support

- Expected to save 1,100 miles of cable for a deployment of one million servers
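To put the per-million-server savings estimates in perspective, they can be converted to per-server amounts. The sketch below uses only the totals quoted in these lists; the function names are illustrative, and the metal conversion assumes US short tons (2,000 lb), which the source does not specify.

```python
# Totals are Microsoft's estimates as quoted in the article.
# Names are illustrative; the short-ton conversion is an assumption.
FEET_PER_MILE = 5280
POUNDS_PER_SHORT_TON = 2000  # assumption: the article does not say which ton


def cable_feet_saved_per_server(miles_saved: float, servers: int) -> float:
    """Average cable length saved per server, in feet."""
    return miles_saved * FEET_PER_MILE / servers


def metal_pounds_saved_per_server(tons_saved: float, servers: int) -> float:
    """Average metal saved per server, in pounds (short tons assumed)."""
    return tons_saved * POUNDS_PER_SHORT_TON / servers


if __name__ == "__main__":
    servers = 1_000_000
    cable = cable_feet_saved_per_server(1_100, servers)
    metal = metal_pounds_saved_per_server(10_000, servers)
    print(f"Cable saved per server: {cable:.2f} ft")
    print(f"Metal saved per server: {metal:.1f} lb")
```

Under these assumptions the estimates work out to a little under six feet of cable and about 20 pounds of metal per server.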

Secure and scalable systems management

- x86 SoC-based management card per chassis

- Multiple layers of security for hardware operations: TPM secure boot, SSL transport for commands, role-based authentication via Active Directory domain

- REST API and CLI interfaces for scalable systems management