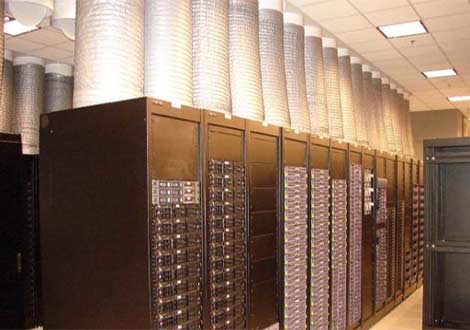

A look at the containment system in the Oracle data center in Austin, Texas.

Attend any data center industry event these days, and you're likely to hear multiple presentations on the merits of variable-speed airflow and of isolating hot and cold air in the data center. But things were different back in 2004, when the data center team at Oracle Corp. confronted rising heat loads as the company expanded its Austin, Texas, data center.

"There was no discussion of variable airflow, there was no discussion of containment," said Mukesh Khattar, Director of Energy at Oracle. "At the time it was hot aisle/cold aisle."

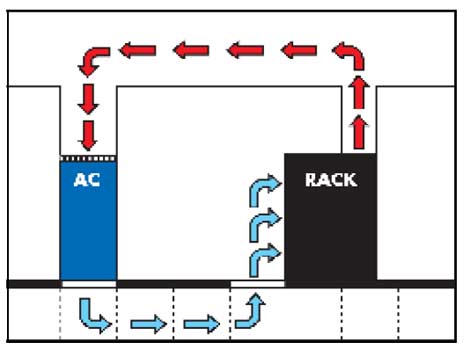

The Oracle team improvised a containment system that vented hot waste air from the top of each server rack into an enclosed ceiling plenum and directly back to the computer room air conditioner (CRAC) units. With the air delivery and return paths isolated, Oracle could adjust the airflow speed, allowing it to reduce the power required to cool the facility.

Recognized by ASHRAE

On Saturday, the Oracle team's work on the Austin facility was recognized with an award from ASHRAE, the industry group for heating and cooling professionals.

"This project debunked a lot of common myths associated with variable airflow in data centers and clearly demonstrated its cost effectiveness," Khattar said. "It has become well accepted. This has led to a swift market transformation."

When Oracle began expanding the Austin facility, it had 50,000 square feet of traditional raised-floor data center space, with each rack drawing about 4 kilowatts of power. The 32,000-square-foot expansion would need to support power loads averaging 8 kilowatts per rack.

Airflow Reduction as a Key Goal

Oracle believed that its systems could be effectively cooled with about half the volume of air it was using in Austin, but only if it could gain greater control over the airflow. Containing the air would make it possible to use a lower rate of airflow from CRAC units, which would use less power.
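Why slower fans can save so much power can be illustrated with the idealized fan affinity laws. These are an assumption added here for illustration, not figures reported by Oracle: for a given fan, airflow scales roughly linearly with fan speed, while fan power scales roughly with the cube of speed. Real CRAC fans, motors and variable frequency drives fall short of this ideal, so the sketch below is only a rough guide. The function name `fan_power_fraction` is hypothetical.

```python
# Idealized fan affinity laws (an illustrative assumption, not data from Oracle):
# airflow scales linearly with fan speed, and fan power scales with speed cubed.
# Combining the two, power fraction ~= (airflow fraction) ** 3.

def fan_power_fraction(airflow_fraction: float) -> float:
    """Return idealized fan power as a fraction of full-speed power."""
    if not 0.0 < airflow_fraction <= 1.0:
        raise ValueError("airflow_fraction must be in (0, 1]")
    return airflow_fraction ** 3


if __name__ == "__main__":
    # Compare full airflow, three-quarter airflow and half airflow.
    for frac in (1.0, 0.75, 0.5):
        print(f"{frac:.0%} airflow -> {fan_power_fraction(frac):.1%} of full fan power")
```

Under these idealized assumptions, delivering half the air would take roughly one-eighth of the fan power, which helps explain why controlling and reducing airflow was such an attractive target.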

"I wanted to see how we could slow down the fans," said Khattar. "The problem was that the boundaries between the hot and cold aisles were no longer working. The servers near the top of the rack were getting hotter air (from recirculation). The hot air was supposed to go back to the CRAC, but it was easy for the hot air to go back to the inlet. You needed to put a physical barrier between the hot air and cold air.

"We looked into hot aisle and cold aisle containment, and individual rack containment," he continued. "We had an internal debate, and people weren't ready for aisle containment. We decided on rack containment as a way to take the hot air from the rack into the plenum. We created a separate path for the cold air and the hot air, with no mixing."

An Enclosed Cooling Circuit

At the time, there were no commercial options for the type of containment system Oracle envisioned. "Putting in a total containment system took a lot of guts," said Mark Germagian, who consulted on the Oracle project and now runs cooling specialist OpenGate Data Systems. "It was totally new to do an entire cooling circuit using a common plenum. This was all custom engineered and custom designed."

And it wasn't an easy sell. "It took a lot of time to convince people," Khattar recalled. "Our IT department, our consulting engineers, our suppliers all had questions. This was not an easy process, and there was a substantial premium to do this."

Energy Savings: $1.2 Million a Year

But the design allowed Oracle to raise the supply air temperature for its servers from 58 to 68 degrees F, and to boost its chilled water temperature from 45 to 50 degrees F. The additional energy-saving features (CRACs with variable frequency drives, or VFDs, along with rack containment, the plenum and supplemental fans) cost Oracle about $500,000. The resulting energy savings came to 16 million kilowatt-hours a year, or about $1.23 million annually, meaning the project paid for itself in less than five months.
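The payback claim can be checked with simple arithmetic. The sketch below uses the article's reported figures ($500,000 premium, $1.23 million and 16 million kWh saved per year); the helper names are hypothetical, and the implied electricity rate is a derived value, not one Oracle reported. It computes an undiscounted payback, ignoring financing costs and any change in energy prices.

```python
# Reported figures from the article.
PREMIUM_USD = 500_000             # cost of the added energy-saving features
ANNUAL_SAVINGS_USD = 1_230_000    # reported annual savings in dollars
ANNUAL_SAVINGS_KWH = 16_000_000   # reported annual savings in kilowatt-hours


def simple_payback_months(capital_cost: float, annual_savings: float) -> float:
    """Undiscounted payback period in months (hypothetical helper)."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return capital_cost / annual_savings * 12


def implied_rate_per_kwh(savings_usd: float, savings_kwh: float) -> float:
    """Average dollars saved per kWh avoided; a derived, not reported, figure."""
    return savings_usd / savings_kwh


if __name__ == "__main__":
    months = simple_payback_months(PREMIUM_USD, ANNUAL_SAVINGS_USD)
    rate = implied_rate_per_kwh(ANNUAL_SAVINGS_USD, ANNUAL_SAVINGS_KWH)
    print(f"Simple payback: {months:.1f} months")
    print(f"Implied electricity rate: ${rate:.3f}/kWh")
```

With these inputs the payback works out to roughly 4.9 months, consistent with "less than five months," and the implied average rate is about 7.7 cents per kilowatt-hour.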

"We didn't want to just do things the way we had been doing," said Khattar. "We were aware of the issues, and needed to address them. We knew if we just put in the VFD, there would have been issues with temperature. The containment approach allowed us to examine variable airflow. In order for me to put in the VFD, I had to put in containment."

In an industry in which companies are often reluctant to share innovation, the Oracle team decided to give public presentations about its design at the AFCOM Data Center World conference and ASHRAE events. "We learned a tremendous amount in the process," said Khattar. "We decided we needed to share."

A design of the airflow in the expansion of the Oracle Austin data center.

The Austin project team honored by ASHRAE included Oracle employees Mitch Martin, Keith Ward, Steve Metcalf, David Imel and Mark Redmond, along with Khattar and Germagian.