You might never have thought about it this way, but an ordinary PC is already disaggregated. Its operating system is not embedded or otherwise etched into its firmware. Likewise, in a disaggregated network, the switches and routers are more like servers, with ordinary “merchant hardware” as the substrate and a replaceable, often open-source network operating system (NOS) as their software, most likely built on a Linux core.

On the surface, disaggregation seems like a sensible architectural trend for the network. “Decoupling” the software, as a developer would put it, lets its vendor (or, in the open-source case, its contributors) adapt and improve it far more often than its proprietary, EEPROM-based counterparts would ever get re-flashed.

Cumulus Networks coined the phrase “network disaggregation” in 2013, an eon ago in this industry. Its entry into the market was the inflection point for a brewing perfect storm: effectively, an avalanche set off by a volcano. But is the enterprise data center network changing as a result, or is the impact of disaggregation limited to organizations that already have the skills to make sense of it?

Whose Network Is It Anyway?

Here’s the basic proposition: If the design of a network is implemented mainly in software, and hardware mainly facilitates that software, then enterprises should be able to grow networks organically, adapting them to their own workloads and scaling them along with their own business needs. Disaggregation brings open-source development into network engineering, making the design of enterprise networks, for the very first time, a topic of general interest outside the locked doors of vendors like Cisco, Arista, and Aruba.

Such a discussion may be vitally necessary, especially now that enterprises’ networks extend well beyond their own data centers.

“Disaggregation is so that you can run [workloads] on metal that you own and on the cloud that you don’t,” says Jeff Baher, senior director for product and technical marketing at Dell EMC. “In the old days, you only got software that came with the switch, and there was never really a cloud to consider.”

“The way we think about it is, we’re reversing things to their natural order,” explains Ed Doe, chief business officer at programmable switch maker Barefoot Networks. In the old order of things, the data plane’s functionality was built directly into the switch, and that determined whether the switch could forward packets using a protocol such as MPLS or VXLAN, or whether it supported IPv6.

Image: Barefoot Networks

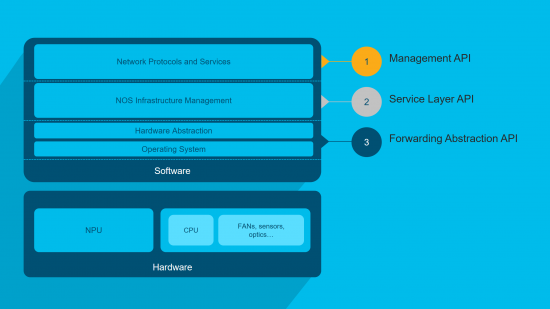

In the new order (illustrated above), the NOS instructs the data plane which protocols may be utilized, not vice versa. Barefoot sweetens its value proposition by offering a complete development environment based around the independently maintained language P4, a domain-specific language that specifies how a switch processes and forwards packets.

“You can incrementally add new capabilities to the data plane,” Doe says, “and have that be rolled out in tandem with the new updates to the operating system or the control plane. This allows people to evolve all this infrastructure. In essence, it’s not too dissimilar from having a cluster that is focused on storage or on machine learning. You can have these network capabilities only exist in parts of the network as well.”
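To make the reversal Doe describes a little more concrete, here is a toy Python model. It is not P4 and not Barefoot’s toolchain; every class and method name is hypothetical. It assumes a data plane made of empty exact-match tables that forward nothing until the control plane (standing in for the NOS) enables a protocol and installs entries. Real P4 programs define parsers and match-action tables that are compiled onto switch silicon rather than interpreted like this.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

# Toy model only: names are hypothetical; this is not P4 or any vendor API.
# The "data plane" handles no protocol until the control plane (the NOS)
# enables it, mirroring the idea that software, not fixed silicon, decides
# which protocols the switch will forward.

IPV4 = 0x0800  # EtherType for IPv4
IPV6 = 0x86DD  # EtherType for IPv6


@dataclass
class Packet:
    ethertype: int  # which protocol the frame carries
    dst: str        # destination address, kept as a plain string for simplicity


@dataclass
class ToyDataPlane:
    # One exact-match table per enabled protocol: destination -> egress port.
    tables: Dict[int, Dict[str, int]] = field(default_factory=dict)

    def enable_protocol(self, ethertype: int) -> None:
        """Control plane tells the data plane to start handling this protocol."""
        self.tables.setdefault(ethertype, {})

    def add_entry(self, ethertype: int, dst: str, port: int) -> None:
        """Install a forwarding entry; refuses protocols that were never enabled."""
        if ethertype not in self.tables:
            raise ValueError(f"protocol 0x{ethertype:04x} is not enabled")
        self.tables[ethertype][dst] = port

    def forward(self, pkt: Packet) -> Optional[int]:
        """Return the egress port, or None to drop (unknown protocol or no match)."""
        table = self.tables.get(pkt.ethertype)
        if table is None:
            return None
        return table.get(pkt.dst)


if __name__ == "__main__":
    dp = ToyDataPlane()
    dp.enable_protocol(IPV4)
    dp.add_entry(IPV4, "10.0.0.5", 3)
    print(dp.forward(Packet(IPV4, "10.0.0.5")))     # 3
    print(dp.forward(Packet(IPV6, "2001:db8::5")))  # None: IPv6 not enabled yet

    # A later software update adds a capability without new hardware.
    dp.enable_protocol(IPV6)
    dp.add_entry(IPV6, "2001:db8::5", 7)
    print(dp.forward(Packet(IPV6, "2001:db8::5")))  # 7
```

The point of the sketch is the order of operations: capabilities arrive as software changes pushed from above, so IPv6 support can be switched on later, and only where it is wanted, without swapping the box.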

Who’s Actually Doing This?

Doe paints a picture of organizations taking command of their infrastructure the way they are taking charge of their applications, as evidenced by the rapid ascent of Kubernetes. He envisions data centers pushing updates into their networks at a CI/CD-like pace, and foresees networks being simulated and modeled in safe staging environments, where thousands of containers each stand in for a node of the production network. He cites CrystalNet, a project in which Microsoft constructed a simulated copy of its entire Azure network using SONiC, its own open-source switch operating system.

Maybe such projects are actually happening outside of the lab. Maybe.

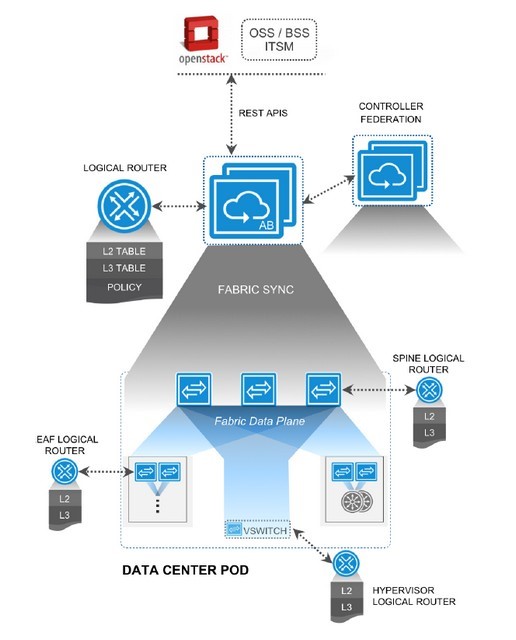

“We’re seeing more and more customers do that,” says Alan Hase, chief development officer at network fabric provider Big Switch Networks. One Big Switch customer he cites adopted its “pod network” architecture, where instead of traditional switches and routers, Big Switch maps logical routing (below) onto self-contained collections of compute, network, and storage resources. It’s academic to make those resources into Kubernetes clusters, he says.

Image: Big Switch Networks

The next step for that customer was to designate some pod networks for testing, in entirely virtual address spaces, and others for production. Such testing spaces may become necessary as enterprises realize that the network applications Kubernetes orchestrates are networks unto themselves.

“We see a number of customers that use the public cloud as a development environment,” Hase says. “Because it’s elastic, when you’re developing a new set of applications, you may not know exactly how much server, storage, networking resources you need. Once they perfect it there, they move it on-prem into a Big Switch environment, because from a networking perspective, they have the same kinds of tools, multi-tenancy, and operational control that they had in the public cloud.”

“What we’re seeing now is very, very large networks, including network operations teams that are, at this point now, very comfortable developing their own software environments,” Dell EMC’s Baher says. He drew a profile of organizations with these large networks as … well, very large: hyperscale data centers, major telcos and service providers, and a select few large enterprises with an above-average interest in communications.

That’s the exact profile Barefoot’s Doe drew for us, citing two such large enterprises by name: Goldman Sachs and Fox Broadcasting.

The Reluctant Embracer

In March 2018, after years of being singled out as the target of all this disruption, Cisco decided to adopt network disaggregation as an option, in a move it characterized at the time as an “embrace.” Speaking with us more recently, Cisco again invoked the term “embrace,” although carefully applying an asterisk … along with a dagger, a double-dagger, and a book full of endnotes.

“I think a majority of our customers want to consume networking innovation in an integrated-systems fashion,” says Sumeet Arora, Cisco’s senior VP and general manager of service provider network systems. “They’re focused on their business outcomes. This small subset are the ones who are willing to take on more. When you go into consuming innovation in a disaggregated fashion — software from one partner, hardware maybe from another partner — you have to take on more lifecycle management, more integration, and other technology aspects. Clearly … that requires them to invest in a specific skill set and specific capabilities, so they can manage that lifecycle.”

In other words, disaggregation is a trek into an unruly jungle, as Cisco perceives it, but if you’re brave enough to tame it — and have the cash on hand to support the training it will require — who is Cisco to say no?

Image: Cisco

In March 2018, Cisco began offering its IOS XR operating system on a disaggregated basis. In announcing this, Arora was careful to point out that most Cisco service provider customers prefer “360-degree partnerships” (Cisco’s version of “end-to-end solutions” in a world with multiple ends), but he then said Cisco had essentially refactored IOS XR and its other network operating systems (above), not just to enable disaggregated setups but also to make them accessible through APIs.

“To be honest, we are very sincere in our approach. We are definitely not doing this out of any pressure or any force,” Arora tells Data Center Knowledge. “Actually, we are very, very confident in every point of innovation in the networking stack, whether it’s the silicon, the optics, the network operating system, hardware, or the automation stack — whether they are all combined together or considered separately. We took the time to build our network operating system capability in a way that’s easier to consume separately, if needed.”

What’s a lucrative market to one vendor may be a “small subset” to another. Disaggregated network hardware is a reality of this market, probably a permanent one. What is truly at issue here is whether there’s a valid case to be made for both disaggregated and aggregated architectures to continue to co-exist.