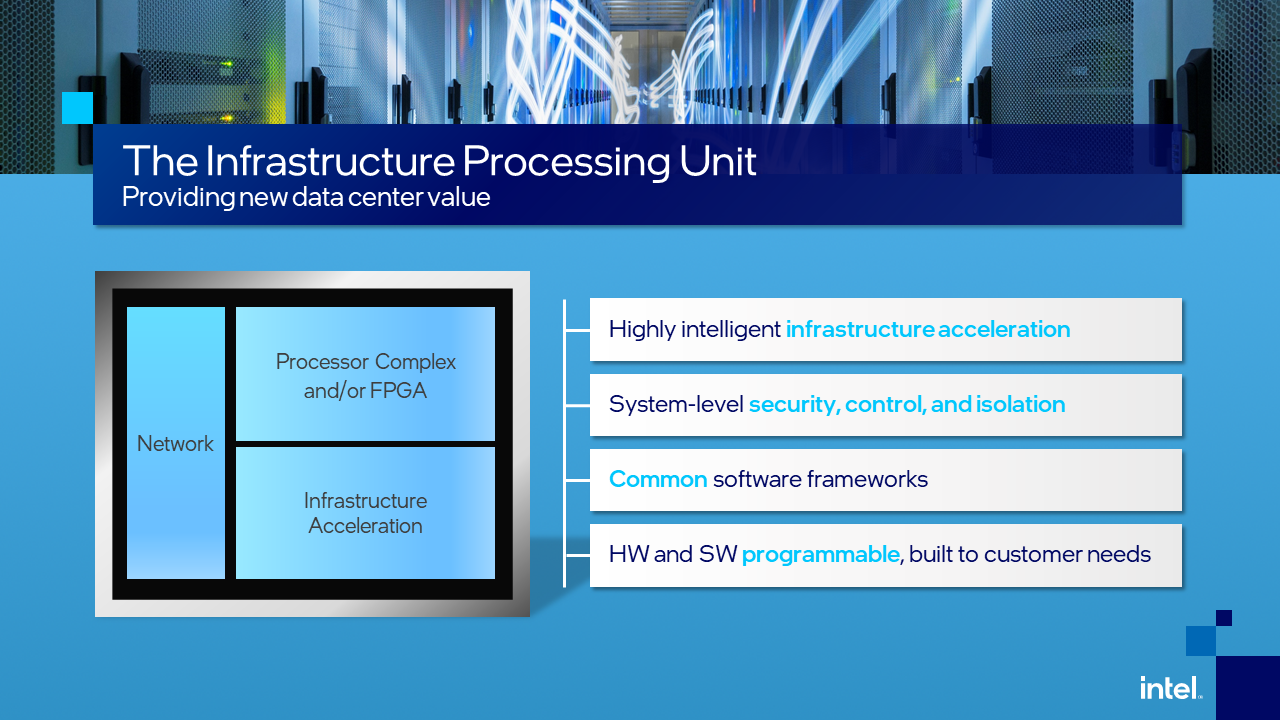

In the clearest confirmation to date that the “C” in “CPU” may soon no longer stand for “central,” Intel formally announced it has a future “vision” of server and network management functions being processed on a device outside the Xeon central processor. Calling such a device an Infrastructure Processing Unit (IPU), Intel now says this as-yet-unnamed component will enable cloud service operators to adopt a new, more flexible, virtualization model.

“It’s an evolution of our SmartNIC product line,” said Navin Shenoy, Intel’s Executive Vice President and General Manager for the Data Platforms Group. “When coupled with a Xeon microprocessor, it will deliver highly intelligent infrastructure acceleration. And it enables new levels of system security, control, isolation, to be delivered in a more predictable manner.”

Today’s move completes Intel’s pivot from its 2019 position on network processors, when it produced FPGA-based network interface cards (NICs) with attached programmability by way of an ASIC. Last October, amid Nvidia’s christening of Mellanox-based SmartNIC cards as “DPUs,” and Xilinx’s pending move toward elevating its SmartNICs to the role of co-processors (which took place last February), Intel formally dubbed its FPGA-based network cards “SmartNICs,” joining that trend, albeit a bit late.

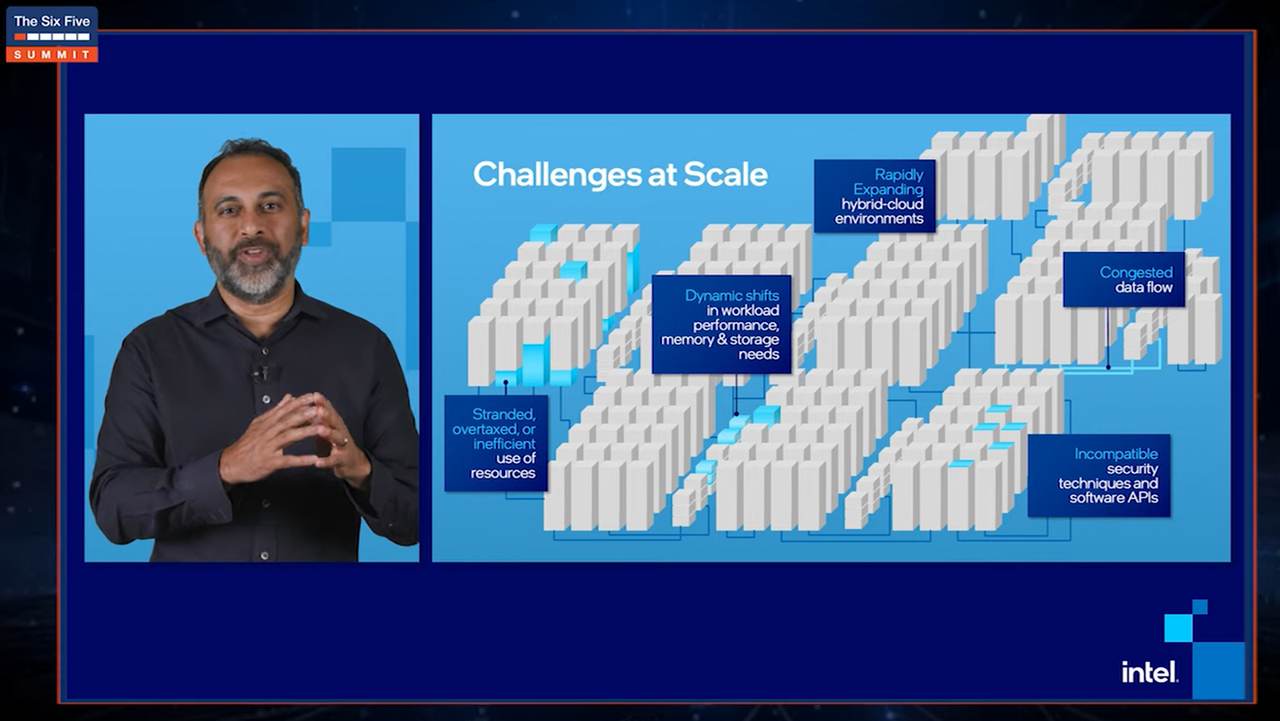

Now Intel is joining the “xPU” party, which signals a huge strategic shift for the company, now led by its legendary former CTO and former VMware CEO, Pat Gelsinger. Even in the throes of the pandemic, Intel’s executives and managers were defending the CPU as the principal deliverer of power and performance while, at the same time, paving a new, post-Moore’s Law route for the company. Acknowledging that an IPU can assume workloads from the CPU means Intel is no longer afraid to say that the CPU can be overtaxed with system and network management workloads.

“By managing infrastructure in this manner,” Shenoy continued, “IPUs can scale to have direct access to memory and storage, and create just more efficient computing, right? Jevons Paradox applies.”

That refers to an observation made by the 19th-century British economist William Stanley Jevons: namely, that increasing the efficiency with which a resource is used tends to increase, rather than reduce, total consumption of that resource. It’s a bit older and dustier than Moore’s Law, but it may have to serve fill-in duty until someone produces a more modern theory for Intel’s economies of scale.

“This portfolio is built around understanding the constraints that rising demand provides, and then addressing them and knocking them off,” remarked Lisa Spelman, Intel’s vice president and general manager for Xeon products, in a recent interview with Data Center Knowledge.

“Our SmartNICs help you deal with infrastructure management,” Spelman continued, “inside of the node or the rack. And then some of the work that we’re doing with CPU and XPU leads towards optionality of disaggregated acceleration: multiple nodes working as a system put together.”

Temptingly omitted from Intel’s official announcement Monday is any use of the word “server” outside of a historical context. This leaves the door wide open for Intel to not only adopt, but collaborate in the production of, disaggregated components. As Spelman described them, these would be independent clusters of pooled resources, probably designed for rack-scale architectures.

“It used to be that all compute was done on CPUs. That’s clearly changing,” remarked Guido Appenzeller, Intel’s recently appointed CTO for its Data Platforms Group, in an interview with Data Center Knowledge. “We’re seeing GPUs emerging, AI accelerators, the IPU. We’re seeing more diversity, more heterogeneity. That’s definitely a trend, and one that we are embracing.

![DataCenter_of_theFuture_slide [1200 px].jpg](/sites/datacenterknowledge.com/files/DataCenter_of_theFuture_slide%201200%20px.jpg)

“If you look forward at the data center of the future,” Appenzeller continued, “the classic server model… is too rigid. This idea that a CPU needs to sit between any accelerator — that an accelerator needs to be connected to the network via CPU, then networking card, then fabric — I think that no longer holds. In the future, it’s going to get a lot more complicated. We’re seeing a couple of flavors of that. We see an infrastructure processing unit that sits in front of these accelerators of storage. And in the AI space, we’re seeing private fabrics, where you have a couple of accelerator cards that have their own fabric, because they need to move such large amounts of memory at super-low latencies.”

As hyperscale servers transition into platforms that support communications centers, latencies become both more measurable and more pronounced. The dependability of a CPU for providing enterprise-class workloads may occasionally be measured in seconds, but once the tools being used start measuring in milliseconds, you know you’re dealing with the constraints of a communications network.

And these constraints may already have become too rigid and demanding for a processor design that, after all, is only one step up from the ISA bus created for the IBM Personal Computer. The dream of many server makers, which some are willing to say aloud, is for memory to become addressable by means of a fast network fabric that would replace the system bus.

It won’t make much sense for the CPU — today, the arbiter of the system bus — to shift its management responsibilities to the network fabric. Embracing the role of an infrastructure processor, whether you use Intel’s letter for it or Nvidia’s, may be the biggest step yet towards networking DRAM into rack-scale boxes. That could break the hyperscale server market wide open — so wide that the individual server would no longer be the ruling form factor.

“We’ve already deployed IPUs using FPGAs,” remarked Navin Shenoy during Monday’s announcement, “in very high volume at Microsoft Azure. And we recently announced partnerships with Baidu, JD Cloud, and VMware as well.”

Shenoy delivered his announcement as part of a Six Five Summit session titled “Why You Have Been Thinking About the Future of the Data Center All Wrong.” The “You,” in this context, is presumably you, the reader, blamed for having perceived servers merely as boxes with service buses and network interfaces for all these years. (Shame, shame on you, for letting yourself be misled by… well, by Intel.)

The text of Intel’s formal announcement categorizes IPUs as infrastructure accelerators alongside XPUs (capital “X”), which it classifies as “for application-specific or workload-specific acceleration.” Intel acquired FPGA accelerator maker Altera in 2015, and gave its FPGAs a seat in the front row during its 3rd-gen Xeon rollout last April.

Intel has also been hinting about a probable upgrade to the role of its Optane solid-state memory units. Grouped together with CPU, XPU, and now IPU, the company clearly looks to be assembling a “Xeon platform,” probably with its own logo program. One can foresee conventional servers, as well as future Xeon-based, disaggregated, rack-scale service appliances, carrying an upscaled Intel certification logo, perhaps with a slogan such as “Xeon-driven.”

Such a marketing program could put Intel back on a one-to-one level against fast-developing competition from Arm processor makers such as Ampere, giving high-volume customers such as Microsoft Azure clearer, more familiar, and perhaps less anxiety-inducing, choices. Hopefully by that time, those customers won’t be thinking of the future of the data center all wrong.

On Monday, Intel immediately began branding its existing SmartNIC product line, as well as partner-built SmartNICs with Intel Stratix FPGA processors built in, as “Infrastructure Processing Units from Intel.” The existing Silicom N5010 quad-port 100-gigabit network adapter, for example, now appears on Intel’s Web site, listed as an “IPU adapter.”