Hyperscale data center operators have a reputation for doing things differently than more traditional enterprises. In most cases, it’s the unprecedented scale of their infrastructure that pushes them to innovate. At some point, each of them reached a size at which solutions already on the market either didn’t work or were prohibitively expensive.

In that world, even the simplest and most mundane functions require automation. At Alphabet’s Google, arguably the world’s first hyperscaler, one of the latest data center operations functions to get automated is the destruction of defunct hard drives.

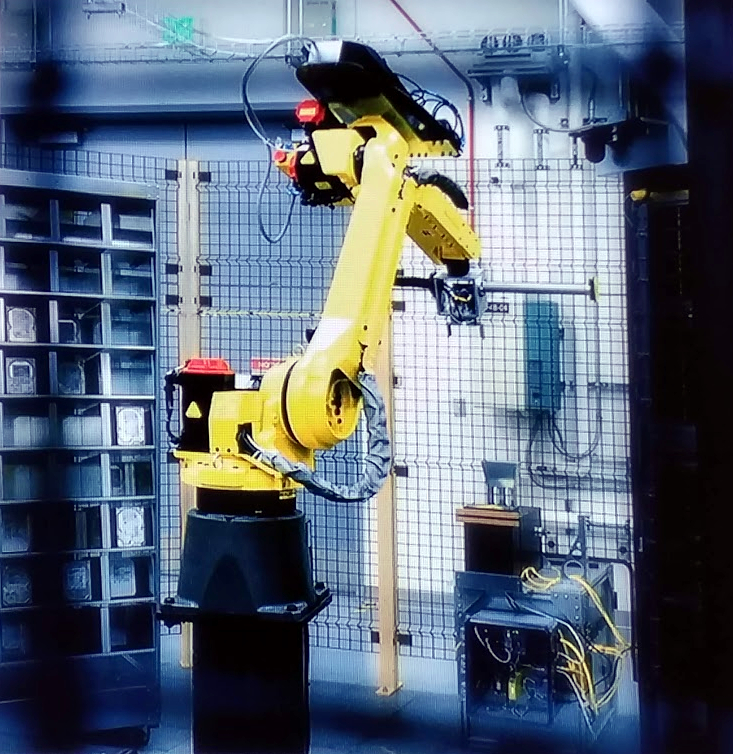

Unlike the other, more consequential function the company recently automated – real-time tuning of its data centers’ cooling systems for efficiency – disk destruction doesn’t require machine learning. Google engineers simply looked at a number of existing industrial robots used at manufacturing plants, found the best fit, and adopted it for hard-drive annihilation.

“That’s an industrial robot,” Joe Kava, Google’s VP of data centers, told Data Center Knowledge in an interview. They’re the same robots you might see at an automotive plant, he explained. “They’re just high-throughput.”

Decommissioning hard drives may sound mundane, but it’s one of the most important functions of a data center operator, especially one that handles sensitive data of billions of people, businesses, and governments around the world. Simply wiping a disk or an SSD before it’s discarded is not enough. A savvy hacker can sometimes recover some of the data it used to store.

Google first detailed its process for this back in 2011. A company-produced video showed wiped drives being punctured with a steel piston and then thrown into an industrial shredder. The tiny pieces of plastic and metal were then boxed and recycled.

What happens to each drive being replaced in the company’s data centers today is still the same. What’s different is who’s doing it. It’s now done by robots in what Google calls a “fully-automated disk-erase environment,” Kava said.

A Google data center engineer manually throwing a hard drive into a shredder

Not only does automation increase throughput, it also helps with chain of custody, or tracking everyone who’s handled a drive from the moment it’s installed in the data center to the moment it’s turned into a pile of shavings. The fewer people touch the servers and the drives, the shorter and easier to manage that chain of custody is, he explained.

There isn’t a constant stream of drives that need to be destroyed in Google data centers. Drive failures are a normal part of day-to-day operations, but drives don’t fail so often that human technicians cannot keep up with the shredding.

Besides, the company only shreds drives that “can’t be verified as 100 percent wiped,” Kava said. (It sells the verifiably clean ones to companies that give them a second life.)

When the robots really come in handy is during big wholesale hardware upgrades. “When we really need the big throughput is when we’re saying OK, we’re going to go and retrofit our entire fleet of two-terabyte drives, we’re going to take those out … and we’re going to make 10-terabyte drives the standard, or whatever,” he said. “And that’s when the robotics are very useful.”

A photo of a robot destroying hard drives in a Google data center, shown by Joe Kava, the company’s VP of data centers, during a presentation at Google Cloud Next 2018

In July, speaking from the stage at the Google Cloud Next conference in San Francisco, Kava said Google has automated many processes that have to do with infrastructure but not most of the day-to-day data center operations.

Some things, like inventory management, supply-chain fulfillment, and server assembly, lend themselves well to using robotics. Those processes are heavily automated at Google.

The company also has “a lot of automated scripting that helps direct our [data center] technicians,” letting them know what’s wrong with a particular server, for example, before they service it, Kava said.

At least today, robots don’t have the kind of dexterity required to pull out a server, disconnect the cables, and so on. Perhaps that could change in the future, he said, but “today, the vast majority of it is still run by people.”