If you’re running a cloud platform at Microsoft’s scale (30-plus regions around the world, with multiple data centers per region) and you want it to run on the best data center hardware, you typically design it yourself. But what if there are ideas out there -- outside of your company’s bubble -- that can make it even better? One way to find them is to pop your head out as much as possible, but which way do you look once you do? There’s another way to do it, and it’s proven to be quite effective in software. We’re talking about open source of course, and Microsoft -- a company whose relationship with open source software has been complicated at best -- has emerged as a pioneer in applying the open source software ethos to hardware design.

Facebook, which has been a massive force in open source software, became a trailblazer in open source data center hardware in 2011 by founding the Open Compute Project and using it to contribute some of its custom hardware and data center infrastructure designs to the public domain. But there’s been a key difference between Facebook’s move and the way things work in open source software. The social network has been open sourcing completed designs, not trying to crowdsource engineering muscle to improve its infrastructure. Late last year, Microsoft, an OCP member since 2014, took things further.

In November, the company submitted to the project a server design that at the time was only about 50 percent complete, asking the community to contribute as Microsoft takes the design to 100 percent. Project Olympus (that’s what this future cloud server is called) is not an experiment. It is the next-generation hardware that will go into Microsoft data centers to power everything from Azure and Office 365 to Xbox and Bing, and it’s being designed in a way that will allow the company to easily configure it to do a variety of things: compute, data storage, or networking.

Learn about Project Olympus directly from the man leading the charge next month at Data Center World, where Kushagra Vaid, Microsoft’s general manager for Azure Cloud Hardware Infrastructure, will be giving a keynote titled Open Source Hardware Development at Cloud Speed.

There’s a link between the design’s modularity aspect and the open source approach. Microsoft wants to be able to not only configure the platform for different purposes by tweaking the usual knobs, such as storage capacity, memory, or CPU muscle, but also to have a single platform that can be powered by completely different processor architectures -- x86 and ARM -- and it wants to have multiple suppliers for each -- Intel and AMD for x86 chips, Qualcomm and Cavium (and potentially others) for ARM. The sooner all the component vendors get involved in the platform design process, the faster a platform will emerge that’s compatible with their products, goes the thinking.

“Instead of having a team do everything in-house, we can work with these folks in the ecosystem and then bring the solutions to market faster, which is where differentiation is,” Kushagra Vaid, general manager for Azure cloud hardware infrastructure, said in an interview with Data Center Knowledge. “So, it’s been a completely different development world from that standpoint versus what we used to do.”

Making Data Centers Cheaper to Operate

Microsoft has already seen the benefits of going the Open Compute route with its current- and previous-generation hardware developed in collaboration with suppliers. Project Olympus widens the pool of potential engineering brainpower it can tap into. About 90 percent of the hardware Microsoft buys today is OCP-compliant, meaning it fits the basic standard parameters established by the project, such as chassis form factors, power distribution, and other specs. It hasn’t always been this way.

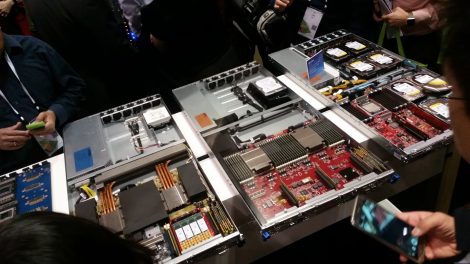

Microsoft's Project Olympus servers on display at the Open Compute Summit in Santa Clara in March 2017 (Photo: Yevgeniy Sverdlik)

The company started transitioning to a uniform data center hardware strategy for its various product groups around the same time it joined OCP. The goal was to reduce cost by limiting the variety of hardware the groups’ infrastructure leads were allowed to use. And Microsoft decided it would make that hardware adhere to OCP standards. So far, Vaid likes the results he’s seen.

Standardization has helped the company reduce hardware maintenance and support costs and recover from data center outages faster. A Microsoft data center technician no longer has to be acquainted with 10 to 20 different types of hardware before they can start changing hard drives or debugging systems on the data center floor. “It’s the same hardware, same motherboards, same chassis, same racks, same management system, it’s so much simpler,” Vaid said. “They spend less time to get the servicing done. It means fewer man hours, faster recovery times.”

One Power Distribution Design, Anywhere in the World

Project Olympus is the next step in Microsoft’s pursuit of a uniform data center infrastructure. One of the design’s key aspects is universal power distribution, which allows servers to be deployed in colocation data centers anywhere in the world, regardless of the local power specs, be they 30 Amp or 50 Amp, 380V, 415V, or 480V. The same type of rack with the same type of power distribution unit can be shipped for installation in Ashburn, London, Mumbai, or Beijing. The only thing that’s different for each region is the power adaptor.
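To get a rough sense of the range a single power distribution design has to cover, here is a minimal Python sketch that works out the apparent power of each voltage and current combination mentioned above. It rests on assumptions the article does not state: that these are balanced three-phase feeds, that the voltages are line-to-line, and that the currents are per-phase. The function names are hypothetical and none of this describes the actual Project Olympus PDU.

```python
import math

# Feed specs named in the article. Treating them as three-phase
# line-to-line voltages and per-phase currents is an assumption.
VOLTAGES = [380, 415, 480]  # volts
CURRENTS = [30, 50]         # amps


def three_phase_kva(volts: float, amps: float) -> float:
    """Apparent power of a balanced three-phase feed, in kVA.

    Uses the standard relation S = sqrt(3) * V_line_to_line * I_line.
    """
    return math.sqrt(3) * volts * amps / 1000


def feed_table() -> dict:
    """Map each (volts, amps) pair to its apparent power, rounded to 0.1 kVA."""
    return {
        (v, a): round(three_phase_kva(v, a), 1)
        for v in VOLTAGES
        for a in CURRENTS
    }


if __name__ == "__main__":
    for (v, a), kva in feed_table().items():
        print(f"{v} V / {a} A -> {kva} kVA")
```

Under those assumptions, the largest feed delivers roughly twice the apparent power of the smallest, which gives a sense of the spread one rack design would have to accommodate.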

This helps Microsoft get its global hardware supply chain in tune with data center capacity planning, and few things have more bearing on the overall cost of running a hyper-scale cloud -- and, as a function of cost, end-user pricing -- than good capacity planning. The less time passes between the moment a server is manufactured and the moment it starts running customer workloads in a data center, the less capital is stranded, which is why it’s nice to have the ability to deliver capacity just in time, when it’s needed, as opposed to far in advance to avoid unexpected shortages. “Essentially we can build a rack one time, and after it’s built we can very late in the process decide which data center to send it to, without having to worry about disassembling it, putting in a new PDU,” Vaid said. “It all delays the deployment.”

Taking Cost Control Away from Vendors

If the Project Olympus design was 50 percent done in November, today it’s about 80 percent complete. Vaid wouldn’t say when Microsoft is expecting to start deploying the platform in production. The company still maintains a degree of secrecy around timing of deployment of its various technologies.

He also had no comment on the report that surfaced earlier this month saying Hewlett Packard Enterprise was expecting Microsoft to cut back on the amount of data center hardware it would buy from the IT giant. The report was based on anonymous sources, and Vaid pointed to the fact that HPE was listed as one of the solution providers involved in Project Olympus.

HPE, of course, was one of several on the list, along with Dell, ZT Systems, Taiwanese manufacturers Wiwynn and Quanta, and China’s Inspur. Having many interchangeable suppliers is one of the biggest reasons behind OCP to begin with. The hyper-scale data center users drive the design, while the suppliers compete for their massive hardware orders, leaving them little room to differentiate other than on price and the time it takes to deliver the goods. That flips the world upside down for companies like HPE and Dell, which until not too long ago were enjoying market dominance. “We have full control of the cost,” Vaid said. “We can take what we want, we can take out what we don’t want, and the solution providers are just doing the integration and shipping.”