One way a service like Facebook grows and maintains popularity is by ensuring high performance for its users. If it took too long to upload or view photos on Facebook, for example, the social network would probably not be growing as quickly as it has been.

Optimizing everything for performance is a costly exercise, however, and at Facebook’s scale, it is an extremely costly exercise. The good news is, at that scale, even a small infrastructure efficiency improvement can translate into millions of dollars saved.

The company’s infrastructure team, including software, hardware, and data center engineers, spends a lot of its time thinking about where that next degree of efficiency is going to come from. Early last year, the product of one such project came to life.

Two Facebook data centers designed and built specifically to store copies of all user photos and videos started serving production traffic. Because they were optimized from the ground up to act as “cold storage” data centers for a very specific function, Facebook was able to substantially reduce its data center energy consumption and use less expensive equipment for storage.

This week, the Facebook team that designed these cold storage facilities in Prineville, Oregon, and Forest City, North Carolina, shared details about the design and the savings they were able to achieve.

Data Center Design Optimized for Single Purpose

The way Facebook ensures user content is always available and retrieved quickly is by storing lots and lots of copies of every media file in its data centers. Copies of all those files are stored in the primary Facebook data centers and in the cold storage facilities.

A newer, more frequently accessed file, however, gets more copies in the “hot” data centers than older files that are not in as much demand. The main function of the cold storage sites is to make sure a file is always available for retrieval regardless of how many copies of it are stored in a hot data center, Kestutis Patiejunas, a Facebook software engineer who’s been involved in the cold storage project from day one, explained.

Because they store replicated files, the cold storage facilities could be built without any redundant electrical infrastructure or backup generators that are traditionally present in data centers. That’s one way the team was able to cut cost.

The cold storage systems themselves are a modified version of the Open Vault, a Facebook storage design open sourced through its Open Compute Project initiative. The biggest and most consequential modification was making it so that only one hard drive in a tray was powered on at a time.

A modified Open Rack, the cold storage rack has fewer power supplies, fans, and bus bars (Photo: Facebook)

The storage server can power up without sending power to any of the drives. Custom software controls which drive powers on when it is needed.

This way, a cold storage facility only needs to supply enough power for six percent of all the drives it houses. Overall, the system needs one-quarter of the power traditional storage servers need.
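The one-drive-at-a-time rule can be illustrated with a short sketch. This is not Facebook's control software, which the article does not describe in detail; the `Tray` class, its method names, and the tray size of 15 drives are all assumptions made for illustration.

```python
class Tray:
    """Hypothetical model of a drive tray in which at most one drive has power."""

    def __init__(self, drive_count):
        if drive_count < 1:
            raise ValueError("a tray needs at least one drive")
        self.drive_count = drive_count
        self.powered = None  # index of the drive that currently has power, if any

    def power_on(self, index):
        """Give power to one drive; any previously powered drive loses it."""
        if not 0 <= index < self.drive_count:
            raise IndexError("no such drive in this tray")
        # Overwriting the single slot enforces the one-drive-at-a-time rule.
        self.powered = index

    def power_off(self):
        """Cut power to whichever drive is on."""
        self.powered = None

    def powered_fraction(self):
        """Share of this tray's drives drawing power right now."""
        return (0 if self.powered is None else 1) / self.drive_count


# 15 drives per tray is an assumed figure, not one stated in the article.
tray = Tray(drive_count=15)
tray.power_on(3)
tray.power_on(7)  # drive 3 is powered down in favor of drive 7
print(tray.powered, round(tray.powered_fraction() * 100, 1))
```

With the assumed tray size, one powered drive is about 6.7 percent of the tray, which is in the neighborhood of the six percent figure the team cited.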

This allowed the team to further strip down the design. Instead of three power shelves in the Open Rack, a cold storage rack has only one, and there are five power supplies per shelf instead of seven. The number of Open Rack bus bars was reduced from three to one.

Because the hard drives are never all powered on at the same time, the number of fans per storage node was reduced from six to four.
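To put the hardware reductions side by side, here is a small tally built only from the counts quoted above. The `RackConfig` name and its fields are made up for this sketch, and the power supply totals are derived by multiplying shelves by supplies per shelf rather than taken from Facebook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackConfig:
    """Illustrative container for the component counts quoted in the article."""
    power_shelves: int
    supplies_per_shelf: int
    bus_bars: int
    fans_per_node: int

    @property
    def total_power_supplies(self):
        # Derived figure: shelves multiplied by supplies on each shelf.
        return self.power_shelves * self.supplies_per_shelf


open_rack = RackConfig(power_shelves=3, supplies_per_shelf=7, bus_bars=3, fans_per_node=6)
cold_rack = RackConfig(power_shelves=1, supplies_per_shelf=5, bus_bars=1, fans_per_node=4)

print(open_rack.total_power_supplies, cold_rack.total_power_supplies)
```

By this arithmetic, a cold storage rack carries 5 power supplies where a standard Open Rack would carry 21.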

10+10=14

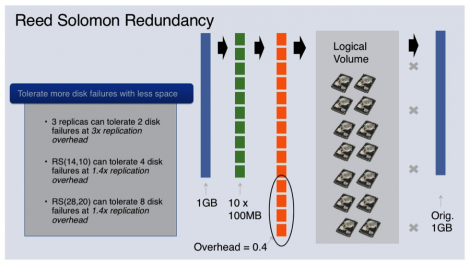

In addition to optimizing the hardware architecture, Patiejunas and his colleagues used a technique called Reed-Solomon error correction to reduce the amount of data storage capacity needed to store copies. The technique basically allows a user to store less than a full redundant copy of a file in a different location but still be able to recover it in full if one of the locations becomes unavailable.

If, for example, a file is split into 10 parts, the number of parts needed to store it across two locations would be 14 instead of the full 20.
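The saving in that example can be worked out directly. The sketch below only does the bookkeeping and does not implement Reed-Solomon coding itself; the split into 10 data parts plus 4 extra parts is inferred from the 14 total in the example, and the function name is hypothetical.

```python
from fractions import Fraction


def storage_overhead(data_parts, extra_parts):
    """Parts stored per part of original data, as an exact fraction."""
    if data_parts < 1 or extra_parts < 0:
        raise ValueError("need at least one data part and no negative extras")
    return Fraction(data_parts + extra_parts, data_parts)


# Full second copy: 10 data parts plus 10 duplicate parts.
full_copy = storage_overhead(10, 10)
# Error-correction approach from the example: 10 data parts plus 4 extra parts.
erasure_coded = storage_overhead(10, 4)

# Fraction of capacity saved relative to keeping a full second copy.
saving = 1 - erasure_coded / full_copy

print(float(full_copy), float(erasure_coded), float(saving))
```

Under these assumptions, the encoded file takes 1.4 times its original size rather than 2 times, which is 30 percent less capacity than a full duplicate.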

Here’s a visual, courtesy of Facebook:

What’s Next?

While the cold storage setup is working well, and both data centers have lots of room to accommodate more data, Patiejunas and his team are already thinking about their next move. Besides adding another cold storage facility elsewhere in the U.S., one of the next steps would be to do cold storage replication across multiple data centers.

Today, data from a hot data center on the West Coast is backed up in a cold storage site on the East Coast and vice versa. The next step would be to apply the Reed-Solomon technique across multiple geographically remote cold storage sites, Patiejunas said.

When there is a third cold storage site, the topology would be virtually infallible. If data is spread across three data centers, a file will be available even if an entire site goes down completely. The chances that two out of three data centers will go down are very slim, he said.
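A rough calculation shows why a simultaneous two-site outage is so unlikely. The sketch assumes each site fails independently with the same probability, which real data centers may not satisfy, and the one percent outage probability is a made-up number for illustration, not a figure from Facebook.

```python
from math import comb


def prob_at_least_k_down(sites, k, p_down):
    """Chance that k or more of `sites` are down, assuming independent failures."""
    if not 0 <= p_down <= 1:
        raise ValueError("p_down must be a probability")
    return sum(
        comb(sites, j) * p_down**j * (1 - p_down) ** (sites - j)
        for j in range(k, sites + 1)
    )


# Hypothetical: each site is down 1 percent of the time.
p_one_site = 0.01
p_two_of_three = prob_at_least_k_down(sites=3, k=2, p_down=p_one_site)

print(p_two_of_three)
```

With those invented inputs, the chance of losing two or more of three sites at once comes out to roughly 0.03 percent, compared with 1 percent for any single site.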