NEW ORLEANS - Let's face it, supercomputers are cool. In a culture that’s impressed by big numbers, they serve as poster children for our technological prowess, crunching data with mind-blowing speed and volume. These awesome machines seem to have little in common with the laptops and tablets we use for our everyday computing.

In reality, say leaders of the high performance computing (HPC) community, supercomputers touch our daily lives in a wide variety of ways. The scientific advances enabled by these machines transform the way Americans receive everything from weather reports to medical testing and pharmaceuticals.

“HPC has never been more important,” said Trish Damkroger, a leading researcher at Lawrence Livermore National Laboratory and the chair of last week’s SC14 conference, which brought together more than 10,000 computing professionals in New Orleans. The conference theme of “HPC Matters” reflects a growing effort to draw connections between the HPC community and the fruits of its labor.

“When we talk at these conferences, we tend to talk to ourselves,” said Wilf Pinfold, director of research and advanced technology development at Intel Federal. “We don’t do a good job communicating the importance of what we do to a broader community. There isn’t anything we use in modern society that isn’t influenced by these machines and what they do.”

Funding Challenges, Growing Competition

Getting that message across is more important than ever, as America’s HPC community faces funding challenges and growing competition from China and Japan, which have each created supercomputers that trumped America’s best to gain the top spot in the Top 500 ranking of the world’s most powerful supercomputers. The latest Top 500 list, released at the SC14 event, is once again topped by China’s Tianhe-2 (Milky Way) supercomputer, followed by the Titan machine at the U.S. Department of Energy’s Oak Ridge National Laboratory.

The DOE just announced funding to build two powerful new supercomputers that should surpass Tianhe-2 and compete for the Top 500 crown by 2017. The new systems, to be based at Livermore Labs in California and Oak Ridge National Laboratory in Tennessee, will have peak performance in excess of 100 petaflops, and will move data at more than 17 petabytes a second - the equivalent of moving over 100 billion photos on Facebook in a second. The systems will use IBM POWER chips, NVIDIA Tesla GPU accelerators and a high-speed Mellanox InfiniBand networking system.

These projects mark the next phase in the American HPC community’s goal of deploying an “exascale” supercomputer, which can compute at 1,000 petaflops (a petaflop is one quadrillion floating-point operations per second), as opposed to the 33 petaflops achieved by Tianhe-2. The latest roadmap envisions a prototype of an exascale system by 2022.
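For a rough sense of scale, the figures quoted above can be set side by side. The short Python sketch below uses only numbers from this article (33 petaflops for Tianhe-2, 100 petaflops for the planned DOE systems, 1,000 petaflops for exascale, 17 petabytes a second, 100 billion photos). The decimal unit prefix, the variable names and the `speedup` helper are assumptions made for illustration, and because the article gives several of these figures as "more than" or "over," the results are approximate.

```python
# Back-of-the-envelope comparison using only figures quoted in the article.
# Assumption: "peta" is the decimal (SI) prefix, 10**15.
PETA = 10**15

tianhe2_pflops = 33         # Tianhe-2, per the article
planned_doe_pflops = 100    # planned DOE systems ("in excess of 100")
exascale_pflops = 1_000     # exascale target


def speedup(target_pflops, baseline_pflops):
    """Return how many times faster the target is than the baseline."""
    return target_pflops / baseline_pflops


exa_vs_tianhe2 = speedup(exascale_pflops, tianhe2_pflops)
doe_vs_tianhe2 = speedup(planned_doe_pflops, tianhe2_pflops)

# Data movement: 17 petabytes a second, likened to 100 billion photos a second.
bandwidth_bytes_per_s = 17 * PETA
photos_per_s = 100 * 10**9
implied_photo_bytes = bandwidth_bytes_per_s / photos_per_s

print(f"Exascale vs. Tianhe-2: about {exa_vs_tianhe2:.0f}x")
print(f"Planned DOE systems vs. Tianhe-2: about {doe_vs_tianhe2:.1f}x")
print(f"Implied size per photo: about {implied_photo_bytes / 1000:.0f} KB")
```

On these numbers, an exascale machine would be roughly 30 times faster than Tianhe-2, and the photo comparison implies an average photo size of about 170 kilobytes.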

Winds of Change in Washington

That type of rapid advance will require significant investment. Nearly three quarters of HPC professionals say it continues to be difficult for HPC sites to obtain funding for compute infrastructure without demonstrating a clear return on investment, according to a new survey from DataDirect Networks.

The largest funder of American supercomputing has been the U.S. government, which is in a transition with implications for HPC funding. Many of the projects showcased last week at SC14 focus on scientific research, including research areas that may clash with the political agenda of the Republican Party, which gained control of both the House and Senate in elections earlier this month.

A case in point: Climate change has been a focal point for HPC-powered data analysis, such as a new climate model unveiled last week by NASA. As noted by ComputerWorld, Sen. Ted Cruz of Texas is rumored to be in line to chair the Senate Subcommittee on Science and Space, which oversees NASA and the National Science Foundation. Cruz is a climate change skeptic who does not believe global warming is supported by data.

But there are many areas of supercomputing research that figure to be candidates for bipartisan support. An example was provided by Dona Crawford, associate director of computation at Lawrence Livermore Labs, one of three Department of Energy facilities focused on applying supercomputing for security research.

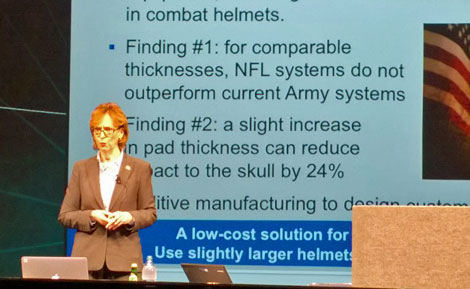

Dona Crawford, the Associate Director for Computation at Lawrence Livermore National Laboratory, discusses research on the safety of helmet technology for U.S. soldiers during a presentation at the SC14 conference. (Photo: Rich Miller)

Livermore was asked to determine if the helmets used by American soldiers are safer than those used by NFL football players (good news: they are). By using Livermore’s high-powered HPC systems to run simulations, Crawford’s team was able to determine that adding just one-eighth of an inch of additional padding to combat helmets would provide a 24 percent improvement in the protection offered to U.S. soldiers on the battlefield. “People's lives depend on our computing technologies,” said Crawford. “Game-changing solutions require relentless advancements in computing power.”

SC14 General Chair Trish Damkroger says HPC-powered data analysis can help predict the path and intensity of "storms of freakish power and size" like Superstorm Sandy. (Photo: Rich Miller)

Another focus of HPC research is meteorology, particularly the ability to track storms of “freakish strength and power,” Damkroger noted. Storms like Superstorm Sandy underscore the need for detailed predictive models that can calculate not only the course of a hurricane, but also its power at landfall – which historically has been a harder computational challenge. Identifying whether storms are likely to strengthen or weaken prior to landfall can guide public officials in evacuation strategies, where advance notice is critical as more Americans reside in coastal areas.

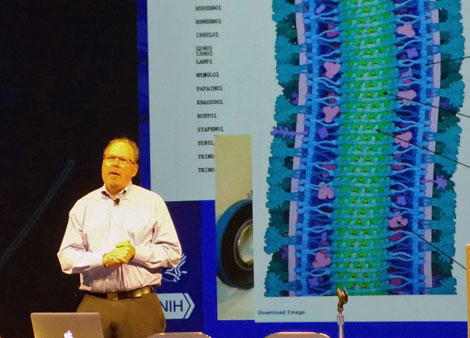

Philip Bourne, Associate Director for Data Science at the National Institutes of Health, displays an analysis of the proteins in the Ebola virus that was accelerated through the use of high performance computing. Bourne spoke in one of the plenary sessions at last week's SC14 conference. (Photo: Rich Miller)

Advances in biomedical research were a key theme at SC14. HPC can bring powerful analytical tools to bear on large datasets of medical information, whether from patient records or clinical trials. With the cost of developing a new pharmaceutical project now estimated at more than $1 billion, drug companies are keen to find ways to refine their data crunching and analysis to focus their investment on the most promising technologies.

Philip Bourne, Associate Director for Data Science at the National Institutes of Health, said the NIH plans to create an ecosystem for biomedical research data, in which large datasets can be linked and analyzed using APIs and standards. He said the NIH is weighing whether this system will be opened to commercial cloud platforms, a step which could drive cost efficiencies, and represents a major opportunity for cloud service providers. The NIH includes 27 institutes and centers that collectively spend more than $1 billion a year on computing resources.

“HPC is going to be an integral part of the health care system,” said Bourne. “We’re now talking about precision medicine.”

Damkroger put a finer point on HPC's potential impact on healthcare. “I want my children to live in a world in which our most daunting diseases can be cured.”

That's the kind of connection at the heart of the "HPC Matters" theme. The conference committee hopes to further reinforce the value of supercomputing with the theme for the SC15 event in Austin: "HPC Transforms."