IBM first developed direct liquid cooling in the 1960s to cool mainframes, and the approach remained common in data centers until the 1980s, when complementary metal-oxide-semiconductor (CMOS) chips took hold. These chips could crunch through far more numbers per watt, and liquid cooling took a back seat to lower-cost cooling systems that used air for heat transfer.

Today, however, the idea of bringing liquid coolant directly to the heat source in the data center is enjoying something of a renaissance, according to a recent report by 451 Research titled “Liquid-Cooled IT: A Flood or Disruption for the Data Center?” While far from mainstream, direct liquid cooling has become a business-case foundation for several new companies, and the number of data centers with cooling requirements close to those of high performance computing is growing.

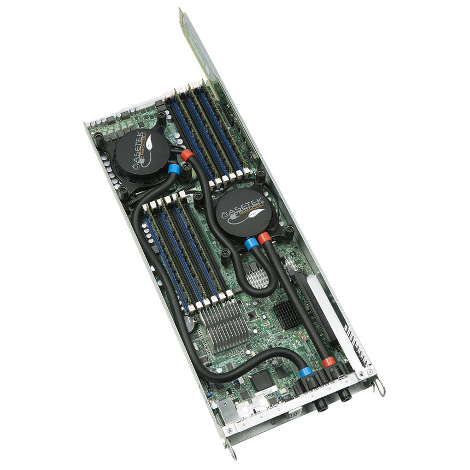

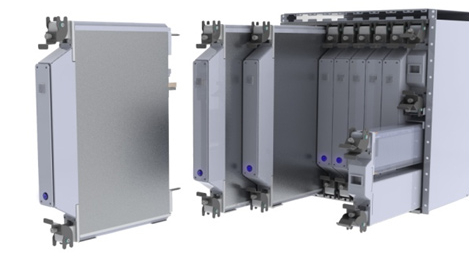

A UK company called Iceotope, which floods an insulated server chassis with dielectric fluid, was founded in 2009, has received several industry awards and raised $10 million in funding earlier this year. Another company, Denmark’s Asetek, which brings coolant directly to the processor via a small pipe, had a successful IPO on the Oslo Stock Exchange in 2013. There are also a number of U.S.-based companies in the space, including LiquidCool Solutions and Green Revolution Cooling. Overall, 451 counted between 15 and 20 suppliers whose products qualify as direct liquid cooling. Most of the smaller ones make from $5 million to $10 million in annual sales.

One of the biggest suppliers of dielectric fluid for liquid cooling of electronics is 3M. Its fluid is called Novec.

The big IT vendors have not lost interest in the technology either. IBM continues doing R&D in the space, and so do Fujitsu, HP, Dell and Intel. While the majority of these efforts are focused on the HPC market, there are signs of increasing use of “HPC-like systems” by enterprises. Driven by Big Data and other demanding applications, this trend could potentially open a door for direct liquid cooling vendors to help companies stand up high-performance systems in their existing traditional enterprise data centers.

Not the most popular kid in school

While the companies’ approaches to direct liquid cooling vary, the underlying concept is the same: make cooling more efficient by using a more efficient heat-exchange medium and by coupling cooling more closely with the heat source. The barriers to adoption are the same too. Customers worry about repairs and maintenance of the systems, the lack of standards and the general risk of introducing any disruptive technology.

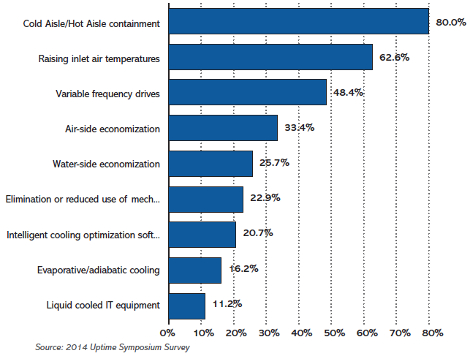

The report cites a survey conducted by the Uptime Institute, a 451 Group subsidiary, saying that of all cooling technologies considered “advanced,” adoption of liquid-cooled IT was the lowest: 11 percent of the whole. The category includes things like cold-aisle and hot-aisle containment, higher inlet-air temperatures, variable frequency drives, air-side economization and water-side economization, among others.

Here’s a detailed look at Uptime’s results:

Direct liquid cooling has a number of market trends working in its favor, however. One of them is the emergence of the web-scale data center operator category. Companies like Facebook and Google have “legitimized a less conservative approach to the design and operation of facilities and paved the way for use of technologies such as [direct liquid cooling] by other operators,” Andrew Donoghue, European research manager at 451 and the report's author, wrote.

Rack densities not going up as expected

What this technology does not have going for it is the industry’s overall power-density trend. Rack densities are not going up as quickly as they were expected to a few years ago. Median rack density in enterprise and colocation data centers is lower than 5 kW per rack, with the densest racks reaching 8 kW, according to the Uptime Institute. Such densities are well within the capabilities of air-based cooling systems, which makes the business case for direct liquid cooling less compelling.

Other barriers to adoption include fear of bringing liquid into an environment with lots of electronics and high-voltage power. There are also more and more data centers that rely to a great extent on outside air for cooling and run at higher ambient temperatures than normal. This is both good and bad for liquid cooling. On one hand, it rules out use of liquid cooling for low-density environments, which are much cheaper to cool using outside air. On the other hand, if the operator of a low-density data center that uses outside air wants to install some high-density racks, they can do so using direct liquid cooling without affecting the rest of the environment.

There are other concerns, such as growing interest in low-power servers powered by ARM or Intel's Atom chips, and the introduction of a single point of failure in the form of the cooling-liquid supply to a rack. But one of the biggest barriers is all the investment companies have already made in air-cooled data centers. “Operators do not want to risk moving to an unknown technology despite its efficiencies, and suppliers are reluctant to jeopardize sales of existing air-based equipment,” the report's author wrote.

Physics will not be enough

As Donoghue put it, “Even air-cooling apologists will admit that if the clock could be turned back liquid cooling is a more efficient way to cool data centers. Compelling physics alone, however, is not enough for most data center operators.”

Success of the technology will depend on continued backing from the IT vendors the industry trusts – the Dells, HPs and IBMs of the world – and on whether they, along with web-scale data center operators and HPC-facility operators, can demonstrate business benefits of direct liquid cooling that are compelling enough for the rest of the industry.