Winston Saunders has worked at Intel for nearly two decades in both manufacturing and product roles. Since 2006 he has been in his current role, leading server and data center efficiency initiatives. Winston is a graduate of UC Berkeley and the University of Washington. You can find him online at “Winston on Energy” on Twitter.

WINSTON SAUNDERS, Intel

The fun of being a data nerd is the irrepressible urge to look at facts in different ways, in the hope of gaining insights that were not previously evident.

The other day I was thinking about SPECpower data. I wondered if looking at the separate roles of power reduction and time reduction in overall compute efficiency might better convey what was happening.

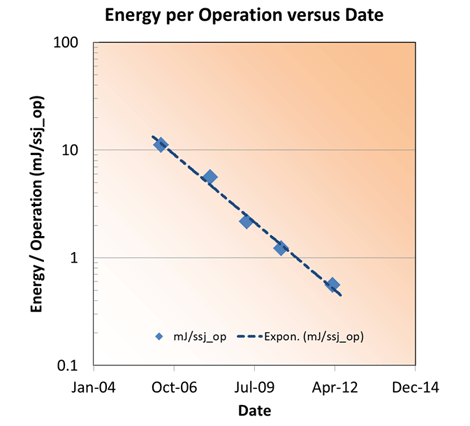

By now you should be familiar with the “Evolution of Energy Efficient Performance,” which reveals trends in server platform energy proportionality as measured by the SPECpower benchmark. You can refer to this chart if you aren’t familiar with the trend.

How Have These Improvements Been Accomplished?

Less obvious are the means by which the energy efficiency of computing has improved. To understand this, I thought I’d look at the data somewhat differently. Assuming a workload at 10% of peak, we can consider the time per operation (the reciprocal of operations per second) and the power in the following graph.

Each data point is labeled to correspond to the first figure. What is interesting is the stair-step pattern. From 2006 to 2008, we moved from 65 nm to 45 nm silicon technology, and from 2009 to 2010 we moved from 45 nm to 32 nm. In each case, the time to complete an operation decreased by about half. Complementing that, the periods from 2008 to 2009 and from 2010 to 2012 brought significant micro-architecture changes. These resulted in time reductions associated with performance gains, but also in significant power reductions.

What I like about this graph is that the overall improvement in energy efficiency is easily visualized as the product of time and power. These products are shown for 2006 and 2012 as the rectangles in the graph. The area of each rectangle is the energy per operation, and the impacts of proportional power architecture and performance on overall efficiency are evident.
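For readers who prefer arithmetic to rectangles, here is a minimal Python sketch of the same idea: energy per operation is the time per operation multiplied by the power drawn during that time. The function names and the two sets of input values are hypothetical, chosen only to illustrate the calculation; they are not SPECpower results.

```python
def energy_per_operation(ops_per_second: float, power_watts: float) -> float:
    """Return energy per operation in joules (time per operation x power)."""
    time_per_op = 1.0 / ops_per_second  # seconds per operation
    return time_per_op * power_watts    # joules = seconds x watts


def gain_factors(old_ops, old_watts, new_ops, new_watts):
    """Split the overall efficiency gain into a time factor and a power factor."""
    time_factor = new_ops / old_ops       # how much faster each operation completes
    power_factor = old_watts / new_watts  # how much less power is drawn meanwhile
    return time_factor, power_factor, time_factor * power_factor


# Hypothetical illustrative systems (assumed values, not measured data).
old_energy = energy_per_operation(ops_per_second=20_000, power_watts=200.0)
new_energy = energy_per_operation(ops_per_second=160_000, power_watts=80.0)
time_factor, power_factor, total_factor = gain_factors(20_000, 200.0, 160_000, 80.0)

print(f"old: {old_energy * 1e3:.2f} mJ/op, new: {new_energy * 1e3:.2f} mJ/op")
print(f"time x{time_factor:.1f}, power x{power_factor:.1f}, total x{total_factor:.1f}")
```

The ratio of the two rectangle areas equals the product of the time factor and the power factor, which is why a change that improves both at once compounds rather than merely adds.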

Energy per operation follows a strong trend versus the “system available” date from the SPECpower data. The exponential fit is equivalent to a 41% per year reduction in the energy per operation, or about a factor of twenty over the range shown. Imagine if we could tap into that kind of energy reduction per mile driven or per lumen generated by a lightbulb. Just to put the energy into a framework, 0.5 millijoules is the energy needed to light a 100-watt bulb for about 5 microseconds.
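The two pieces of arithmetic in that paragraph can be checked with a short Python sketch. It assumes the 41% reduction compounds once per year and that the range shown spans six years (2006 to 2012); both are my reading of the text rather than stated parameters of the fit, and the function names are hypothetical.

```python
def cumulative_reduction_factor(annual_reduction: float, years: float) -> float:
    """Factor by which energy per operation shrinks after compounding annually."""
    remaining_fraction = (1.0 - annual_reduction) ** years
    return 1.0 / remaining_fraction


def seconds_lit(energy_joules: float, bulb_watts: float) -> float:
    """How long a bulb of the given wattage runs on the given energy."""
    return energy_joules / bulb_watts


# Assumed: 41% per year compounded over six years (2006 to 2012).
factor = cumulative_reduction_factor(annual_reduction=0.41, years=6)
print(f"cumulative reduction: about x{factor:.0f}")

# 0.5 millijoules powering a 100-watt bulb.
duration = seconds_lit(energy_joules=0.5e-3, bulb_watts=100.0)
print(f"bulb stays lit for {duration * 1e6:.1f} microseconds")
```

Under those assumptions the cumulative factor lands in the low twenties, in line with the "about a factor of twenty" read off the chart, and the bulb comparison works out to 5 microseconds.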

So to summarize, the efficiency of two-socket servers has increased dramatically. The efficiency gains result from the increased performance and energy proportionality of the systems. Transitions in transistor architecture produce large performance gains, which reduce the time necessary to complete a given workload. Transitions in system architecture also produce performance gains, but in addition they lower the power consumption while the operation is being completed. This in some sense “doubles” the efficiency gains expected. The efficiency gain is easily visualized by looking at the time to complete an operation and the power used during that time.

Over the last few years, the tremendous focus on improving the efficiency of servers under realistic workload scenarios has resulted in a reduction of about 40% per year in the energy per operation.

These kinds of efficiency gains, I would claim, are a fundamental element driving our digital economy; a kind of “Jevons Paradox” on steroids.

Industry Perspectives is a content channel at Data Center Knowledge highlighting thought leadership in the data center arena.