Raghu Kondapalli is director of technology, focused on strategic planning and solution architecture, for the Networking Components Division of LSI Corporation. He brings extensive experience and deep knowledge of cloud-based, service provider, and enterprise networking, specifically in packet processing, switching, and SoC architectures.

RAGHU KONDAPALLI, LSI Corp.

This Industry Perspectives article is the second in a three-part series analyzing the network-related issues caused by the Data Deluge in virtualized data centers, and how those issues affect both cloud service providers and the enterprise. The first article focused on the overall effect server virtualization is having on storage virtualization and traffic flows in the data center network. This article dives deeper into the network challenges in virtualized data centers, as well as the network management complexities and the control plane requirements for addressing those challenges.

Server Virtualization Overhead

Server virtualization, combined with multi-core CPU technology, has enabled tens to hundreds of VMs per server in data centers. As a result, the volume of packet processing work, such as packet classification, routing decisions, and encryption/decryption, has increased sharply. Because discrete networking systems may not scale cost-effectively to meet these increased processing demands, changes are also needed in the network.

Networking functions implemented in software in network hypervisors are not very efficient, because x86 servers are not optimized for packet processing. The control plane therefore needs to be scaled by adding communications processors capable of offloading network control tasks. Both the control and data planes stand to benefit substantially from the hardware assistance that such function-specific acceleration provides.

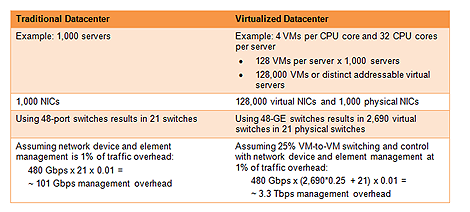

The table below shows the effect of virtualizing 1,000 servers on packet processing overhead. As shown, mapping each CPU core to four virtual machines (VMs), and assuming 1 percent traffic management overhead with a 25 percent east-west traffic flow, increases the network management overhead by a factor of 32 in this example of a virtualized data center.

This table shows the effect on network management overhead of virtualizing 1,000 servers.
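As a rough illustration of where a factor such as 32 can come from, the Python sketch below simply counts the network endpoints that must be managed before and after virtualization. The figure of eight cores per server is an assumption made for this example; it is not stated in the text, and the table itself may use different inputs. The sketch also leaves out the 1 percent overhead and 25 percent east-west parameters, whose exact role in the table is not reproduced here.

```python
def managed_endpoints(servers: int, cores_per_server: int, vms_per_core: int) -> int:
    """Number of VM endpoints the network has to classify, route, and police."""
    return servers * cores_per_server * vms_per_core


def endpoint_growth(servers: int, cores_per_server: int, vms_per_core: int) -> float:
    """Ratio of virtualized endpoints to the one-endpoint-per-server baseline."""
    return managed_endpoints(servers, cores_per_server, vms_per_core) / servers


SERVERS = 1_000        # from the example in the text
VMS_PER_CORE = 4       # from the example in the text
CORES_PER_SERVER = 8   # ASSUMPTION for illustration only, not from the text

total_vms = managed_endpoints(SERVERS, CORES_PER_SERVER, VMS_PER_CORE)
growth = endpoint_growth(SERVERS, CORES_PER_SERVER, VMS_PER_CORE)
print(f"{total_vms} VMs, {growth:.0f}x the endpoints")
```

Under these assumed inputs, 1,000 physical endpoints become 32,000 virtual ones, which is one plausible reading of the 32-fold growth in management overhead.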

Virtual Machine Migration

Support for VM migration among servers, either within one server cluster or across multiple clusters, creates additional management complexity and packet processing overhead. IT administrators may decide to move a VM from one server to another for a variety of reasons, including resource availability, quality-of-experience, maintenance, and hardware/software or network failures. The hypervisor handles these VM migration scenarios by first reserving a VM on the destination server, then moving the VM to its new destination, and finally tearing down the original VM.
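The three-step sequence described above (reserve, move, tear down) can be sketched as a small Python model. This is a simplified illustration rather than how any particular hypervisor implements migration; the `Server` class, its `capacity` limit, and the `migrate` function are hypothetical names introduced for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    capacity: int                                  # max VMs (hypothetical limit)
    vms: set = field(default_factory=set)          # VMs currently running
    reserved: set = field(default_factory=set)     # slots held for incoming VMs


def migrate(vm: str, source: Server, destination: Server) -> list:
    """Move vm from source to destination and return the steps performed."""
    if vm not in source.vms:
        raise ValueError(f"{vm} is not running on {source.name}")
    if len(destination.vms) + len(destination.reserved) >= destination.capacity:
        raise RuntimeError(f"{destination.name} has no free slot for {vm}")

    steps = []
    # Step 1: reserve a slot on the destination so it cannot be taken mid-move.
    destination.reserved.add(vm)
    steps.append("reserve")
    # Step 2: move the VM; the reservation becomes a running instance.
    destination.reserved.remove(vm)
    destination.vms.add(vm)
    steps.append("move")
    # Step 3: tear down the original instance on the source.
    source.vms.remove(vm)
    steps.append("teardown")
    return steps


host_a = Server("host-a", capacity=2, vms={"vm-1"})
host_b = Server("host-b", capacity=2)
performed = migrate("vm-1", host_a, host_b)
print(performed, host_a.vms, host_b.vms)
```

Each step in the real system also triggers control plane work (address updates, policy changes, statistics), which is where the additional overhead comes from.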

Hypervisors cannot always generate Address Resolution Protocol (ARP) broadcasts quickly enough to announce VM moves, especially in large-scale virtualized environments. The network can even become so congested with control overhead during a VM migration that the ARP messages fail to get through in a timely manner. Because rapid changes in connections, ARP messages, and routing tables have such a significant impact on network behavior, existing control plane solutions need to be upgraded to more scalable architectures.
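One common form of such a notification is a gratuitous ARP: a broadcast in which the sender and target IP addresses are both the VM's own address, so that switches and neighbors relearn where the VM now lives. The Python sketch below only builds the 42-byte Ethernet frame and does not send it. The MAC and IP addresses are made-up example values, and the choice of an ARP request (rather than a reply, or the reverse ARP some hypervisors use) is an assumption for illustration.

```python
import socket
import struct

BROADCAST_MAC = b"\xff" * 6
ETHERTYPE_ARP = 0x0806


def gratuitous_arp(vm_mac: bytes, vm_ip: str) -> bytes:
    """Build an Ethernet frame carrying a gratuitous ARP request for vm_ip."""
    ip = socket.inet_aton(vm_ip)
    ethernet_header = struct.pack("!6s6sH", BROADCAST_MAC, vm_mac, ETHERTYPE_ARP)
    arp_payload = struct.pack(
        "!HHBBH6s4s6s4s",
        1,            # hardware type: Ethernet
        0x0800,       # protocol type: IPv4
        6,            # hardware address length
        4,            # protocol address length
        1,            # operation: request
        vm_mac,       # sender hardware address
        ip,           # sender protocol address
        b"\x00" * 6,  # target hardware address (not meaningful here)
        ip,           # target protocol address equals the sender's
    )
    return ethernet_header + arp_payload


# Hypothetical VM addresses, for illustration only.
frame = gratuitous_arp(bytes.fromhex("020000000001"), "10.0.0.5")
print(len(frame))
```

Every migrated VM produces at least one such broadcast, so the number of these frames grows with both the VM count and the migration rate.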

Multi-tenancy and Security

Owing to the high costs associated with building and operating a data center, many IT organizations are moving to a multi-tenant model where different departments or even different companies (in the cloud) share a common infrastructure of virtualized resources. Data protection and security are critical needs in multi-tenant environments, which require logical isolation of resources without dedicating physical resources to any customer.

The control plane must, therefore, provide secure access to data center resources and be able to change the security posture dynamically during VM migrations. The control plane may also need to implement customer-specific policies and Quality of Service (QoS) levels.
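A minimal Python sketch of this idea follows: policy is bound to the VM rather than to the physical host, so isolation and QoS settings follow the VM when it migrates. The `Policy` and `ControlPlane` classes, the tenant names, and the QoS labels are all hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    tenant: str
    qos_class: str   # e.g. "gold" or "best-effort" (hypothetical labels)


class ControlPlane:
    def __init__(self):
        self.policy_by_vm = {}   # VM name -> Policy
        self.host_by_vm = {}     # VM name -> current physical host

    def attach(self, vm: str, host: str, policy: Policy) -> None:
        self.policy_by_vm[vm] = policy
        self.host_by_vm[vm] = host

    def migrate(self, vm: str, new_host: str) -> None:
        # Only the location changes; the policy stays bound to the VM.
        self.host_by_vm[vm] = new_host

    def may_communicate(self, vm_a: str, vm_b: str) -> bool:
        # Logical isolation: traffic is allowed only within one tenant,
        # even when VMs of different tenants share a physical host.
        return self.policy_by_vm[vm_a].tenant == self.policy_by_vm[vm_b].tenant


cp = ControlPlane()
cp.attach("web-1", "host-a", Policy("tenant-a", "gold"))
cp.attach("db-1", "host-b", Policy("tenant-a", "gold"))
cp.attach("web-9", "host-b", Policy("tenant-b", "best-effort"))

cp.migrate("web-1", "host-b")   # web-1 now shares host-b with tenant-b's VM
print(cp.may_communicate("web-1", "db-1"), cp.may_communicate("web-1", "web-9"))
```

After the move, `web-1` shares a host with another tenant's VM, yet it can still reach only its own tenant's resources and keeps its original QoS class.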

Service Level Agreements and Resource Metering

The network-as-a-service paradigm requires active resource metering to ensure SLAs are maintained. Resource metering through the collection of network statistics is useful for calculating return on investment, and evaluating infrastructure expansion and upgrades, as well as for monitoring SLAs.

The network monitoring tasks are currently spread across the hypervisor, legacy management tools, and some newer infrastructure monitoring tools. Collecting and consolidating this management information adds further complexity to the control plane for both the data center operator and multi-tenant enterprises.
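The consolidation step can be sketched in Python as follows. The example merges per-tenant packet counters from two monitoring sources and flags tenants whose packet loss exceeds an agreed limit. The counter names, tenant names, and loss thresholds are assumptions for illustration, and the sketch assumes the sources observe disjoint traffic so that summing does not double-count.

```python
from collections import defaultdict


def consolidate(*sources: dict) -> dict:
    """Sum per-tenant 'sent' and 'dropped' packet counters across sources."""
    totals = defaultdict(lambda: {"sent": 0, "dropped": 0})
    for source in sources:
        for tenant, counters in source.items():
            totals[tenant]["sent"] += counters.get("sent", 0)
            totals[tenant]["dropped"] += counters.get("dropped", 0)
    return dict(totals)


def sla_violations(totals: dict, max_loss_ratio: dict) -> list:
    """Return tenants whose measured loss ratio exceeds their SLA limit."""
    violations = []
    for tenant, limit in max_loss_ratio.items():
        counters = totals.get(tenant)
        if not counters or counters["sent"] == 0:
            continue   # nothing measured for this tenant in the interval
        if counters["dropped"] / counters["sent"] > limit:
            violations.append(tenant)
    return sorted(violations)


# Hypothetical readings from two of the tools mentioned above.
hypervisor_stats = {
    "tenant-a": {"sent": 1000, "dropped": 1},
    "tenant-b": {"sent": 500, "dropped": 0},
}
legacy_tool_stats = {
    "tenant-a": {"sent": 1000, "dropped": 0},
    "tenant-b": {"sent": 500, "dropped": 20},
}

totals = consolidate(hypervisor_stats, legacy_tool_stats)
violating = sla_violations(totals, {"tenant-a": 0.001, "tenant-b": 0.001})
print(violating)
```

In practice the sources report in different formats and at different intervals, and normalizing them is much of the added control plane complexity.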

The next article in the series will examine two ways of scaling the control plane to accommodate these additional packet processing requirements in virtualized data centers.

Industry Perspectives is a content channel at Data Center Knowledge highlighting thought leadership in the data center arena.