The promise of hyperconverged infrastructure is for data center hardware to include the provisioning tools needed to make workloads run across the entire platform, no matter how big it eventually gets. If hyperconvergence ends up winning the data center, it will be because open source alternatives did not step up to the plate in time.

With announcements of the latest innovations to VMware’s venerable virtualization platform just one week away, and with OpenStack becoming the focus of much of Red Hat’s development efforts, the question gets asked once again: Will Red Hat adjust its strategy for its enterprise virtualization platform, this time to keep up with the advances in hyperconvergence?

Wednesday morning brought Red Hat’s latest answer, in what has nearly become an annual event for the company: For version 4 of Red Hat Virtualization, the word “Enterprise” is dropped from the platform’s name, and its mission shifts toward establishing a single base layer of software-defined infrastructure for customers’ data centers.

“In this modern data center there shouldn’t be multiple software-defined infrastructures,” Scott Herrod, product manager for Red Hat Virtualization, told Data Center Knowledge. “There should be one, and Red Hat is trying to consolidate and unify that. So whether your workloads are running in Red Hat Virtualization, OpenStack, or Atomic Host and the OpenShift platform, you don’t need to worry about differences in the infrastructure and having to change policies and procedures around how you effectively manage that infrastructure.”

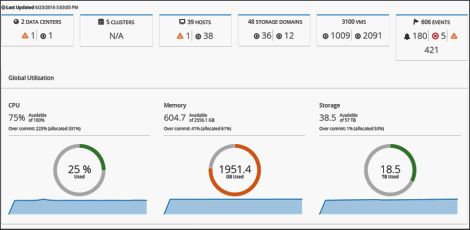

Put another way, Red Hat aims to produce a single control point for administering software-defined infrastructure (SDI): the compute, storage, and network bandwidth resources used to spin up virtual workloads on private and hybrid clouds. In time, Herrod explained, the company wants to provide a unified control center for managing OpenStack, OpenShift, and KVM hypervisor-driven workloads.

But accomplishing that means removing the separations between the software-defined networks established by all these platforms, so that they effectively run on the same layer. That’s not an easy task, and as Herrod admits, RHV 4 (we’d better get used to it without the “E”) is only at the start of this journey.

Neutron Bomb

With previous editions of RHEV, Red Hat had been tinkering with experimental support for Neutron, OpenStack’s native networking component — specifically with building SDN bridges between the two platforms, as well as with Open vSwitch for hypervisor-style virtualization. But that methodology required fully embracing Neutron. In turn, explained Herrod, that mandated that RHEV customers support at least half of the rest of OpenStack, including the Keystone authentication component and a message queue such as OpenStack’s Marconi.

“It was unfriendly to the traditional virtualization user, and we got a lot of push-back,” Herrod admitted. While Neutron had the benefit of being a shiny new technology that generated a lot of interest, compelling the vendors who support RHEV to produce software drivers that addressed these intricate bridges proved “counter-productive.”

What’s more, certain customers in the financial sector (he would not name names, but we got clues that their Fortune 500 rankings were in the low double digits) refused to deploy infrastructure that mandated multiple logical networking layers.

So RHV 4 will continue to support Neutron as an option, because that’s the path that RHEV started paving. But Herrod was emphatic that RHEV’s customers were soundly rejecting the stratification of the data center into multiple layers. As the company’s documentation for version 3 explained it, virtual machines had virtual network interface cards (vNICs), so one layer connected them all. But another layer had to be designated for logical devices that were not VMs, and did not have vNICs or virtual bridges associated with them. Then there were cluster layers to manage the images of the networks that data center managers actually saw, and data center layers to converge the cluster layers.

This list didn’t even include the separate layer that OpenShift PaaS users thought they required for managing Docker containers, or the Kubernetes network above that. Do customers want all of these layers? “The answer is a resounding ‘No,’” said Herrod.

The Shim Layer

With RHV 4, he said, Red Hat has constructed a new shim layer for customers who use any kind of third-party platform where overlay networks enter the picture. Docker, Kubernetes, and overlays such as Weave come to mind on the software side, along with Cisco’s ACI, Nuage Networks, and Midokura.

“What we can do within Red Hat Virtualization,” Herrod explained, “without having to write specific drivers for every vendor or every vendor having to explicitly write code to integrate with RHV, we’re able to take that API call and translate that into the Open vSwitch configuration that’s required to deliver the overlay networks, based on the external control planes. We have a common layer to interface with our API, as well as with the Neutron API; and they can consolidate their software-defined networking using open, proprietary, or third-party SDN technologies.”
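Herrod did not describe the shim’s internals. As a rough illustration of the general idea, translating a network request from an external control plane into Open vSwitch configuration, the Python sketch below turns a hypothetical overlay-network request into `ovs-vsctl` command lines. The `OverlayRequest` type, the `br-int` bridge name, the port-naming scheme, and the mapping itself are assumptions made for illustration, not RHV’s actual implementation; only the `ovs-vsctl` command syntax is real.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class OverlayRequest:
    """Hypothetical request from an external SDN control plane (illustrative only)."""
    network_name: str
    tunnel_type: str      # e.g. "vxlan", "geneve", or "gre"
    segment_id: int       # tunnel key / virtual network identifier
    remote_ips: List[str]  # tunnel endpoints on other hosts


def to_ovs_commands(req: OverlayRequest, bridge: str = "br-int") -> List[str]:
    """Translate one overlay request into ovs-vsctl command lines.

    A sketch under stated assumptions: it only builds command strings;
    it does not execute them or talk to any real controller.
    """
    if req.tunnel_type not in ("vxlan", "geneve", "gre"):
        raise ValueError(f"unsupported tunnel type: {req.tunnel_type}")
    # Create the integration bridge only if it does not already exist.
    commands = [f"ovs-vsctl --may-exist add-br {bridge}"]
    for i, remote in enumerate(req.remote_ips):
        # Hypothetical naming scheme: short, unique per segment and endpoint.
        port = f"tun{req.segment_id}x{i}"
        # One tunnel port per remote endpoint, tagged with the segment key.
        commands.append(
            f"ovs-vsctl --may-exist add-port {bridge} {port} "
            f"-- set interface {port} type={req.tunnel_type} "
            f"options:remote_ip={remote} options:key={req.segment_id}"
        )
    return commands


if __name__ == "__main__":
    request = OverlayRequest("tenant-a", "vxlan", 5001, ["192.0.2.10", "192.0.2.11"])
    for cmd in to_ovs_commands(request):
        print(cmd)
```

The point of the design is that the vendor-facing side only has to describe the overlay (type, key, endpoints), while the translation into switch configuration happens in one common place, which is the property Herrod attributes to the shim layer.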

It’s not a universal layer as of yet, he admits. There’s work underway to reach out to OpenDaylight and OVN, he said, to present open source alternative methodologies for integrating proprietary network components and appliances.

Herrod's team is also looking for opportunities to apply a similar shim-layer concept to storage. Up to now, RHEV had been using Cinder, the OpenStack component for persistent block storage, especially for large database volumes. But last October’s acquisition of IT automation company Ansible is making it feasible for Red Hat’s forthcoming single management layer to orchestrate and automate a single storage plane “to help avoid storage reconfiguration, and some of the complexity behind it.” For now, that project is in the experimental phase.

But Red Hat can’t afford for these experiments to remain in the laboratory for too long, as server makers — most notably HPE, Cisco, Dell, and Lenovo with Nutanix — are quickly gaining ground in the race to secure the lowest layer of the hyperconverged data center.
