As with energy consumption, growth in US data center water consumption has slowed over roughly the past decade.

A recent US government study attempted, for the first time, to quantify the water consumption of all data centers in the country. The study focuses primarily on data center energy consumption, but it also uses its electricity consumption estimates to extrapolate the amount of water it takes to power and cool those facilities.

Water is one of the two major resources data centers consume (the other is energy), and this fact drew a lot of public attention last summer, as the drought in California grew especially acute. Thanks to this past winter’s El Niño, water levels in the state’s reservoirs are higher than they have been in years, but the drought continues, and water consumption by the state’s various industries, including the high-tech industry, remains an important issue.

The data center industry is far from being California’s biggest water consumer. In 2010, the biggest portion of daily water withdrawals (about 61 percent) was used for irrigation, according to the most recent data available from the US Geological Survey.

Generation of electricity, however, is a major water consumer, and data centers use a lot of electricity. In 2014, data centers were responsible for 2 percent of all electricity consumed in the US, according to the recent government study.

In 2010, the second-largest portion of daily water withdrawals in California (about 17 percent) went to thermoelectric power generation, according to USGS. While data centers consume relatively little water directly – compared to farming or other industries – the water it takes to generate electricity to power them has to be taken into account as well.

The study, whose results were published in a report in June, was conducted by the US Department of Energy’s Lawrence Berkeley National Laboratory, in collaboration with researchers from Stanford University, Northwestern University, and Carnegie Mellon University.

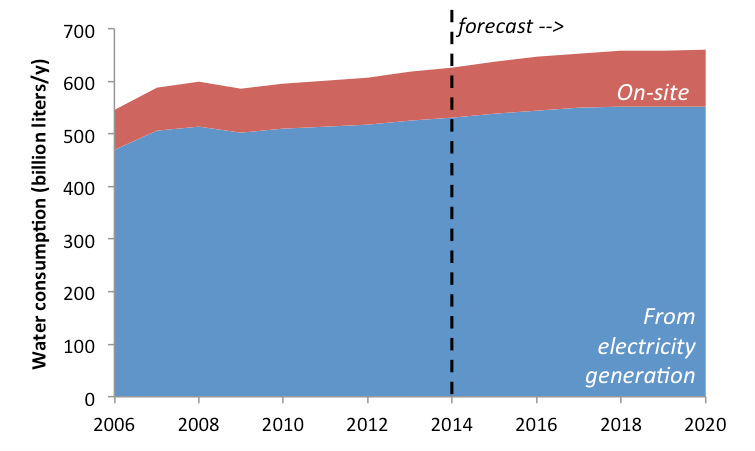

As the report points out, far more water is used to generate the electricity that powers data centers than to cool them. On average, it takes about 7.6 liters of water to generate 1 kWh of electricity in the US, while an average data center uses 1.8 liters of water for every kWh it consumes, according to the researchers.
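To make the two averages concrete, here is a minimal back-of-the-envelope sketch in Python. It simply multiplies the per-kWh figures quoted above by an energy total. The function name `water_footprint_liters` and the 10 million kWh facility are illustrative assumptions, not something taken from the report, and the report's own model is more detailed than a flat average.

```python
# Average intensities quoted above from the LBNL report (liters per kWh).
INDIRECT_L_PER_KWH = 7.6  # water used to generate the electricity
DIRECT_L_PER_KWH = 1.8    # water used on site, mainly for cooling


def water_footprint_liters(energy_kwh):
    """Return (direct, indirect, total) water use in liters for a given energy use."""
    direct = DIRECT_L_PER_KWH * energy_kwh
    indirect = INDIRECT_L_PER_KWH * energy_kwh
    return direct, indirect, direct + indirect


# Hypothetical facility drawing 10 million kWh a year (illustrative, not from the report).
direct, indirect, total = water_footprint_liters(10_000_000)
indirect_share = indirect / total

print(f"direct: {direct:,.0f} L, indirect: {indirect:,.0f} L, total: {total:,.0f} L")
print(f"indirect share of total: {indirect_share:.0%}")
```

Under these averages, every kWh carries a combined footprint of about 9.4 liters, and roughly 81 percent of that is water used at the power plant rather than at the data center.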

Combined, US data centers were responsible for consumption of 626 billion liters of water in 2014, which includes both water consumed directly at data center sites and water used to generate the electricity that powered them that year. The researchers expect this number to reach 660 billion liters in 2020:

Direct versus indirect US data center water consumption. Source: United States Data Center Energy Usage Report, LBNL, 2016
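For a sense of scale, the projected change from 626 billion liters in 2014 to 660 billion liters in 2020 can be turned into a growth rate. The sketch below assumes smooth year-over-year compounding between the two endpoints, which is a simplification for illustration and not a claim the report makes. The helper name `compound_annual_growth_rate` is hypothetical.

```python
# Totals quoted above, in billions of liters.
WATER_2014_BL = 626.0
WATER_2020_BL = 660.0


def compound_annual_growth_rate(start, end, years):
    """Constant yearly growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1 / years) - 1


absolute_increase = WATER_2020_BL - WATER_2014_BL   # billions of liters
total_growth = absolute_increase / WATER_2014_BL    # fraction over the whole period
cagr = compound_annual_growth_rate(WATER_2014_BL, WATER_2020_BL, 2020 - 2014)

print(f"increase: {absolute_increase:.0f} billion liters ({total_growth:.1%} overall)")
print(f"implied average annual growth: {cagr:.2%}")
```

Read this way, the projection amounts to an increase of 34 billion liters, a bit over 5 percent in six years, or just under 1 percent a year on average.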

The government’s estimates rely on averages because water consumption per kWh varies greatly from one data center site to another, depending on the climate and the type of cooling system used. The same is true of the water consumption associated with energy generation: different generation sources use different amounts of water, and some power plants are more efficient than others.

The estimates take into account water losses at thermoelectric and hydroelectric plants, as well as losses at data center cooling towers due to drift and blowdown. They also assume that IT closets and IT rooms don’t consume water, because they are cooled by direct-expansion systems or air-cooled chillers.

Trends in data center water consumption between 2006 and 2020 closely mirror the trends in data center energy consumption described in the report, which isn’t surprising, since energy consumption was used to calculate water consumption. As with energy, data center water consumption has been growing at a slower rate than it did before 2007.

And, as with energy, hyperscale data centers built by internet and cloud giants like Google, Facebook, Microsoft, and Amazon will be responsible for a progressively bigger portion of the industry’s total water consumption as they come to make up a larger share of total data center capacity.

Total US data center water consumption by space type. Source: United States Data Center Energy Usage Report, LBNL, 2016

Read the full DOE report here.