Most of the time, "underwater" and "data center" appear together only in a sentence about the financial condition of a failed company, not about computers actually submerged in liquid. Yet Microsoft has drawn a great deal of attention with the experiment it publicized in January, which put a “capsule” containing computers 30 feet underwater for 105 days. People appear to be fascinated with the idea of underwater data centers, an idea that conjures up images from Jules Verne's Twenty Thousand Leagues Under the Sea.

Don’t get me wrong, I like the boldness of the idea and the innovation required to tackle Project Natick. But since we’re in the election season in the US, let’s do some fact checking to see whether this idea can do more than tread water.

The virtues proposed by Microsoft researchers and industry analysts include reduced cooling costs; the ability to use clean, renewable tidal energy; lower latency and better application performance for the 50 percent of the world’s population that lives within 200km of the ocean; and reduced deployment time of mass-produced capsules, from years to weeks.

So what are the issues that have not been addressed so far?

People Hate Putting Things in the Water

Americans, at least, seem to have a hard time accepting companies putting things in the water near the coast, delaying implementations by years while special interests argue the virtues and detriments.

One of the most extreme examples is the Cape Wind Project, proposing wind turbines 10 miles off the Massachusetts shore. The project’s first proposal came in 2001, 15 years ago, with everyone from business operators to celebrities opposing it at every step.

On the West Coast, plans for Seattle’s first tidal-energy project were scrapped in January 2016 after the Snohomish County Public Utility District invested eight years and at least $3.5 million in developing it. These types of delays are commonplace when anybody proposes deploying anything in the ocean or rivers.

Environmental Impact

On the good side of the environmental discussion, Microsoft’s engineers noted that sea life around the capsule quickly adapted to its presence. The artificial reef aspect of an underwater cluster of capsules could be beneficial, providing habitat for sea creatures in the local area.

But what about the warming of the area around the capsules? Microsoft’s video on Project Natick said the 10-foot by eight-foot cylinder was consuming 27 kilowatts of power during the tests. For the sake of argument, we’ll assume the production version of the capsule is three times larger, or 14 feet in diameter and 10 feet high. Each capsule would have room for three of the racks used in Project Natick and would reject about 80kW of heat.
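As a rough check on that scaling assumption, the sketch below treats both vessels as plain cylinders and compares their volumes. It assumes the test capsule's "10 foot by eight foot" means 10 feet long and eight feet in diameter, which the source does not spell out; the function and variable names are illustrative only.

```python
import math

def cylinder_volume_ft3(diameter_ft, length_ft):
    """Volume of a simple cylinder in cubic feet."""
    return math.pi * (diameter_ft / 2) ** 2 * length_ft

# Assumption: the test capsule is 8 ft in diameter and 10 ft long.
test_volume = cylinder_volume_ft3(diameter_ft=8, length_ft=10)

# Hypothetical production capsule from the article: 14 ft diameter, 10 ft high.
production_volume = cylinder_volume_ft3(diameter_ft=14, length_ft=10)

# Ratio of volumes; with equal lengths this reduces to (14/8)^2.
scale = production_volume / test_volume

# Heat rejected if power draw scales with three racks instead of one.
test_power_kw = 27
production_heat_kw = test_power_kw * 3

print(f"Volume ratio: {scale:.2f}")
print(f"Heat per production capsule: {production_heat_kw} kW")
```

Under these assumptions the volume ratio comes out very close to three, and three racks at 27kW each give 81kW, which the article rounds to 80kW.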

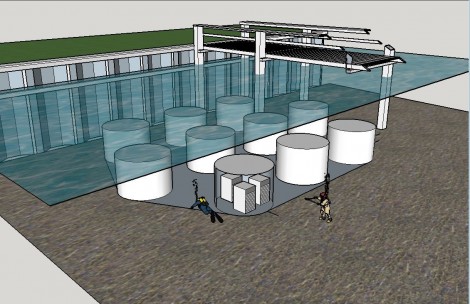

Rendering of what a production deployment of Microsoft's Project Natick may look like (Credit: Mark Monroe)

Capsules placed on the ocean floor in an open pattern would allow water to flow around them, drawing away the waste heat. Ten capsules would throw off 800kW of heat, or 800kWh of thermal energy every hour, and might raise the temperature of the water around them by about 0.7C every hour if the water is stagnant. The walls of the capsules will be warmer than the surroundings, which will create microclimates around the vessels and may attract unexpected species.
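The 0.7C figure depends on how much stagnant water is assumed to absorb the heat, which the article does not state. The sketch below back-solves for that volume using approximate seawater properties (a specific heat of about 3,990 J/kg·K and a density of about 1,025 kg/m³, both assumptions rather than figures from the article); the names are illustrative.

```python
# Assumed, approximate seawater properties (not from the article).
SEAWATER_CP_J_PER_KG_K = 3990.0
SEAWATER_DENSITY_KG_PER_M3 = 1025.0

HEAT_KW = 800.0          # ten capsules at about 80 kW each
SECONDS_PER_HOUR = 3600.0

# Energy released in one hour, in joules (1 kW = 1000 J/s).
energy_j = HEAT_KW * 1000.0 * SECONDS_PER_HOUR

def temp_rise_c(volume_m3):
    """Hourly temperature rise of a stagnant, well-mixed volume of seawater."""
    mass_kg = volume_m3 * SEAWATER_DENSITY_KG_PER_M3
    return energy_j / (mass_kg * SEAWATER_CP_J_PER_KG_K)

def volume_for_rise_m3(delta_t_c):
    """Volume of seawater that would warm by delta_t_c in one hour."""
    mass_kg = energy_j / (SEAWATER_CP_J_PER_KG_K * delta_t_c)
    return mass_kg / SEAWATER_DENSITY_KG_PER_M3

implied_volume = volume_for_rise_m3(0.7)
rise_in_1000_m3 = temp_rise_c(1000.0)

print(f"Implied volume for 0.7C/hour: {implied_volume:.0f} m^3")
print(f"Rise in 1,000 m^3: {rise_in_1000_m3:.2f} C/hour")
```

With these assumed properties, a 0.7C rise per hour corresponds to roughly 1,000 cubic meters of stagnant water, about a 10-meter cube. Any real current would spread the heat over far more water and reduce the rise accordingly.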

Tidal Power

According to a New York Times article, “the project [could] harvest electricity from the movement of seawater. This could mean that no new energy is added to the ocean and, as a result, there is no overall heating.” True, the First Law of Thermodynamics says the overall balance of energy in the ocean would stay the same, but energy would be converted from the mechanical energy of moving water to the thermal energy of waste heat, so the water around the capsule farm would likely register some increase in temperature.

And what happens at slack tide if the underwater data centers are running on tidal energy? At each turn of the tide, the kinetic energy of the moving water would dwindle to near zero, and the installation would have to be energized by more conventional power sources.

Modular Advantage

Microsoft also estimated they could mass-produce the capsules and deploy them within 90 days of demand for capacity. Land-based modular data centers have been in production since Sun’s Project BlackBox in 2006 and are well established in their ability to deliver capacity in a short time. No advantage over land-based deployment there.

The cost of water-tight pressure vessels is likely 10 to 20 times higher than Microsoft’s ITPAC containers, and the cost of placing capsules underwater is much higher than the equivalent operation in Cheyenne or Northlake.

The company H2OMES makes underwater domiciles and estimates the installed cost of its product at over $3,100 per square foot. Compare that with the average US home at $129 per square foot, and underwater construction comes out 24 times more expensive than land-based construction. Not compelling.

No Time Soon

There are other concerns: cost of operations, security, connectivity, power, and the operation of sensitive cooling equipment in a harsh saltwater environment. The reality is we will not see underwater data centers for a very long time, if ever. We’ve spent the last 15 years aggregating compute resources into big, efficient facilities that can be located anywhere the speed of light can reach, and that will be the method, combined with land-based edge centers, for the foreseeable future.

About the author: Mark Monroe is president at Energetic Consulting. His past endeavors include executive director of The Green Grid and CTO of DLB Associates. His 30 years’ experience in the IT industry includes data center design and operations, software development, professional services, sales, program management, and outsourcing management. He works on sustainability advisory boards with the University of Colorado and local Colorado governments, and is a Six Sigma Master Black Belt.