Facebook wants to replicate the success of its open source server design efforts in hardware for artificial intelligence. The social network announced it plans to contribute to the Open Compute Project the designs of the latest generation of custom high-performance servers it uses to build neural networks. Open Compute is the open source hardware initiative that Mark Zuckerberg claimed last year had saved the company $1.2 billion.

Facebook’s new AI hardware is powered by GPUs, processors originally built to render graphics but increasingly used in high-performance computing. The company’s latest AI server, called Big Sur, packs eight of Nvidia’s Tesla GPUs, each consuming up to 300 watts.

Big Sur is twice as fast as Facebook’s previous-generation AI servers. Neural networks learn by processing large amounts of training data. To recognize an object in an image, for example, a neural network has to analyze many different images containing that object, and the more of them it gets to “see,” the better it becomes at recognizing it. Spreading this kind of “training” across eight GPUs enables Facebook to build neural networks twice the scale and speed of the networks it built on its older off-the-shelf hardware, Facebook engineers Kevin Lee and Serkan Piantino wrote in a blog post.

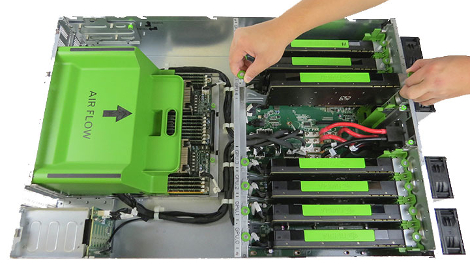

Facebook's Big Sur server for artificial intelligence (Image courtesy of Facebook)

GPU-assisted architectures are increasingly common in supercomputers. The presence of GPUs on Top500, the list of the world’s fastest supercomputers, has been growing steadily. More than half of all systems on the latest list – published last month – relied on Nvidia GPUs as co-processors. That’s up from less than 15 percent one year ago.

Big Sur servers are compatible with Open Rack, the custom server rack Facebook designed and contributed to the Open Compute Project. The AI server can also run in the company’s regular data centers. That is unusual for high-performance computers, which often need specialized high-capacity cooling systems that frequently use liquid rather than air as the heat-exchange medium.

Big Sur is designed for easy serviceability: all individual parts except the CPU heat sinks can be replaced without tools. (Image courtesy of Facebook)

Facebook saves on infrastructure through Open Compute in multiple ways. Because the hardware is “vanity-free” and optimized for the company’s applications, it is cheaper. And because the design is open source, multiple suppliers, including traditional vendors such as HP Enterprise and original design manufacturers such as Quanta or Hyve, compete for Facebook’s business on price alone, since they all build the same design.

The company hasn’t open sourced Big Sur yet, but Lee and Piantino wrote that it plans to submit the design materials to OCP. Facebook has been open sourcing its AI software code and publishing papers on its discoveries in the field.