Charles Doughty is Vice President of Engineering, Iron Mountain, Inc.

We’re all familiar with Moore’s Law, which states that the number of transistors on integrated circuits doubles approximately every two years. Whether we measure transistor growth, magnetic disk capacity, the square law of price to speed versus computations per joule, or any other measurement, one fact persists: they’re all increasing and doing so exponentially. This growth is the cause of the density issues plaguing today’s data centers. Simply put, more powerful computers generate more heat, which results in significant additional cooling costs each year.

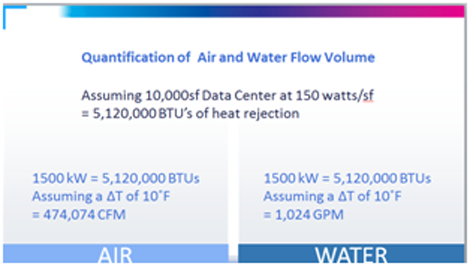

Today, a 10,000 square-foot data center running at about 150 watts per square foot costs roughly $10 million per megawatt to construct, depending on location, design and cost of energy. If the approximately 15 percent rate of data growth of the last decade continues over the next decade, that same data center would cost $37 million per megawatt. A full 30 percent of these costs are related to the mechanical challenges of cooling a data center. While the industry is experienced with the latest chilled water systems and high-density cooling, most organizations aren’t aware that Mother Nature can deliver the same results for a fraction of the cost.
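For readers who want to check the compounding behind that projection, here is a rough sketch. It assumes the 15 percent figure is an annual rate and that construction cost per megawatt scales directly with data growth; neither assumption is stated outright above, and the function names are purely illustrative. Under those assumptions, exactly 15 percent over ten years gives a little over $40 million, while the $37 million figure corresponds to a rate of roughly 14 percent, which fits the "approximately 15 percent" wording.

```python
# Compound-growth sketch for construction cost per megawatt.
# Assumptions (not from the article): growth compounds annually and
# cost scales one-to-one with data growth. Names are illustrative.

def projected_cost(cost_today: float, annual_growth: float, years: int) -> float:
    """Cost after `years` of compounding at `annual_growth` (0.15 means 15%)."""
    return cost_today * (1.0 + annual_growth) ** years


def implied_growth(cost_today: float, cost_future: float, years: int) -> float:
    """Annual growth rate that turns cost_today into cost_future over `years`."""
    return (cost_future / cost_today) ** (1.0 / years) - 1.0


COST_TODAY = 10.0    # $ million per MW, from the article
COST_FUTURE = 37.0   # $ million per MW, from the article
YEARS = 10

at_15_percent = projected_cost(COST_TODAY, 0.15, YEARS)
rate_for_37 = implied_growth(COST_TODAY, COST_FUTURE, YEARS)
cooling_share = 0.30 * COST_FUTURE  # 30% of cost attributed to cooling

print(f"Cost at exactly 15%/yr for {YEARS} years: ${at_15_percent:.1f}M per MW")
print(f"Growth rate implied by $37M: {rate_for_37:.1%} per year")
print(f"Cooling-related share of $37M: ${cooling_share:.1f}M per MW")
```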

The Efficiency Question: Air vs. Water

Most traditional data centers rely on air to cool the facility directly. When we analyze the heat transfer formulas, it turns out water is considerably more efficient at cooling a data center, and the difference is in the math, namely the denominator:
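As a sketch, and assuming the standard HVAC rules of thumb for sensible heat (they are consistent with the numbers quoted in this article, though the original presentation may have differed), the two formulas can be written as:

$$
\text{CFM} = \frac{Q}{1.08 \times \Delta T}
\qquad\qquad
\text{GPM} = \frac{Q}{500 \times \Delta T}
$$

Here $Q$ is the heat to be rejected in BTUs per hour and $\Delta T$ is the temperature rise of the cooling medium in degrees Fahrenheit. The numerators are identical; only the constants in the denominators differ, 1.08 for air and 500 for water.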

With the example above, the energy consumed by the 10,000 square-foot data center creates over 5 million BTUs per hour of heat rejection. Using the formulas in the figures above and assuming a standard delta T of 10 degrees, this data center would require more than 470,000 cubic feet per minute (CFM) of air for cooling, but only about 1,000 gallons of water per minute. The system would need between 150 and 200 horsepower to convey that many cubic feet of air per minute, but only 50 to 90 horsepower to convey 1,000 gallons per minute, which makes water roughly 462 times more efficient! Analyzed on a per cubic foot basis, one cubic foot of air to one cubic foot of water, water is actually about 3,400 times more efficient than air.
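The arithmetic is easy to reproduce. The sketch below assumes the usual rule-of-thumb constants (3.412 BTU/hr per watt, 1.08 for air, 500 for water, 7.48 gallons per cubic foot); these constants and the function names are assumptions chosen because they match the figures quoted here, not values taken from the original figures.

```python
# Air vs. water flow needed to reject the same heat load.
# Constants are common HVAC rules of thumb (assumed, not from the article).

BTU_PER_HR_PER_WATT = 3.412      # 1 W is about 3.412 BTU/hr
AIR_FACTOR = 1.08                # BTU/hr per (CFM x deg F), standard air
WATER_FACTOR = 500.0             # BTU/hr per (GPM x deg F), water
GALLONS_PER_CUBIC_FOOT = 7.48


def heat_load_btu_per_hr(area_sqft: float, watts_per_sqft: float) -> float:
    """Heat to reject, assuming all electrical power ends up as heat."""
    return area_sqft * watts_per_sqft * BTU_PER_HR_PER_WATT


def air_cfm(heat_btu_per_hr: float, delta_t_f: float) -> float:
    """Cubic feet of air per minute needed at the given temperature rise."""
    return heat_btu_per_hr / (AIR_FACTOR * delta_t_f)


def water_gpm(heat_btu_per_hr: float, delta_t_f: float) -> float:
    """Gallons of water per minute needed at the given temperature rise."""
    return heat_btu_per_hr / (WATER_FACTOR * delta_t_f)


heat = heat_load_btu_per_hr(10_000, 150)   # the article's example facility
cfm = air_cfm(heat, 10)                    # delta T of 10 degrees
gpm = water_gpm(heat, 10)

# Ratio of the two denominators: heat carried per GPM vs. per CFM.
ratio = WATER_FACTOR / AIR_FACTOR
# Same comparison volume for volume (one cubic foot of each per minute).
per_cubic_foot = ratio * GALLONS_PER_CUBIC_FOOT

print(f"Heat load: {heat:,.0f} BTU/hr")
print(f"Air required: {cfm:,.0f} CFM")
print(f"Water required: {gpm:,.0f} GPM")
print(f"Water vs. air, GPM against CFM: {ratio:.0f}x")
print(f"Water vs. air, per cubic foot: {per_cubic_foot:,.0f}x")
```

Note that the factor of roughly 462 falls straight out of the two denominators (500 divided by 1.08), and multiplying by 7.48 gallons per cubic foot gives the volume-for-volume figure of roughly 3,400.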

Physics 101: The Thermodynamics of the Underground

However, for an underground data center, there’s more at work. In a subterranean environment, Mother Nature gives you a consistent ambient temperature of 50 degrees. (So to begin with, you don’t have to depend on cooling systems as much since it is cool to start. Then you can get further efficiencies by using an underground water source or aquifer.)

The ideal environment for a subterranean data center is made of aquifers, meaning stone with open porosity such as basalt, limestone and sandstone; aquicludes, such as dense shales and clays, will not work as effectively. In a limestone subterranean environment, heat rejection can increase from 4 to 500 percent because of the natural heat sink characteristics of the stone. The most appealing implication here is that the stone can manage the energy fluctuations and peaks inherent to any data center. (The limestone absorbs heat, which further reduces the need for cooling.)

As the water system funnels 50-degree water from the aquifer to cool the data center, the heat is rejected into the water, which is then funneled back about 10 degrees warmer. Mother Nature deals with that heat by obeying the second law of thermodynamics, which governs equilibrium and the transfer of energy. For the subterranean data center operator, this means working within the conductivity of the surrounding rock. It is therefore important to know the lithology and geology of the local strata, and to understand the effects of a continuous natural water flow and the psychrometric properties of air.

The Cost of Efficiency

Of course, there are other data center cooling strategies in use aside from the subterranean lake designs, including well systems, well point systems and buried pipe systems, to name a few. Right now, well systems are being used in Eastern Pennsylvania to cool nuclear reactors producing hundreds of megawatts of power with mine water. Well point systems are generally used in residential applications, but the concept doesn't scale well without becoming prohibitively expensive. Buried pipe systems are used quite a bit and require digging a series of trenches backfilled with a relatively good conductive granular material, but beyond 20-30 kilowatts, this method does not scale well.

How much cost does each of these methods incur? An underground geothermal lake design will cost less than $500 per ton, while well-designed chilled water systems range from $2,000 to $4,000 a ton. The discrepancy in cost is created by the mechanics: in a geothermal lake, there are no mechanics, and water is simply pumped at grade. Well and buried pipe systems can cost more than $5,000 a ton, and these systems do not scale very well.
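To put those per-ton figures in context, the sketch below applies them to the 1.5-megawatt example facility used earlier (10,000 square feet at 150 watts per square foot). It assumes the standard definition of a ton of cooling as 12,000 BTU per hour and treats the article's "less than" and "more than" figures as simple bounds; the dictionary labels are illustrative.

```python
# Rough capital-cost comparison for the article's example facility.
# A "ton" of cooling is taken as 12,000 BTU/hr (standard definition).

BTU_PER_HR_PER_WATT = 3.412
BTU_PER_HR_PER_TON = 12_000


def cooling_tons(load_watts: float) -> float:
    """Tons of cooling needed to reject `load_watts` of heat."""
    return load_watts * BTU_PER_HR_PER_WATT / BTU_PER_HR_PER_TON


# Cost per ton in dollars, taken from the article's ranges.
COST_PER_TON = {
    "geothermal lake (upper bound)": 500,
    "chilled water (low end)": 2_000,
    "chilled water (high end)": 4_000,
    "well or buried pipe (lower bound)": 5_000,
}

load_watts = 10_000 * 150          # 1.5 MW example facility
tons = cooling_tons(load_watts)
costs = {name: tons * rate for name, rate in COST_PER_TON.items()}

print(f"Cooling required: {tons:,.1f} tons")
for name, cost in costs.items():
    print(f"{name}: ${cost:,.0f}")
```

On these assumptions the facility needs a little over 400 tons of cooling, so the per-ton gap translates into a difference of well over a million dollars between a geothermal lake and a high-end chilled water plant.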

By understanding Mother Nature and using her forces to our advantage, we can increase the capacity and further improve on the effectiveness of the geothermal lake design. By drilling a borehole from the surface into the cavern, air transfer mechanisms can easily be incorporated; anytime the air at the surface is at or below 50°, that cool air will drop into the mine. Even without motive force or air handling units, a four to five foot borehole can contribute about 30,000 cubic feet of air per minute! If an air handling unit is added, the 30,000 CFM of natural flow can easily become 100,000-200,000 CFM. What was a static geothermal system is now a dynamic geothermal cooling system with incredible capacity at minimal incurred cost.
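How much cooling is that airflow worth? A rough estimate can be made with the same sensible-heat rule of thumb used for air earlier (1.08 BTU/hr per CFM per degree Fahrenheit). The 10-degree temperature rise below is an assumption carried over from the air-versus-water example, not a measured value for a borehole, and the real figure depends on surface conditions; the function name is illustrative.

```python
# Sensible cooling capacity of borehole airflow (rough estimate).
# The 10 deg F temperature rise is an assumed value, not from the article.

AIR_FACTOR = 1.08               # BTU/hr per (CFM x deg F), standard air
BTU_PER_HR_PER_TON = 12_000
BTU_PER_HR_PER_KW = 3_412


def borehole_capacity(cfm: float, delta_t_f: float) -> dict:
    """Heat the airflow can absorb, expressed in BTU/hr, tons and kW."""
    btu_per_hr = AIR_FACTOR * cfm * delta_t_f
    return {
        "btu_per_hr": btu_per_hr,
        "tons": btu_per_hr / BTU_PER_HR_PER_TON,
        "kw": btu_per_hr / BTU_PER_HR_PER_KW,
    }


ASSUMED_DELTA_T = 10.0          # deg F, assumption

for label, cfm in [("natural flow", 30_000),
                   ("with air handler, low", 100_000),
                   ("with air handler, high", 200_000)]:
    cap = borehole_capacity(cfm, ASSUMED_DELTA_T)
    print(f"{label}: {cfm:,} CFM -> {cap['tons']:.0f} tons ({cap['kw']:.0f} kW)")
```

A larger temperature difference between the surface air and the cavern raises these numbers proportionally, which is why the borehole contributes most in cold weather.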

Opportunities for the Future

When analyzing and predicting what data centers are going to look like in the future, a recurring theme starts to emerge: simplicity and lower cost. Because of the cost pressures facing IT departments and CFOs alike, underground data centers using hybrid water, air and rock cooling mechanisms are an increasingly attractive option.

There are even opportunities to turn these facilities into energy creators. For example, by adding power generating turbines atop boreholes, operators can harness the power of heat rising from the data centers below. Furthermore, by tapping into natural gas reserves, subterranean data centers could become a prime energy source, thus eliminating the need for generators and potentially achieving a power usage effectiveness measurement of less than one. The reality is that if you know Mother Nature well, you can work with her – she's very consistent – and the more we learn, the more promising the future of data center design looks.

Industry Perspectives is a content channel at Data Center Knowledge highlighting thought leadership in the data center arena.