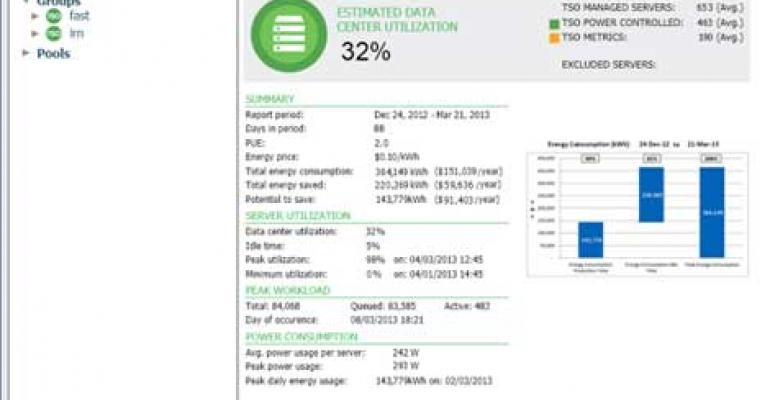

A screen shot from the TSO Logic energy management software, which launches today.

The first wave of innovation in data center efficiency focused on energy management between the grid and the rack. The next wave is focusing on managing power usage on the server, and optimizing for specific applications. That's the goal of TSO Logic, which has launched its new energy efficiency software for data centers.

TSO Logic is advancing "Application Aware Power Management," with software that analyzes activity at the application level and manages power usage at the server level, throttling idle servers or turning them off completely.

"Most data centers leave all of their servers on all of the time, regardless of the actual demand," said Aaron Rallo, CEO and founder of TSO Logic. "We think that’s like leaving your car running all day just in case you have to drive somewhere. I started TSO Logic after first-hand experience running businesses that relied on large-scale server farms. Server demand would go up and down, but our huge power bill was always the same. The lack of data, insights, and viable solutions was frustrating."

Recognition from Uptime

The company says the software can cut server power costs by more than 50 percent, and gives visibility into how data center power dollars are spent. TSO Logic's claims got early validation from the Uptime Institute, which awarded the company a Green Enterprise IT Award for a pre-release deployment of its solution with Toronto-based digital media studio Arc Productions. The TSO Logic product suite identified 56 percent potential server energy savings at the studio’s data center, which houses more than 600 servers. Now Arc Productions is using TSO Power Control to realize those savings.

Server power capping isn't new, and has been available for years from vendors including Intel and HP. There are other software players in this space, including Emerson Network Power, whose Trellis DCIM software is being used by Joyent to track app-level power usage.

Power capping and management have long offered great potential to improve efficiency, but some companies have been apprehensive about turning servers off or down to capture the savings. TSO's approach seeks to create a comfort level for customers with very granular management functions, allowing fine-tuned control over which servers are monitored, what days and times those servers are managed, and how aggressively they want to save energy. Relevant data is displayed on a dashboard that allows graphing of the data over longer periods of time.

"It makes a great tool for an IT guy to hand the data to the CFO and say 'here's what's trending, here's what's going on,'" said Rallo.

Targeting Variable Workloads

TSO Logic says it is already managing 2,165 enterprise servers, and helping these pre-release customers save on server power costs while reducing their environmental footprints. The company believes it can bring significant cost savings to anyone with variable workloads, such as content providers, digital animation studios and retailers, to name just a few examples.

The majority of data centers experience what is known as variable load, which simply means that the demand on servers varies from hour to hour. The problem is that in order to stay prepared for periodic surges - "peak demand" or "peak load" - these data centers must keep running at full capacity all of the time. This means that energy is wasted on idle servers, which TSO aims to address.

The product suite consists of TSO Metrics and TSO Power Control. The two software toolsets are deployed together in one install, typically on a single server. The company says the suite has no negative impact on server performance and requires no significant changes to data center infrastructure. The software uses application-level inspection to determine how much of a data center’s power draw is going toward revenue-generating activities versus idle servers. That insight is supposed to drive electricity savings without sacrificing performance, by automatically controlling the power state of servers based on application demand.
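To make the idea of demand-driven power control concrete, here is a minimal Python sketch. It is not TSO Logic's algorithm, which the company has not published here. It simply illustrates one way a controller could decide how many servers to keep powered on for a given application load. The names `Policy`, `servers_needed` and `plan_power_states`, and the parameters `capacity_per_server`, `headroom` and `min_active`, are all hypothetical and chosen for illustration; the `headroom` setting stands in for how aggressively an operator wants to save energy.

```python
import math
from dataclasses import dataclass


@dataclass
class Policy:
    """Hypothetical tuning knobs; none of these names come from TSO Logic."""
    capacity_per_server: float  # requests/sec one server can handle (assumed)
    headroom: float             # spare capacity to keep, e.g. 0.25 = 25 percent
    min_active: int             # never drop below this many powered-on servers


def servers_needed(demand: float, policy: Policy) -> int:
    """Return how many servers should stay on for the current demand."""
    raw = demand * (1.0 + policy.headroom) / policy.capacity_per_server
    return max(policy.min_active, math.ceil(raw))


def plan_power_states(server_names, demand, policy):
    """Map each server name to 'on' or 'off'; the first N names stay on."""
    keep_on = min(len(server_names), servers_needed(demand, policy))
    return {
        name: ("on" if index < keep_on else "off")
        for index, name in enumerate(server_names)
    }


# Example: eight servers, each assumed to handle 100 requests/sec.
policy = Policy(capacity_per_server=100.0, headroom=0.25, min_active=2)
servers = [f"web-{n}" for n in range(1, 9)]
plan = plan_power_states(servers, demand=400.0, policy=policy)
```

A lower `headroom` value powers down more machines at a given load, while a higher `min_active` floor keeps a safety margin for sudden surges, which mirrors the trade-off between savings and comfort level described above.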