Craig Watkins is Tripp Lite Product Manager for Rack and Cooling Solutions.

Energy efficiency in the data center used to be an afterthought, if it was on the radar at all. It made sense at the time. If your data center felt uncomfortably cold, people were impressed. It was considered a sign that you were doing a good job keeping servers from overheating. Rack densities were lower, electricity was cheaper and nobody was paying attention to the electric bill.

Today, data center managers need to keep a close eye on energy costs. According to the Uptime Institute’s 2011 Data Center Survey, 97 percent of respondents said reducing energy use was either “somewhat” or “very” important, and 87 percent said the primary motivation was cost reduction. An Uptime Institute study also found that up to 70 percent of data center energy use is for cooling and air handling, so increasing cooling efficiency is vital to reducing costs. Start with the low-hanging fruit. You’ll be surprised how much you can save with a few simple steps.

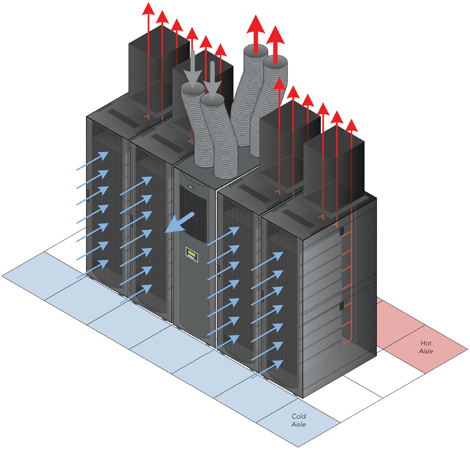

1. Implement Hot-Aisle/Cold-Aisle

You don’t need to keep your data center at meat locker temperatures. Instead, concentrate on removing hot air from the room before it recirculates. Separating hot and cold air is the key to cooling efficiency. Start by arranging racks in rows so the fronts face each other in cold aisles and the backs face each other in hot aisles. That prevents servers from drawing in hot air exhausted by servers in the adjacent row. According to studies by TDI Data Centers, hot-aisle/cold-aisle configurations can reduce energy use by up to 20 percent.
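To get a rough sense of what a reduction of up to 20 percent could mean on the electric bill, the arithmetic can be sketched as follows. This is only an illustration: the function name, the annual consumption and the electricity rate are hypothetical inputs, not figures from the studies cited, and applying the full 20 percent to a facility's total energy use is an assumption that gives an upper bound rather than a forecast.

```python
def estimate_annual_savings(annual_kwh, rate_per_kwh, reduction=0.20):
    """Return (kWh saved, dollars saved) for a fractional cut in energy use.

    reduction defaults to 0.20, the "up to 20 percent" upper bound,
    so the result is a best case, not an expected value.
    """
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    kwh_saved = annual_kwh * reduction
    return kwh_saved, kwh_saved * rate_per_kwh


# Hypothetical facility: 1,000,000 kWh per year at $0.10 per kWh.
kwh, dollars = estimate_annual_savings(annual_kwh=1_000_000, rate_per_kwh=0.10)
print(f"Best case: {kwh:,.0f} kWh and ${dollars:,.0f} saved per year")
```

Substituting your own metered consumption and utility rate, and a smaller `reduction` if you want a more conservative estimate, gives a quick way to compare the payoff against the cost of rearranging racks.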

2. Install Blanking Panels

Blocking off unused rack spaces isn’t just cosmetic. It forces cold air through your servers and prevents hot air from recirculating through the enclosure. Some blanking panels snap into place without tools, which saves significant installation time.

3. Organize Cables

Tangled cables block airflow. They prevent efficient cold air distribution under raised floors and cause heat to build up inside enclosures. In raised-floor environments, move cabling to overhead cable managers. Inside enclosures, use high-capacity cable managers to organize patch cables.

4. Replace Inefficient UPS Systems

Removing unnecessary heat sources helps cool the room. Replace traditional on-line UPS systems with energy-saving models to increase efficiency and reduce heat output, especially where redundant UPS systems operate below full capacity.
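The link between UPS efficiency and heat can be made concrete with a short calculation: whatever power a UPS draws but does not deliver to the load is dissipated as heat in the room. The sketch below is a simplified illustration. The function name, the 10 kW load and the 90 and 97 percent efficiency figures are hypothetical and do not describe any particular product, and the model ignores battery charging.

```python
WATTS_TO_BTU_PER_HR = 3.412  # 1 watt is roughly 3.412 BTU/hr


def ups_heat_watts(load_watts, efficiency):
    """Heat (in watts) dissipated by a UPS delivering load_watts.

    efficiency is output power divided by input power, as a fraction
    greater than 0 and at most 1.
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    input_watts = load_watts / efficiency
    return input_watts - load_watts


# Hypothetical comparison for the same 10 kW load.
older_unit = ups_heat_watts(10_000, 0.90)
newer_unit = ups_heat_watts(10_000, 0.97)

for label, heat in (("90% efficient", older_unit), ("97% efficient", newer_unit)):
    print(f"{label}: {heat:,.0f} W of heat ({heat * WATTS_TO_BTU_PER_HR:,.0f} BTU/hr)")
```

Because UPS efficiency generally falls at light load, running the calculation with the efficiency your units actually achieve at their present load, rather than the full-load rating, gives a more realistic picture for redundant configurations.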

5. Use Close-Coupled Cooling

Gartner Group reports that close-coupled cooling increases efficiency compared to traditional perimeter or raised-floor systems. Close-coupled cooling allows you to focus cooling where it’s needed most without lowering the temperature of the entire room. Its modular nature also allows data center managers to quickly reconfigure cooling to handle new equipment or overheating racks.

Some companies offer close-coupled cooling solutions that are completely self-contained and can be installed by IT staff without costly contractors, plumbing, piping, special ductwork, floor drains, water tanks or extra parts.

6. Isolate and Remove Hot Air

Figure: Isolate and remove hot air. Combining a hot-aisle/cold-aisle configuration with thermal duct rack enclosures and close-coupled, row-based air conditioning units creates a highly efficient data center cooling solution. (Graphic by Tripp Lite.)

Thermal duct rack enclosures route hot air through an overhead duct to the HVAC or CRAC return air stream. They isolate hot air so it can’t recirculate in the room. Convection carries hot air up through the duct, like a chimney, while positive pressure in the room and negative pressure in the plenum increase airflow.

Industry Perspectives is a content channel at Data Center Knowledge highlighting thought leadership in the data center arena.