Hugh Lindsay is the Director of Data Center Software Solutions, Offer & Strategy at Schneider Electric. As the owner of Schneider Electric’s StruxureWare for Data Centers program, Hugh provides vision and guidance to the software development teams across Schneider.

HUGH LINDSAY, Schneider Electric

For the better part of the last decade, the data center industry has been on an extremely aggressive and accelerated growth path. This whirlwind of expansion has created simultaneous improvements in design and in a whole host of technologies that have enabled dramatic advances in the size, density and reliability of a typical data center today compared to a few short years ago.

Yet, while there have been exceptional advances in data center design and in the technologies that have enabled this growth, significant limitations still exist. The quest for the most productive data center output continues, balanced against the competing constraints of availability and energy efficiency. More often than not, the barriers are neither technological nor financial: the underlying technologies already exist, and return-on-investment expectations are often met and can be exceeded with the right level of commitment. Instead, the hurdles stem from disconnects in how information is gathered and shared across organizational boundaries, and from parallel disconnects in the business processes that link the users and teams involved in data center management.

What is Data Center Infrastructure Management (DCIM)?

DCIM is a term that has been circulating in the data center industry for the past few years. It describes a category of management software oriented to the physical data center infrastructure: physical systems such as the electrical power network, cooling, networking connections, and even IT assets such as servers and storage equipment.

One challenge with DCIM, however, has been the lack of a succinct and commonly accepted definition. Fortunately, the opinions of data center analysts, the media, software vendors and the end users of these systems are coalescing around a common understanding of what a DCIM system should be.

That definition of DCIM can be summarized as follows:

“Data center infrastructure management (DCIM) systems collect and manage data about a data center’s assets, resource use and operational status throughout the data center lifecycle. This information is then distributed, integrated, analyzed and applied in ways that help managers meet business and service-oriented goals, and optimize the data center’s performance.”

This definition emphasizes the physical infrastructure “assets” as well as common software functions such as data collection and analysis. It also highlights the connections between the software and the data center’s business processes and services, in areas such as uptime, efficiency, and change and capacity management.

DCIM: Gathering, Analyzing and Acting

What is most important to understand here is that, at its core, DCIM is about collecting and then acting upon information related to the complete physical infrastructure. That information needs to be accurate and relevant to the current state of the data center at any point in time (where time can be measured in years, months, days, hours, minutes, seconds or microseconds) to support accurate and informed decision making. In theory, this should be simple to execute, given that all of the technology required to instrument and meter the data center infrastructure and collect data has existed for a very long time.

Information Hurdles Continue

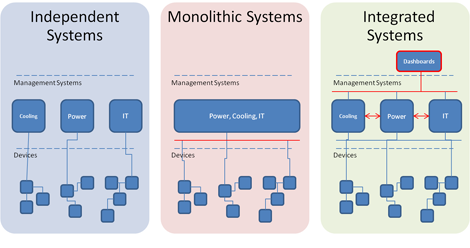

Still, in practice, many DCIM implementations reach an information barrier: data can be collected from any intelligent device in the infrastructure, yet the tool does not present it in a workable form. Either the information is kept in discrete silos to meet the needs of a specific expert user group or, if it is aggregated into a single “master system,” the software lacks the level of detail any expert user needs to do his or her job effectively. A more effective approach is to leverage open communication protocols and to architect the communication pathways so that data can be gathered easily into any expert system and detailed information can be exchanged between systems. Figure 1 is a simplified comparison of the communication architectures of these DCIM approaches.

Figure 1: A comparison of DCIM system communication architectures

(Graphic courtesy of Schneider Electric.)

The integration approach overcomes the information barriers of other past and present approaches and can often leverage the existing investments in instrumentation and systems. This method opens the door to vastly improved opportunities for collaboration between users. Additionally, the inherent modularity of connections between systems allows for additional flexibility for future updates as new functionality is introduced.
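To make the integration idea concrete, here is a minimal Python sketch. It assumes a hypothetical in-memory `IntegrationBus` that stands in for whatever open protocol and messaging layer a real deployment would use. Each device reading is published once, with full detail, and any expert system can subscribe to the domains it cares about. The `Reading` fields, device IDs and domain names are illustrative and are not part of any product API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Reading:
    """One measurement from an intelligent device (hypothetical schema)."""
    device_id: str
    domain: str   # e.g. "power" or "cooling"
    metric: str
    value: float
    unit: str


class IntegrationBus:
    """Hypothetical hub: devices publish once, any expert system can subscribe."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[Reading], None]]] = {}

    def subscribe(self, domain: str, handler: Callable[[Reading], None]) -> None:
        # Register an expert system's handler for one domain of readings
        self._subscribers.setdefault(domain, []).append(handler)

    def publish(self, reading: Reading) -> None:
        # Deliver the full-detail reading to every system subscribed to its domain
        for handler in self._subscribers.get(reading.domain, []):
            handler(reading)


# Three independent expert systems, modeled here as simple logs
power_log: list[Reading] = []
energy_log: list[Reading] = []
cooling_log: list[Reading] = []

bus = IntegrationBus()
bus.subscribe("power", power_log.append)
bus.subscribe("power", energy_log.append)  # energy/finance team sees the same detail
bus.subscribe("cooling", cooling_log.append)

bus.publish(Reading("pdu-01", "power", "active_power", 4.2, "kW"))
bus.publish(Reading("crac-03", "cooling", "supply_air_temp", 22.5, "degC"))
```

Adding another expert system takes one more `subscribe` call. That is the kind of modularity the integration approach relies on: the same detailed reading reaches both the power team and the energy team without either a silo or a monolith in between.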

Overcoming Informational Barriers: an Integration Approach

Information, in and of itself, is the foundation of balanced decision making, and this is where DCIM software comes into play. Essentially, the ability of a data center operator to capture relevant information about the performance of the data center infrastructure has existed, in large part, for decades.

For most data centers, the current state of what could be classified as data center infrastructure management is a collection of independent and “closed” monitoring, planning and control systems. Typically, there is at least one software tool for each of the physical subsystems: electrical, cooling and mechanical, IT infrastructure, IT assets, and so on. While this is a great foundation to build on, the disconnects between the various expert users and the information systems each one uses can lead to an acute lack of continuity. A change contemplated and implemented in one area may well benefit that area, yet impose consequential costs in the others.

A PUE Scenario

Take, for example, the present emphasis on data center operators improving their Power Usage Effectiveness (PUE) metric, combined with the concurrent need to reduce energy consumption and lower energy costs. On the surface, since both goals are intended to improve efficiency, these objectives should be aligned, so that an improvement in one area leads to benefits in the other. This is not always the case. In many organizations, the group responsible for the IT infrastructure and the PUE metric is not connected with the part of the company that pays the electrical bills and owns the overall energy efficiency targets. Without the proper connections between the two groups, and between the information systems they use to manage their respective domains of expertise, it is possible, and quite common, for the IT infrastructure team to make changes that improve PUE but increase overall energy consumption and costs.

For example, an IT team seeking to improve PUE from 1.9 to 1.7 decides that the simplest approach is to raise the computer room air conditioning (CRAC) outlet temperature by a few degrees. PUE is a conversion efficiency metric that compares the total energy delivered to the facility with the energy consumed by the IT equipment (a PUE of 2 simply means that 2 kWh of electricity are required to deliver 1 kWh to the IT load). Since the bulk of the energy beyond the IT load is used to create and deliver the cold air that keeps the servers cool, raising the CRAC temperature reduces the supporting infrastructure’s energy use and improves the conversion ratio.
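The parenthetical definition can be written out explicitly. The symbols here are introduced for illustration: $E_{\text{total}}$ is the total energy delivered to the facility, $E_{\text{IT}}$ is the energy consumed by the IT equipment, and $E_{\text{infra}}$ is the energy used by the supporting infrastructure.

```latex
\mathrm{PUE} = \frac{E_{\text{total}}}{E_{\text{IT}}}
             = \frac{E_{\text{IT}} + E_{\text{infra}}}{E_{\text{IT}}}
             = 1 + \frac{E_{\text{infra}}}{E_{\text{IT}}}
```

A PUE of 2 therefore means $E_{\text{infra}} = E_{\text{IT}}$: 2 kWh in for 1 kWh of IT load. Raising the CRAC temperature shrinks $E_{\text{infra}}$, which lowers the ratio. Anything that raises $E_{\text{IT}}$ also lowers the ratio, even though it increases $E_{\text{total}}$.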

The strategy succeeds and the PUE value does improve, but there is an unintended consequence. With warmer air reaching the servers, the server fans must work harder to keep the servers cool. In this situation, the additional energy consumed by the servers is greater than the energy saved by raising the CRAC temperature. The IT team meets its metric, but the overall energy costs for the data center go up.
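The scenario can be checked with a little arithmetic. The Python sketch below uses hypothetical energy figures, not taken from the article, chosen to reproduce the 1.9 to 1.7 PUE change. The key modeling assumption is that server fans are part of the IT load, so their extra consumption lands in the PUE denominator rather than counting against PUE.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnergySnapshot:
    it_kwh: float     # IT equipment energy (servers, storage, network), including server fans
    infra_kwh: float  # supporting infrastructure energy (cooling, power distribution, lighting)

    @property
    def total_kwh(self) -> float:
        return self.it_kwh + self.infra_kwh

    @property
    def pue(self) -> float:
        # PUE = total facility energy / IT equipment energy
        return self.total_kwh / self.it_kwh


def raise_crac_setpoint(s: EnergySnapshot, cooling_saved_kwh: float,
                        extra_fan_kwh: float) -> EnergySnapshot:
    # Warmer supply air: the cooling plant does less work, but the server fans do more,
    # and that fan energy is booked as IT load.
    return EnergySnapshot(it_kwh=s.it_kwh + extra_fan_kwh,
                          infra_kwh=s.infra_kwh - cooling_saved_kwh)


# Hypothetical figures for one reporting period
before = EnergySnapshot(it_kwh=1000.0, infra_kwh=900.0)
after = raise_crac_setpoint(before, cooling_saved_kwh=100.0, extra_fan_kwh=150.0)

print(f"PUE: {before.pue:.2f} -> {after.pue:.2f}")                               # PUE: 1.90 -> 1.70
print(f"Total energy: {before.total_kwh:.0f} kWh -> {after.total_kwh:.0f} kWh")  # Total energy: 1900 kWh -> 1950 kWh
```

With these assumed numbers, PUE falls to about 1.70 while total energy rises by 50 kWh. That is the mismatch between the IT team's metric and the energy bill.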

The Integrated Approach Scenario

Connecting the users, groups and information systems allows for a more balanced and holistic approach to effective data center infrastructure management.

Given the same PUE and energy efficiency scenario, integrated systems allow anticipated changes to be modeled first for their potential impact on both PUE and energy efficiency. The IT team can open a discussion with the facilities team to find an approach that meets the PUE objective while also considering the consequential impact on energy consumption and costs. The CRAC temperature can still be increased, but the combined team determines that ducting the heat from the servers back to the CRAC units, instead of allowing it to mix with the data center air, creates a better balance: PUE is still reduced, and overall energy use and costs are improved as well.
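An integrated system lets both goals be checked before anything changes. The sketch below, again with hypothetical figures, screens candidate plans using an assumed rule: a change is accepted only if it meets the IT team's PUE target and also reduces total facility energy. The `acceptable` function, the plan names and all of the numbers are illustrative. They do not represent a DCIM product feature or measured results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    it_kwh: float     # IT equipment energy (includes server fans)
    infra_kwh: float  # supporting infrastructure energy (cooling, distribution, lighting)

    @property
    def total_kwh(self) -> float:
        return self.it_kwh + self.infra_kwh

    @property
    def pue(self) -> float:
        return self.total_kwh / self.it_kwh


def acceptable(baseline: Plan, candidate: Plan, target_pue: float) -> bool:
    # Hypothetical screening rule: meet the PUE target (IT team's goal)
    # AND lower total facility energy (energy/finance team's goal).
    return candidate.pue <= target_pue and candidate.total_kwh < baseline.total_kwh


baseline = Plan("current", it_kwh=1000.0, infra_kwh=900.0)
candidates = [
    Plan("raise CRAC setpoint only", it_kwh=1150.0, infra_kwh=800.0),
    Plan("raise setpoint + duct server exhaust to CRACs", it_kwh=1020.0, infra_kwh=700.0),
]

for plan in candidates:
    verdict = "accept" if acceptable(baseline, plan, target_pue=1.7) else "reject"
    print(f"{plan.name}: PUE {plan.pue:.2f}, total {plan.total_kwh:.0f} kWh -> {verdict}")
# raise CRAC setpoint only: PUE 1.70, total 1950 kWh -> reject
# raise setpoint + duct server exhaust to CRACs: PUE 1.69, total 1720 kWh -> accept
```

Checking both conditions in one place is the point of the exercise. Neither team's metric alone would have rejected the first plan.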

Integrated DCIM Brings Improved Insight & Decision Support

The PUE example above is just one illustration of the potential benefits of a modular and integrated DCIM solution as a means for active and effective data center management. A complete DCIM implementation can become the key connection point for improved insight and decision support across all data center business processes, including asset management, change and capacity planning, crisis management, energy and resource sustainability, and the ever-present financial management challenges.

Compared with siloed, independent partial systems or a one-size-fits-all monolithic system, the integration approach to DCIM leverages modularity and open data and information connections. It delivers the right information to each expert user and gives those users the opportunity to connect, collaborate and achieve balanced results.

Industry Perspectives is a content channel at Data Center Knowledge highlighting thought leadership in the data center arena.