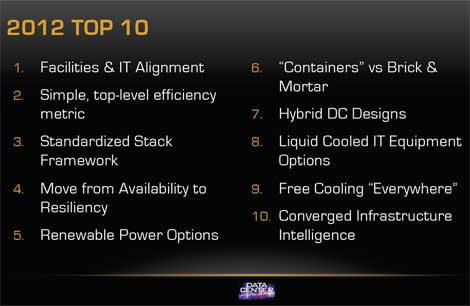

Can IT and facilities work together to make data centers more effective? Will modular designs and renewable energy gain a greater foothold? Those are among the key challenges facing the industry, according to Data Center Pulse, which has released its annual "Top 10" list of priorities for data center users.

The Top 10 was released in conjunction with last week's Data Center Pulse Summit 2012, which was held alongside The Green Grid Technical Forum in San Jose, Calif. The 2012 list differs substantially from those of 2011 and previous years, as end users focus on applying new approaches to data center design and management. Six entries in the Top 10 are new, including the number one challenge. Here's the list:

1. Facilities and IT Alignment: One of the oldest problems in the data center sector is the disconnect between facilities and IT, which effectively separates workloads from their cost. This issue is aimed at end users rather than vendors, who have traditionally been the intended audience for the Top 10. Large organizations such as Microsoft, Yahoo, eBay, Google and Facebook have eliminated many of the barriers between facilities and IT, a trend that needs to spread to the rest of the industry, Data Center Pulse says.

2. Simple, top-level efficiency metric: Data Center Pulse has proposed a new metric, Service Efficiency, which 50 DCP members reviewed in a two-and-a-half-hour discussion at the Summit. The proposal is a framework intended to show the actual "MPG" (miles per gallon) of the work done in a data center. Details of the metric will be released publicly this summer.

3. Standardized Stack Framework: One of Data Center Pulse's key initiatives has been the development of its Data Center Stack, which is modeled on the OSI Model's division of network architecture into seven layers. The standardized Data Center Stack likewise segments operations into seven layers to help align facilities and IT. Version 2.1 has been released, and DCP intends to keep working with other industry groups such as The Green Grid to advance the stack.

4. Move from Availability to Resiliency: With many web applications now spread across multiple facilities or regions, the probability that the full system fails has become more important than the uptime of any single site, according to DCP. That means shifting the focus to application resiliency rather than the performance of specific buildings. Aligning applications with their actual availability requirements can lower costs, even as it increases the uptime of a service.
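A rough illustration of why service-level resiliency can matter more than single-site uptime: if a service runs active-active in n sites, each available a fraction a of the time, it is down only when every site is down at once, so its availability is 1 - (1 - a)^n. The sketch below assumes site failures are independent, which is a simplification (real outages are often correlated), and the site availability figures are hypothetical, not DCP data.

```python
def service_availability(site_availability: float, sites: int) -> float:
    """Availability of a service replicated across `sites` sites.

    Assumes (hypothetically) that the service stays up while at least one
    site is up and that site failures are statistically independent.
    """
    if not 0.0 <= site_availability <= 1.0:
        raise ValueError("site_availability must be between 0 and 1")
    if sites < 1:
        raise ValueError("sites must be at least 1")
    # The service is down only when every site is down at the same time.
    return 1.0 - (1.0 - site_availability) ** sites


if __name__ == "__main__":
    # Hypothetical figures: one highly redundant site vs. two less redundant ones.
    one_premium_site = service_availability(0.9999, 1)
    two_basic_sites = service_availability(0.99, 2)
    print(f"one site at 99.99%: {one_premium_site:.6f}")
    print(f"two sites at 99%:   {two_basic_sites:.6f}")
```

Under these assumptions, two sites at 99 percent reach roughly the same service availability as one site at 99.99 percent; whether that is actually cheaper depends on real costs and on how correlated the sites' failures are.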

5. Renewable Power Options: The lack of cost-effective renewable power is a growing problem for data centers, which use enormous volumes of electricity. Data Center Pulse sees potential for progress in approaches that have worked in Europe, where renewable power is more readily available than in the U.S. These include focusing business development opportunities at the state level, and encouraging alignment between end users, utilities, government and developers.

6. "Containers" vs. Brick & Mortar: Are containers and modular designs a viable option? As more companies adopt modular approaches, Data Center Pulse notes that "one size does not fit all, but it is fitting quite a bit more." For many companies, the best strategy is likely to be a hybrid approach that combines modular designs with traditional data center space for different workloads.

7. Hybrid DC Designs: The hybrid approach applies not only to modular designs but also to the Tier system and to the redundancy of mechanical and electrical systems. A growing number of data centers are saving money by dividing facilities into zones, each with a level of redundancy matched to a defined workload. "Modularity, multi-tier, application resiliency, standardization and supply chain are driving different discussions," notes DCP. "Traditional approach to designing and building data centers may be missing business opportunities."

8. Liquid Cooled IT Equipment Options: For many IT operations, the goal is to increase the amount of work done per watt. That is leading to higher power densities, which are testing the limits of air cooling. As more data centers come to resemble high-performance computing (HPC) installations, DCP says direct liquid cooling will become a more attractive option.

9. Free Cooling "Everywhere": The recent Green Grid case study on eBay's Project Mercury data center demonstrates that 100 percent free cooling is possible even in places like Phoenix, where summer temperatures can exceed 100 degrees Fahrenheit. Data Center Pulse says that as data center designers challenge assumptions, new designs and products will support free cooling everywhere.

10. Converged Infrastructure Intelligence: Data center operators now need to treat their infrastructure as a single system and to be able to measure and control many elements of their facilities. That means converging infrastructure instrumentation and control systems and connecting them to IT systems. Data center infrastructure management (DCIM) is part of this trend, but it will also be important to standardize the interfaces and protocols that link components, according to Data Center Pulse.