Kevin Wade is director of product marketing at Cyan, which offers packet optical solutions.


A recent Uptime Institute survey revealed that 40 percent of data center owners and operators will build a new facility in 2011-2012. For large and mid-sized data center operators, this ongoing expansion to new sites, together with colocation in meet-me rooms, participation in Carrier Ethernet Exchanges and the adoption of cloud-based services, is driving a dramatic rise in inter-data-center traffic. Expanding WAN bandwidth to keep pace with this growth traditionally involves increasing or adding leased-line capacity for each data center site, but this approach can be expensive and can involve complex negotiations with multiple telecom carriers.

The increasing availability of dark fiber is leading a growing number of operators to deploy their own data center interconnect (DCI) networks. Beyond reducing leased-line costs, or even eliminating them altogether, this approach gives data center operators the strategic advantage of controlling their network end to end, allowing them to meet increasing WAN bandwidth requirements more quickly and efficiently.

For data center operators who choose this path, Ethernet and DWDM are the technologies of choice. Ethernet is preferred for its overall familiarity and its interoperability with the data center LAN. DWDM is favored because it makes the most of the available dark fiber. From a design perspective, the typical Ethernet over DWDM network architecture combines separate Ethernet switch/router and optical transport platforms at each site to build the data center interconnect fabric.

A number of inefficiencies make this network design less than optimal for interconnecting data centers. For one, basic layer 2 VLAN switching on Ethernet switch/routers doesn’t deliver the scale, reliability or line-rate performance required for mission-critical DCI networks. Further, optical transport platforms designed for deployment by telecom carriers can often be complex to deploy and to operate. Finally, using two separate physical platforms makes management of the data center interconnect network, and the provisioning of capacity across the optical and Ethernet layers, a complicated and time-consuming process.

Connection-Oriented Ethernet – Optimized for Data Centers

A high-performance implementation of Ethernet technology referred to as connection-oriented Ethernet (COE) is optimized for meeting the scalability and resiliency requirements of data center interconnect networks. Widely used by carriers and other network service providers today, COE is an evolution of traditional Ethernet and VLAN standards that addresses recognized scalability limitations and introduces traffic engineering capabilities to make the technology suitable for use in large, distributed networks. COE is based on a combination of several inter-related standards, including:

- IEEE 802.1ah (also known as PBB or “MAC-in-MAC”) addresses the scaling limits of traditional Ethernet MAC table sizes and VLAN IDs by encapsulating customer MAC addresses within backbone MAC addresses, enabling support for up to 16.7 million individual services

- IEEE 802.1Qay (also known as PBB-TE) adds traffic engineering capabilities to Ethernet to enable the provisioning of explicit connections that provide deterministic bandwidth and guaranteed QoS at each node along a defined path

- IETF MPLS-TP (Multiprotocol Label Switching – Transport Profile), a developing standard that simplifies the MPLS protocol to serve as a transport network technology and enable convergence with multiservice IP/MPLS core networks

- ITU-T G.8031/G.8032 provide carrier-grade Ethernet protection switching equivalent to that of SONET (50 ms or less) over linear, ring, or multi-ring network topologies

- Ethernet OAM (Operations, Administration & Maintenance) standards, including IEEE 802.3ah, IEEE 802.1ag and ITU-T Y.1731, which enable improved performance monitoring and fault management at the Ethernet service and link levels
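
The 16.7 million figure cited for 802.1ah follows from identifier width: a traditional 802.1Q VLAN ID is a 12-bit field, while the 802.1ah service instance identifier (I-SID) is a 24-bit field. The short calculation below is only an illustration of that arithmetic; the constant and function names are our own.

```python
# Compare the identifier space of an 802.1Q VLAN ID with an 802.1ah I-SID.
VLAN_ID_BITS = 12   # width of the VLAN ID field in an 802.1Q tag
ISID_BITS = 24      # width of the I-SID field in an 802.1ah I-TAG


def id_space(bits: int) -> int:
    """Number of distinct values an identifier field of this width can hold."""
    return 2 ** bits


vlan_ids = id_space(VLAN_ID_BITS)    # two of these values are reserved
service_ids = id_space(ISID_BITS)    # roughly 16.7 million

print(f"802.1Q VLAN IDs: {vlan_ids:,}")
print(f"802.1ah I-SIDs:  {service_ids:,}")
```

The jump from a few thousand VLANs to millions of service instances is what lets a single layer 2 domain span many data center sites and tenants.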

The key objective of these COE standards has been to transform Ethernet into a transport technology and overcome the limitations found with traditional Ethernet switching and routing. By implementing Ethernet-centric traffic engineering and protection, COE allows data center operators to provision resilient, large-scale layer 2 domains across the DCI network and eliminate the need to use more complex layer 3 and/or MPLS protocols for traffic engineering, service isolation or service recovery. The predictable bandwidth and QoS, sub-50 millisecond (ms) protection switching and end-to-end performance monitoring and management provided by COE make the technology ideally suited for data center interconnect networks.
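
To make the idea of an explicitly provisioned connection with deterministic bandwidth more concrete, here is a minimal sketch of per-hop admission control. It is not how any particular COE platform works: the `Link` class, the `provision` function, the site names and the capacities are all hypothetical, and a real system would also handle protection paths and QoS classes.

```python
from dataclasses import dataclass


@dataclass
class Link:
    """One directed hop between two sites (hypothetical model)."""
    capacity_mbps: int
    reserved_mbps: int = 0

    def headroom(self) -> int:
        return self.capacity_mbps - self.reserved_mbps


def provision(links: dict[tuple[str, str], Link], path: list[str], mbps: int) -> bool:
    """Reserve `mbps` on every hop of `path`, or reserve nothing at all."""
    hops = list(zip(path, path[1:]))
    # Admission check first, so a rejected request leaves no partial reservation.
    if any(hop not in links or links[hop].headroom() < mbps for hop in hops):
        return False
    for hop in hops:
        links[hop].reserved_mbps += mbps
    return True


# Two 10 Gbps hops connecting three hypothetical sites.
links = {("dc1", "dc2"): Link(10_000), ("dc2", "dc3"): Link(10_000)}
first = provision(links, ["dc1", "dc2", "dc3"], 6_000)   # fits on both hops
second = provision(links, ["dc1", "dc2", "dc3"], 6_000)  # would oversubscribe
print(first, second)
```

Because bandwidth is checked and reserved hop by hop along a defined path, an admitted connection cannot be starved by later requests, which is the property the text describes as deterministic bandwidth.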

Packet + Optical for Scale and Cost-Efficiency

COE technology is implemented in a new class of “packet-optical” transport platform (P-OTP). As their name implies, P-OTPs combine Ethernet (packet) and optical capabilities in a single system. In addition to COE, the packet layer functionality provided by P-OTPs includes line-rate, fully non-blocking 1/10 GbE layer 2 switching across all ports. The optical layer functionality provided by P-OTPs includes DWDM transport, for low-cost point-to-point applications over distances of up to 180 km per span, as well as integrated multi-degree reconfigurable optical add-drop multiplexer (ROADM) capabilities for network topology flexibility.

Through this integration, P-OTPs allow data center operators to maximize fiber scalability for high-density, ultra-low-latency Ethernet transport. Further, because P-OTPs collapse Ethernet and optical layer functions into a single system, space and power consumption are considerably lower than with separate Ethernet switch/router and optical transport platforms.

Tying it All Together With Multi-layer Management

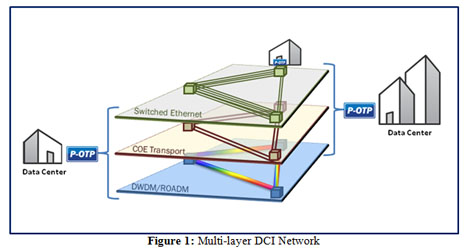

DCI networks consist of multiple independent network layers, each of which plays a role in determining end-to-end performance. Depending on the operator, the network can include a physical fiber layer and rise up through the DWDM, TDM/SONET, COE and Ethernet switching layers, as illustrated in Figure 1. Historically, each of these layers has been managed and operated independently, typically through different element management systems that have no awareness of adjacent layers or network resources. The resulting management complexity leads to operational inefficiencies for data center operators such as delayed resolution of network issues and poor utilization of network resources, and ultimately higher costs.

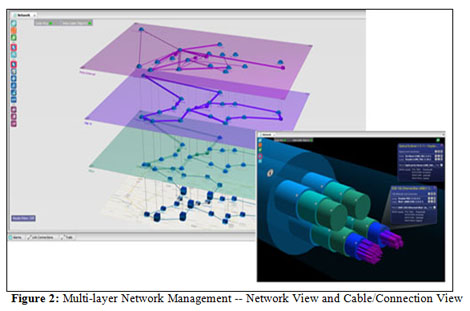

A new generation of multi-layer management systems simplifies the implementation, monitoring and operation of DCI networks that use P-OTPs. Multi-layer network management relies on object-based intelligence that provides awareness of network resources such as switching/transport nodes, connections, and the various network layers, as well as their inter-dependencies. Advanced 3-D visualization also improves operational efficiency by delivering intuitive, graphical views of the network that accelerate capacity provisioning and simplify fault correlation across multiple layers, as shown in Figure 2.
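
As a rough illustration of what awareness of inter-dependencies can mean for fault correlation, the sketch below models each network object together with the lower-layer objects it rides on, then walks upward from a failed object to find everything it affects. The object names, the `DEPENDS_ON` table and the `impacted_by` function are invented for this example and do not describe any specific management product.

```python
from collections import defaultdict, deque

# Each object lists the lower-layer objects it depends on (all names hypothetical).
DEPENDS_ON = {
    "wavelength-1": ["fiber-A"],
    "wavelength-2": ["fiber-A"],
    "wavelength-3": ["fiber-B"],
    "coe-tunnel-1": ["wavelength-1"],
    "coe-tunnel-2": ["wavelength-3"],
    "evc-storage": ["coe-tunnel-1"],
    "evc-backup": ["coe-tunnel-2"],
}


def impacted_by(failed: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every upper-layer object that directly or indirectly rides on `failed`."""
    # Invert the table: lower-layer object -> objects carried on top of it.
    carried_by = defaultdict(list)
    for upper, lowers in depends_on.items():
        for lower in lowers:
            carried_by[lower].append(upper)

    # Breadth-first walk up through the layers.
    impacted, queue = set(), deque([failed])
    while queue:
        for upper in carried_by[queue.popleft()]:
            if upper not in impacted:
                impacted.add(upper)
                queue.append(upper)
    return impacted


affected = impacted_by("fiber-A", DEPENDS_ON)
print(sorted(affected))
```

With per-layer element managers, each of the affected objects would raise its own alarm in a separate system; a shared dependency model lets an operator trace them all back to the single fiber fault.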

Improved Space and Efficiency Coupled with Scalability & Resiliency

Multi-layer network management systems, coupled with P-OTPs that integrate connection-oriented Ethernet and DWDM/ROADM capabilities, provide an ideal solution for data center operators to deploy and manage their own high-capacity data center interconnect networks. In addition to providing significant scalability and resiliency advantages over the typical DCI network design that uses separate routing and optical transport platforms, the packet-optical DCI approach offers improved space and power efficiency, operational simplicity, and performance across multiple network layers.

Industry Perspectives is a content channel at Data Center Knowledge highlighting thought leadership in the data center arena.