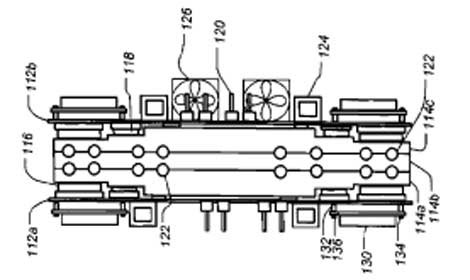

A diagram from a Google patent showing a cross-section of a design for a liquid-cooled server assembly featuring a heat sink with motherboards on either side.

Has Google adopted liquid cooling for its servers? The company isn't saying, but it has patented a design for a "server sandwich" in which two motherboards are attached to either side of a liquid-cooled heat sink.

The design is among a number of Google patents on new cooling techniques for high-density servers that have emerged since the company's last major disclosure of its data center technology in April 2009. Several of these patents deal with cooling innovations that apply either liquid cooling or air cooling directly to server components.

Google's patents run the gamut of data center technologies, and it's rarely clear which are conceptual and which have actually been implemented in Google's data centers. But the liquid cooling patent shows that Google is focused on packing even more computing power into the racks in its data centers, and looking beyond air cooling to solve its design challenges. The patent also envisions the use of chillers, which Google has been seeking to minimize or eliminate in some recent designs.

Custom Motherboards, Heat Sinks

Google has customized much of the operation of its data centers, which serve as the engines powering its massive Internet business. Google builds its own servers and networking switches, as well as the racks and containers that hold them. This allows the company to develop custom solutions for data center design problems.

The liquid cooling design patented by Google features custom motherboards with components attached to both sides. Heat-generating processors are placed on the side of the motherboard that comes in contact with the heat sink, which is an aluminum block containing tubes that carry cooling fluid. Components that produce less heat, like memory chips, are placed on the opposite side of the motherboard, adjacent to fans that provide air cooling for these components.

Motherboards are attached to either side of the heat sink, creating a "server sandwich" assembly that can be housed in a rack. Diagrams submitted with the patent depict cabinets filled with 10 of these liquid-cooled assemblies, suggesting each takes up 4U in a rack.
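The 4U estimate follows from simple rack arithmetic. As a rough sketch, assuming a standard 42U cabinet (the rack height is an assumption here, not a figure from the patent), dividing the available rack units among 10 assemblies gives:

```python
# Rough rack-space arithmetic behind the "4U per assembly" estimate.
# ASSUMPTION: a standard 42U cabinet; the patent diagrams do not state rack height.
RACK_HEIGHT_U = 42
ASSEMBLIES_PER_CABINET = 10  # count shown in the patent diagrams


def max_units_per_assembly(rack_height_u: int, assemblies: int) -> int:
    """Largest whole number of rack units each assembly could occupy."""
    return rack_height_u // assemblies


units = max_units_per_assembly(RACK_HEIGHT_U, ASSEMBLIES_PER_CABINET)
leftover = RACK_HEIGHT_U - units * ASSEMBLIES_PER_CABINET

print(f"Each assembly: up to {units}U; unused rack space: {leftover}U")
```

Under that assumption, 10 assemblies at 4U each occupy 40U, leaving 2U for other equipment such as networking gear.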

Similar to Cold Plate

The heat sink is similar to a cold plate, which can cool heat loads of up to 80 kilowatts per rack in some implementations. Google's patent says the heat sink could be configured to use either chilled water or a liquid coolant.

Increasing the density of a rack can allow data center operators to pack more computing power into each facility, creating a more efficient infrastructure and reducing the cost and pace of data center expansion.

Liquid cooling is common in supercomputing and high performance computing (HPC), where facility operators manage computing clusters producing high heat loads. Rising heat densities have spurred predictions that liquid cooling would be more widely adopted, but some data center managers remain wary of having water near their equipment.

In recent years, companies running the largest search engines and cloud computing infrastructures have focused on getting the most mileage out of air cooling, using fresh air economizers and raising the temperature in their data centers. Google was an early adopter of these techniques.

Google's patent for "Motherboards with Integrated Cooling" was filed in late 2006 and granted last July. It lists Google employees Jimmy Clidaras and Winnie Leung as the inventors. Clidaras is Google's Director of Data Center Research and Development, while Leung is the company's Mechanical Engineering Manager.

Focus on Closely-Coupled Cooling

Clidaras and Leung previously collaborated on a patent for a rack cooling design featuring a series of "air wands," adjustable pipes that deliver small amounts of cold air to components within a server tray. The chilled air enters the top of a rack through two vertical standpipes, which branch off into the air wands: long, thin pipes lined with vents that release cold air. See A Closer Look at Google's New Cooling Design for more.

Google has used data center containers to isolate hot and cold air and gain greater control over airflow to its servers. Some of its container-based designs date to 2006, and may not reflect the current state of Google's thinking on data center design. Recent patents submitted by Google and its affiliates include some designs featuring containers and others optimized for a traditional raised-floor environment.
